The bombast with which the so-called Twitter Files have been released is incongruous with the mundanity of their content. Even so, as the circus folds up the big top and the barkers return to their Substacks, it is worth a thorough retrospective to put these breathlessly delivered, revelation-flavored products in context.

That few large news outlets have opted to report much of the information in these threads has been attributed to complaisance, partisanship, complicity with government interference or various species of corruption. The banal truth is that, if other newsrooms are anything like our own, they read each as a matter of diligence, and simply found nothing new or interesting to report, or what little there was contaminated by the dubious circumstances of their presentation.

What’s important to understand at the outset — and what the authors make clear from the start — is that no one involved in the selection and analysis of the internal communications appears to have any familiarity with (let alone expertise in) how social media and tech platforms are moderated or run. This is not said in order to poison the well — it matters because this lack of familiarity is in great part the reason these stories were published to begin with, and it explains the editorial slant they are given.

In each Twitter Files thread, we see unfounded assumptions, insinuations and personal interpretations given the same weight as facts, which more or less establishes these as opinion pieces rather than factual reporting. That alone will have spiked a great deal of coverage: however salacious the theory, little of what is actually provided satisfies editorial standards in many a newsroom.

It must also be obvious by now that this ostensible act of transparency was conducted with a definite goal: to discredit the previous moderation and management teams, and advance a narrative of systematic anti-conservative activity at Twitter. This has resulted, both deliberately and by neglect of basic best practices, in harassment and targeting of individuals.

Plainly this is all orchestrated by Elon Musk, whose spite is equally plain in the wake of his botched purchase of the platform — an event that has been catastrophic to his wealth and reputation. But catastrophe loves company, and he seems insistent that all receive a portion of his ruin.

That said, given the natural curiosity of our readership on these matters, I thought it might be of interest to catalogue the claims in one place, along with what rendered most of them unreportable despite their occasionally containing notable information.

Part 1: “Handled”

Claim: “An incredible story” of how “connected actors” had accounts deleted and stories suppressed, with a clear left-leaning bias

The inaugural thread unambiguously and repeatedly shows working moderators grappling honestly with difficult decisions.

It also shows the inbox of a content moderation response team: not a dark and secret back channel but an official means for governments (the U.S. and others), individuals, companies, law enforcement and anyone else with special insight or purpose to communicate with the company’s dedicated department. There are no surreptitious “connected actors”; this is essentially customer service. The assertion that there were “more channels, more ways to complain, open to the left” is completely unsupported.

The question of First Amendment violations is a massive red herring, aided by Musk, who publicly aired his misinterpretation of it in the replies. As the thread notes, “there’s no evidence – that I’ve seen – of any government involvement in the laptop story.” Government requests, as documented and discussed publicly for years, are routine. Private requests, like the Biden campaign flagging non-consensually shared nude images of Hunter Biden as violations of Twitter’s terms of service, are routine.

Here as in other threads, the source documents themselves may well be of interest, but are not reliable as presented and do not demonstrate the claims stated. And it must be recorded here how slapdash the redaction and presentation of the information was, giving a sense of carelessness and overhaste to these supposedly momentous reports.

Part 2: “Secret”

Claim: “Secret blacklists” and “shadow bans” were common at Twitter

The second thread is an exercise in fear, uncertainty and doubt that depicts the tools of a functioning social media moderation team as those of a secret speech-controlling elite. Flags and moderation functions are not public by design, as some of the information is proprietary to Twitter, personally identifiable to the account, or the type of thing to be taken advantage of by malicious actors, who would redline behavior if they knew exactly how the system worked.

By the definition applied here, much of what goes on in any company is “secret.” Google, Facebook, Microsoft, Sony, Amazon — any company that maintains and monitors large numbers of users and communications has a “secret” system like this. It was nice to peek behind the curtain, which was why I did report it in that context; I would have done the same if one of those other companies’ non-public moderation practices had been exposed.

Musk’s ‘Twitter Files’ offer a glimpse of the raw, complicated and thankless task of moderation

But in keeping with the intended narrative, the thread only shows examples of moderation actions that affect a handful of conservative fringe accounts. We can’t know if and how these tools were used in other circumstances, such as putting a left-leaning account on a “trends blacklist,” because that data is withheld — “secret,” as Weiss would no doubt put it. It would be irresponsible to draw conclusions based on such purposefully manipulated data.

The thread also does a bit of prestidigitation in the matter of “shadow banning,” which Twitter publicly denies doing according to its own, also public definition. Weiss redefines the term as something Twitter does do (industry-standard moderation practices) and concludes that the company has lied retroactively. The disingenuous presentation discourages coverage.

Part 3: “Interaction”

Claim: “Decisions by high-ranking executives to violate their own policies” in the ban of Donald Trump, and “ongoing, documented interaction with federal agencies”

The deliberations of a social media moderation team put in the unprecedented situation of deciding whether and how to suspend a sitting president’s account (and how to adjust policies going forward) are interesting in a fundamental way; however, the way this information is presented is again too suspect for any reporter to trust and report. With no access to the original chat logs, it is impossible to say whether the conversations here are accurately represented or, as is far more likely given the narrative in which they are couched, selectively shown (though in fairness, the process by which these logs were given to the authors is not entirely in their control). What little we are privy to is not particularly notable.

The “interaction” with federal agencies is also given a FUD treatment. As noted above, law enforcement and governments are of necessity in constant contact with every social media company — indeed, with all of tech and much of commerce and industry in general. It really is part of their job, and yes, there are agents and specialists designated for social media and tech duty, just as there are some detailed to shipping, manufacturing, finance, etc. Whatever one’s opinion on this practice (and let me just say, I am no bootlicker myself), it surely isn’t news. The attempt to transmute these “interactions” into “intimidation” or “obligation” is not successful.

A presidential election following several marked by attempts (successful or not) at interference by foreign adversaries is of natural interest to the FBI, among other authorities, and a weekly check-in seems the bare minimum to keep each other informed of potential influence campaigns, trends in cybersecurity, relevant intelligence and so on. Let us not forget that Twitter amounts to essential communications infrastructure for every government agency at this point; monitoring it is an important but quite ordinary matter. It would be far more surprising and worth investigating if this contact didn’t exist.

Part 4: “Policy”

Claim: Twitter changes its policies in order to ban Trump, and “expresses no concern for the free speech or democracy implications”

The discussion documented here is only partial, but it seems to show, as before, the team grappling with evolving circumstances and figuring out in real time how the company should respond. In one quoted chat message, former head of trust and safety Yoel Roth puts it quite clearly: “Policy is one part of the system of how Twitter works… we ran into the world changing faster than we were able to either adapt the product or the policy.”

As a private company running its own fast-moving social platform, Twitter obviously changes its policies regularly, and also makes exceptions to them at its discretion; in fact, it had made them before in Trump’s favor. This was a notable exception, of course, but also the result of extensive internal discussion, one that acknowledges both the ad hoc nature of the actions and policies and their gravity. It seems strange for this thread to say no discussion was had when one is clearly shown here and in the next thread. (Perhaps it’s a matter of opinion what “expressing concern” looks like.)

All of this was also widely, widely discussed and reported by pretty much everyone in the world at the time.

Part 5: “Unprecedented”

Claim: Twitter’s choice to ban Trump goes against previous decisions and is part of a pattern of politically biased censorship

Again, reading the actual discussions of dozens of people throughout the company — not “a handful” as it is characterized — in an unprecedented situation is interesting, but difficult to report on given the lack of context and editorialized presentation. These internal debates are more or less what anyone would expect, and hope, of a company trying to figure out how to handle this.

The chat logs do offer a note of specificity long after the fact, but the (by this point obligatory) attempt to cast it as an elite group making directed choices to “influence the public discourse and democracy” is again unsupported, and also at odds with the notion, advanced elsewhere, that this group was being controlled by the FBI and other government agencies.

Part 6: “Subsidiary”

Claim: The FBI has infiltrated Twitter and exerts “constant and pervasive” influence

“The #TwitterFiles show something new: agencies like the FBI and DHS regularly sending social media content to Twitter through multiple entry points, pre-flagged for moderation.”

It may be new to some, but as noted above, this is quite an ordinary and well-documented practice: for law enforcement, and political parties, and government agencies, and private companies, etc., to call content or accounts to the attention of a platform’s moderation team. It has been done for a long time, and in fact much of it is publicly declared by major tech companies in their regular Transparency Reports, which list government requests and orders, what they pertained to and how many resulted in some kind of action, or provoked a challenge or request for a warrant. Notably the thread actually shows this kind of pushback happening.

This type of form email can be found in every platform’s moderation team inbox. Incidentally, the description of so prosaic a greeting as “Hello Twitter Contacts, FBI San Francisco is notifying you of the below accounts…” as having a “master-canine quality” is a real puzzler. I’m genuinely unsure who is meant to be the master and who the canine.

There is of course room for debate on how much the government (among other entities) can or should request, legally, procedurally and ethically speaking. The same goes for the revolving door between high-level corporate and lobbyist positions and government office. Fortunately for us, just such a debate has been ongoing for two decades. It surely must have bemused many reporters in this space that a topic discussed so widely and for so long is being treated as new or controversial.

Part 7: “Discredited”

Claim: A conspiracy orchestrated by the FBI and intelligence community to preemptively discredit the Hunter Biden laptop story

Even if anyone at any newsroom thought it was worth re-(re-)litigating the laptop story, which was discussed ad nauseam at the time, the way information is presented in this thread is dangerously disingenuous.

The sleight of hand occurs in drawing connections between things with no actual connection — conspiracy theory “logic.” For instance, two facts: One, the FBI was aware of the laptop, and had collected it; two, the FBI sent some documents to Twitter just before the NY Post published its story. These are presented as if clearly linked.

But as the other threads made clear, these FBI document drops were quite a regular occurrence, as often as weekly (in fact later threads complain information was shared too frequently). And there is no evidence the FBI considered the laptop a specific “hack-and-leak” threat, let alone expressed that to parties like Twitter (the general be-on-lookout months earlier is weak tea). Not only is the significance of either fact unsupported individually, but they are connected in the thread in an unsupported way.

This type of suggestive free association occurs repeatedly. And magically, an elaborate “influence operation” uniting the FBI, IC, a think tank, and a few other villains is assembled, like a corkboard with pins and yarn criss-crossing it. (Never mind that subsequent threads show they could barely organize a cross-agency conference call.) Under even the slightest scrutiny this vast conspiracy evaporates, and what is left is clearly a loose collection of people talking about potential cyberthreats in a tense election season.

Few newsrooms would approve of presenting such feats of conjecture as fact, if any reporter even considered using such flimflam as the basis of their own article.

Part 8: “Covert”

Claim: Twitter “directly assisted the U.S. military’s influence operations”

This claim is actually true — or was. We clocked the roll-up of this U.S. influence operation back in August, but this was still a thread that we read with interest.

Meta and Twitter purge web of accounts spreading pro-US propaganda abroad

Every government performs propaganda operations here and there, with various degrees of success and secrecy (both low in this case); it’s table stakes in intelligence. We see networks of fake accounts rolled up frequently, though understandably the ones that are given the most press are foreign operations intending to influence U.S. discourse; these grew so numerous that Facebook started bundling them into roundups and we left off covering all but the most notable, since they were clearly rationing them for positive news cycles.

In this case, an ask was made to give a number of officially military-associated propaganda accounts slightly privileged status (immunity from spam reports, for instance). Twitter agreed, but later the military removed the association disclosure from the accounts, rendering them “covert,” though possibly the word overstates the case. This angered Twitter, but either they felt they could not renege on their deal with the Pentagon, or, given how small and ineffective these accounts clearly were, decided it didn’t really matter much one way or the other. (In retrospect, given the bad PR, they probably wish they had hammered it. But hindsight is 20/20, as most of the Twitter Files demonstrate.)

To observe a U.S. operation to influence discourse abroad is interesting, and it does (and did) prompt legitimate questions of how closely tech companies should work with the Defense Department and intelligence community. Ultimately we felt that peeling back this layer of the onion was laudable but further coverage on our part was superfluous.

Part 9: “Doorman”

Claim: The FBI was the funnel for a “vast program of social media surveillance and censorship” across government agencies

Here we see the government’s haphazard approach to communicating with tech, with multiple agencies and cross-agency task forces overdoing it in various ways (primarily too much email). The number of accounts being flagged by law enforcement and government was already high and rising; Twitter complained and worked hard to triage and prioritize as government requests competed with press, user flags and others for limited moderation attention.

It can’t be that surprising that the government would be overzealous in its efforts to tamp down misinformation after years of asserting and soliciting opinions on how it might affect elections. Thousands of reports sounds like a lot, but count the number of police departments, state elections authorities, federal task forces and so on, then imagine each of them finding a handful of problematic accounts or tweets each day. They add up quite quickly; it’s a big (and troubled) country, and there’s only one Twitter. Other platforms were experiencing similar overloads of government communications.

Twitter pledges to dial up efforts to combat election misinformation

That these requests were routed through two primary channels, the FBI San Francisco office and the Foreign Influence Task Force, for flagging domestic and international issues respectively, is presented as ominous but feels simply practical. The alternative, hundreds of sources independently contacting Twitter, is infeasible.

Even if we were to credit some of the accusations, it’s hard to draw conclusions because the context (beyond even “the year 2020”) is exceptional. The period before and after the 2020 election was absolutely rife with misinformation and other social media issues. Meanwhile every government agency even tangentially related to elections was likewise overwhelmed and working overtime. It’s not clear what is meant to be shown beyond an admittedly bloated bureaucracy in action.

Part 10: “Rigged”

Claim: “Twitter rigged the COVID debate” by “censoring,” “discrediting” and “suppressing” information and users according to government preferences

The words used above — rig, censor, discredit, suppress — are strong. But they are not accurate, and the author, apparently a professional quibbler, applies a sort of malicious hindsight to a handful of borderline cases.

The allegation here is that Twitter’s moderation team chose to use CDC recommendations as the basis for its COVID-related misinformation policy. This is neither new nor controversial, and not really even a sensible complaint. It is the role of that agency to study, justify, document, and promulgate best practices in health emergencies. What other authority should Twitter have sought for such a policy? None is suggested. Indeed no realistic alternative exists. It was a public health and misinformation emergency and clear lines needed to be drawn — fast, and rooted in some kind of authority — in order that moderation could occur at all. Twitter used the CDC in its capacity as expert agency in drawing some of those lines.

Twitter broadly bans any COVID-19 tweets that could help the virus spread

It is stated in the thread categorically that “information that challenged that view… was subject to moderation, and even suppression.” Sure, sometimes. And sometimes things that should have been removed weren’t. Moderation is messy and 2020 was messiness epitomized. Mistakes were inevitable, as Twitter made clear at the outset; it’s trivial to go back and find a few among the decisions in their millions. It’s also pointless and subjective, and feels a bit spiteful.

All the thread offers is a “what if” the bar for debate had been moved an arbitrary amount in the direction the author prefers. But it conflates that notion with the idea that, because the bar was not placed correctly in his opinion (one of his quibbles is with masks, it seems germane to note here), open debate was “censored.” We have seen censorship and this is not it.

Part 11: “Workload”

Claim: Federal agencies leveraged and then overwhelmed channels for reporting accounts

This thread was, like the earlier one, interesting in that the documents quoted show exactly the kind of improvised, scattershot approach one would expect of a disorganized government responding to the growing disinfo and state-sponsored digital influence ecosystem.

Twitter gave them the same inch they gave everyone else — a line to the moderation team — but the feds took a mile, and then weren’t sure what to do with it. The result was more noise and less signal, until Twitter had to tell them to get their act together and decide on a few reliable points of contact (our scary “funnels” from earlier) and documentation methods. It’s always grimly entertaining to see the government flail like this, but such logistical squabbles don’t seem worth reporting. Keep in mind this was also in the spring and summer of 2020, when all hell was breaking loose in pretty much every way.

As for the repeated assertion that Twitter was paid off by the feds, those are statutorily required consultation fees the FBI incurred through its requests for investigation (Mike Masnick’s reluctant reality checks on this and other contentions have been invaluable).

One note on the “narrative” side: The thread notes an “astonishing variety of requests” for account suspensions from officials. But only one is actually cited: Democratic Representative Adam Schiff’s office “asks Twitter to ban journalist Paul Sperry.” The request (denied) is, if you read it, actually flagging “many” accounts harassing a staffer (whose name is imperfectly redacted) and pushing QAnon conspiracy theories. Of the two named, one was already being suspended and the other was suspended shortly after for other reasons. The choice and framing of this single example is telling. I would have liked to hear more of this “astonishing variety.”

Part 12: “Russian”

Claim: The intelligence community infiltrated Twitter’s moderation process after politicians perceived the company’s response to alleged Russian bot networks as inadequate

In the first place, this all happened a long time ago, and is mostly just internal emails about some news cycles where politicians were saying Twitter hadn’t done enough to prevent Russian election interference. It’s not really clear what story all these snippets are meant to tell.

Second, I remember writing about this back in 2018, and the thread is pretty misleading. Although the thread quotes estimates of accounts found from two to a couple dozen, their investigation as summarized here puts the number closer to 50,000.

Twitter updates total of Russia-linked election bots to 50,000

He also says these searches were “based on the same data that later inspired panic headlines,” for instance mine. But that’s not true. Facebook was reporting impressions from 80,000 posts placed by suspected Russian disinformation accounts. Twitter was looking independently for such activity in its own data.

Conflating them isn’t just wrong, it’s misleading and kind of weird. Again, it’s not really clear what’s being claimed here, and really important context and events are excluded from the account.

Last, and least supported, was the big claim that Twitter “let the ‘USIC’ into its moderation process.” As noted above many times, government entities were already in the process, making requests on a regular basis as they have for a long time and on every platform. The change flagged here is that “any user identified by the U.S. intelligence community as a state-sponsored entity conducting cyber operations against targets associated with U.S. or other elections” can’t buy ads. Considering the fallout from Twitter and Facebook taking money from accounts later linked to state-sponsored propaganda, this seems… smart. Open to abuse by the government, sure, but it’s hardly unique in that respect.

Part 13: “Jabs”

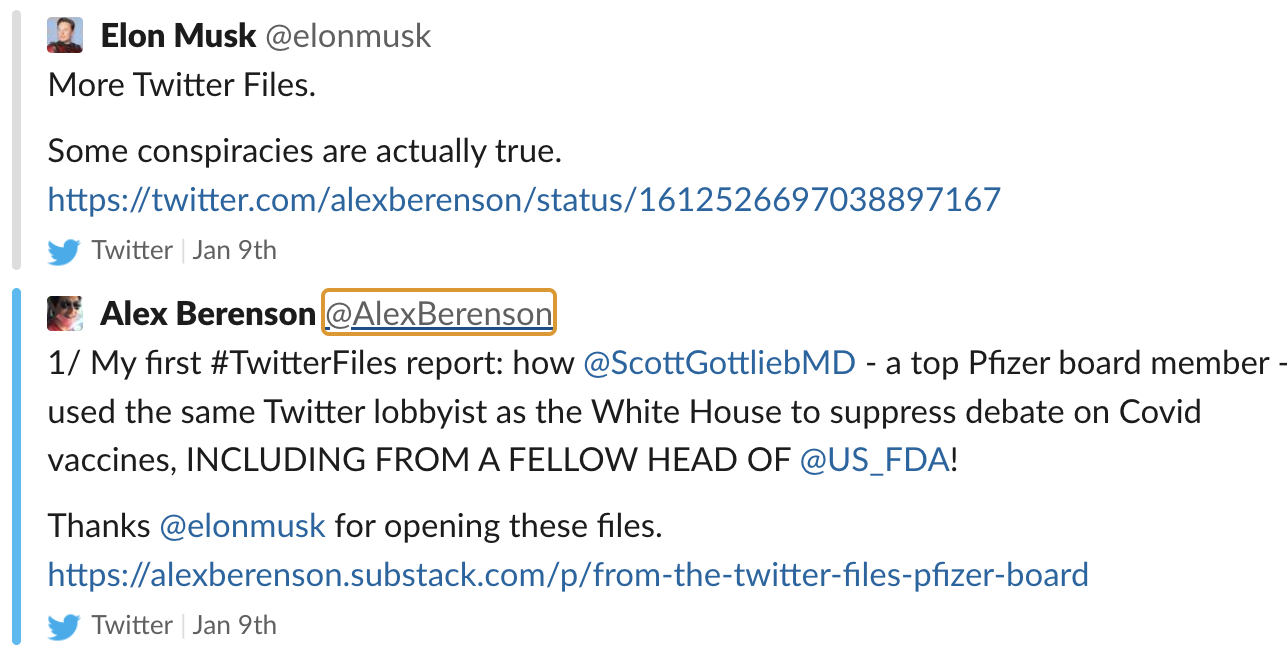

Claim: Pfizer board member and former FDA commissioner colluded with Twitter to silence COVID vaccine skeptics and bolster profits

This thread seems to concern a “misleading” label on a single tweet by one guy who claimed “there’s no science justification for #vax proof if a person has prior infection.” Scott Gottlieb, formerly FDA head and now on the Pfizer board, flagged the tweet to a third party (another of those funnels), who flagged it to Twitter, which evaluated it and labeled it. A second tweet sent the same way was not actioned.

Neither the scale nor the nature of these events is notable.

It must also be mentioned that this thread is authored by Alex Berenson, whom The Atlantic gave the dubious distinction of being “The Pandemic’s Wrongest Man.” Berenson, losing no time in joining the other authors in this golden opportunity to plug a freshly minted newsletter, says he too is a target: “Gottlieb’s action was part of a larger conspiracy that included the Biden White House and Andrew Slavitt, working publicly and privately to pressure Twitter until it had no choice but to ban me. I will have more to say about my own case and will be suing the White House, Slavitt, Gottlieb, and Pfizer shortly.”

This, I think, speaks for itself.

Part Etc…

Further installments in the series may appear (indeed one did, on “The Russiagate Lies,” while I was editing this piece), and like the above they will be covered on their merits. But let the above also serve as a counterweight to allegations that the press was predisposed to dismiss the Twitter Files outright. Though skepticism is a necessary characteristic of the trade, new information like that forming the core of these threads is always welcomed.

But the promise of the project has largely been squandered by the way that new information has been selectively and purposefully presented. Furthermore, the delta between the claims and the evidence for those claims has only widened as Musk has ventured increasingly far afield for willing participants.

In the past such sensitive data dumps have been collaborated on by multiple outlets and legal experts, who examine, redact, investigate and ultimately publish the files themselves. Many journalists, including those of us at TechCrunch, would have valued the opportunity to pore over the data to see how it confirms, contradicts or expands any of the claims above or stories already reported. Until that happens, honest skepticism and concern over amplifying misinformation or a billionaire’s vendetta take precedence over repeating the unsupported and, frankly, increasingly outlandish theories given the Musk seal of approval.

But even his imprimatur is fleeting. In a tweet promoting Berenson’s thread, Elon Musk wrote: “Some conspiracies are actually true.”

And some aren’t. He deleted the tweet soon after.
