Rupa Chaturvedi

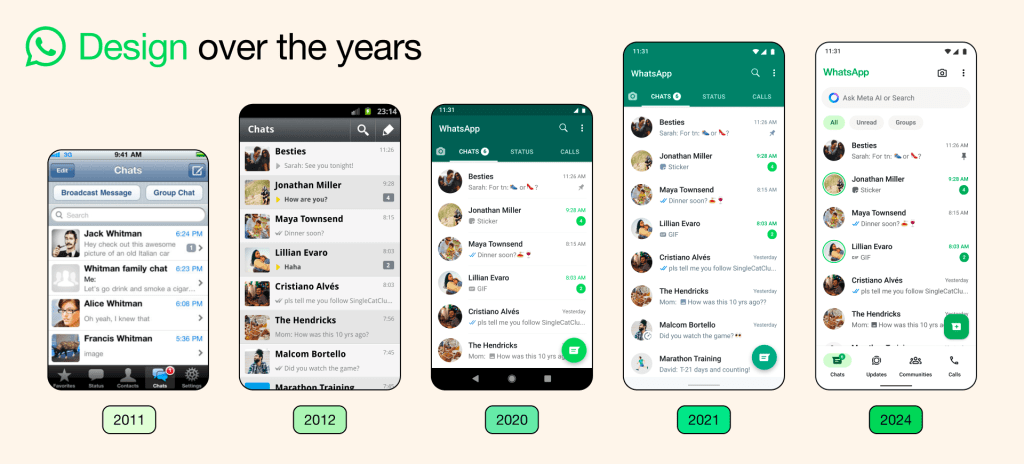

AI assistants are having their moment. Just a week ago, Google announced the aptly named Assistant, an AI capable of carrying on an “ongoing two-way dialog.” It joins a field crowded with Apple’s Siri, Amazon’s Alexa, Microsoft’s Cortana, Facebook’s M, the unreleased Viv and a whole host of others.

But right now, while these assistants can book a meeting, tell you the weather or give you directions to a coffee shop, they still feel a bit cold. They don't react to changes in mood, circumstances, personal context or anything else. In other words, they lack empathy.

What does that really mean? Humans have always personified their technologies. We engage with them emotionally, form expectations of them and build trust-based relationships with them. If you've ever gotten irate at an automated phone menu or thanked your phone for reminding you about an important meeting, you know the feeling well.

The point is, technologies that are truly important to our well-being and happiness transcend their status as mere "stuff." Instead, we engage with them emotionally. The late Stanford professor Clifford Nass went so far as to argue that human-computer interaction is fundamentally social. So if we have an emotional bond with our technology, wouldn't it be smart to design systems that feel empathetic to our needs?

If you're a bit reluctant to accept that people can have a truly personal, emotional connection with a machine, consider the case of Ellie. Ellie is an AI psychologist that has primarily been used to treat military personnel suffering from PTSD. She uses verbal and nonverbal cues and carries on a conversation much like an AI assistant. The really interesting part is that patients seemed to prefer talking to Ellie rather than to a human. According to Albert Rizzo, one of Ellie's creators, the patients "didn't feel judged, had lower interest in impression management and generally provided more information."

Of course, talking to a psychologist is different from talking to an assistant. But it's notable that people felt more comfortable divulging their real personal hardships to a machine than to a person. When we design an AI assistant, this lesson is worth keeping in mind. Users aren't creeped out by technology that knows and understands them. Done well, it can have just the opposite effect.

So how do we design an empathetic AI? How do we humanize our algorithms? We can start by making them less backward-looking. Algorithms need lots of data to function, of course, but they shouldn't need to know everything about a user to help them book a flight. If we frame the problem as creating a more human (and thus more empathetic) AI, we need to imagine it interacting with people on a human, social level.

When we meet a stranger, do we start by asking for all their data? What purchases they've made in the last year? What their email address and credit card numbers are? Predicting what I might like based on my purchases over the last six months is intelligence. We can do that now. But knowing that today I just want to let my hair down and chill is empathy. We need algorithms that make decisions in the moment, about individuals, not from the past, about groups of individuals.

One way to do this is by reimagining what we're doing with speech recognition. AIs can now understand words, but they can't really understand the emotion and tone behind them. That, of course, is something people do unconsciously all the time. And if you look at a company like Mattersight, which has analyzed millions of hours of conversation to find personality and mood cues, you can start to see the outlines of how we might do it.

In other words, those algorithms already exist. It's a matter of using them differently, designing for the user rather than the tech. Instead of focusing only on assistants that process what you say, we can also focus on assistants that understand how you say it. An AI that understands how a user feels in the moment can behave empathetically. Imagine an AI that wouldn't sass you when you sound glum, or one that would speed through an interaction when you sound harried. In short, an AI that modifies its behavior based on its user's mood.
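To make the idea concrete, here is a minimal sketch of an assistant that picks its response style from the user's current mood alone, ignoring purchase history or group profiles. It assumes a hypothetical upstream speech-emotion model that scores each utterance on valence (glum to upbeat) and arousal (calm to harried), a common two-axis way of describing emotion. The names `MoodEstimate`, `choose_style` and `respond`, and the threshold values, are illustrative assumptions, not any real product's API.

```python
from dataclasses import dataclass


@dataclass
class MoodEstimate:
    """Hypothetical per-utterance mood signal from an assumed speech-emotion model."""
    valence: float  # -1.0 (glum) .. 1.0 (upbeat)
    arousal: float  # -1.0 (calm) .. 1.0 (harried)


def choose_style(mood: MoodEstimate) -> str:
    """Pick a response style from the mood right now; no history is consulted.

    Thresholds are arbitrary placeholders chosen for illustration.
    """
    if mood.arousal > 0.5:
        return "brief"    # user sounds rushed: skip the small talk
    if mood.valence < -0.3:
        return "gentle"   # user sounds glum: no jokes, no sass
    return "playful"      # neutral or upbeat: a bit of personality is welcome


def respond(answer: str, mood: MoodEstimate) -> str:
    """Wrap the same factual answer differently depending on the chosen style."""
    style = choose_style(mood)
    if style == "brief":
        return answer
    if style == "gentle":
        return f"Sure, here you go. {answer}"
    return f"Coming right up! {answer} Anything else I can do for you?"


if __name__ == "__main__":
    update = "Your 3 pm meeting moved to 4 pm."
    print(respond(update, MoodEstimate(valence=0.0, arousal=0.8)))   # harried
    print(respond(update, MoodEstimate(valence=-0.7, arousal=0.0)))  # glum
    print(respond(update, MoodEstimate(valence=0.6, arousal=0.1)))   # upbeat
```

The harried check runs first on purpose: someone who is both rushed and down probably wants the shortest possible answer, not a longer, gentler one. A real system would also need to handle uncertain or conflicting mood signals.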

Of course, there are other ways to create an empathetic AI beyond speech analysis. Facial recognition programs are getting better at intuiting feelings. An AI assistant in your family room that could tell whether you'd spent the last hour laughing your way through your favorite comedy or arguing with your significant other should be able to tailor its behavior and interactions to your facial expressions as well as the tone of your voice. It would react to you in the moment, not to your browser history or to other users who share your consumer profile.

Empathy is, fundamentally, about understanding individuals and how they feel. It's also about realizing that people change constantly. We have good days and bad days, pick up new hobbies, and change our patterns because we're on a diet, going on vacation or preparing for a big release at work. Every day is different, and so is every interaction. An empathetic AI needs to understand that. Designing an AI assistant that can schedule a meeting on your calendar and notify you is intelligence. Knowing that something might have thrown a wrench into your day, and that you need to reschedule things on the fly, is empathy.

If you're catching a pattern here, you're right. Creating an empathetic AI is not about seeing users as a group, but about seeing each user as an individual. It's about designing a system that picks up on the same subtle cues humans use to gauge each other's state of mind and intent, and that learns from those reactions. It's about creating something that evolves and changes its behavior in the moment, just as we do in every conversation we have. It's about making a technology that treats users as the unique people they actually are. And if the next AI is going to be empathetic, that's exactly what we'll need to do.
