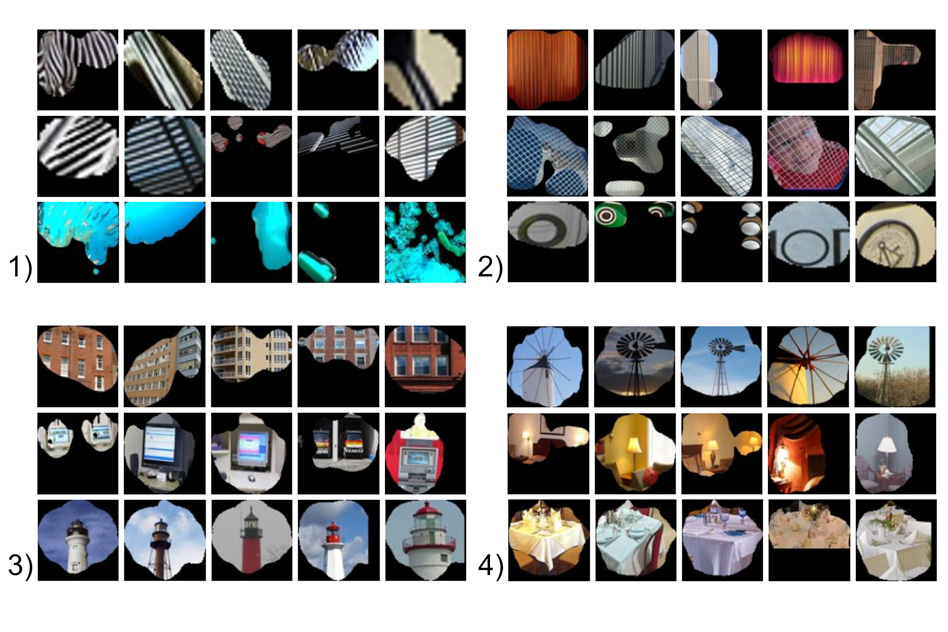

In this week’s edition of ‘omg the machines are on the verge of sentience,’ we bring you news of a research project out of MIT’s Computer Science and Artificial Intelligence Laboratory: A system designed to recognize specific scenes, which is a key ingredient in making computers smarter, turned out to also be able to identify objects as an unintended side effect.

When humans look at a picture, they automatically make a general judgement call about what’s going on in the scene – they intuit setting and context, essentially. This is a crucial skill that current computer vision and machine learning systems have a lot of trouble with, which can hinder intelligent systems designed to take on tasks like, say, driving or delivering packages. MIT researchers set out to tackle scene identification, and improved on the performance of previous attempts by as much as 33 percent.

This weekend, the research team behind the project is presenting a paper about an unintended – but welcome – consequence of their initial work. The system they designed turns out to be able to identify objects as well as scenes, picking out pieces in what the researchers think are attempts to make sense of the whole.

Researchers weren’t entirely sure why their scene recognition tech was achieving about 50 percent accuracy when it came to labeling scenes (compared to just 80 percent among humans, by the way). Then they noticed that the system was picking out specific visual features within the image and returning to those. The theory is that it was doing something akin to what we do: recognizing parts as emblematic of the whole (a bed means a bedroom, a long table and chairs with a speakerphone means a conference room, etc.).
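For the curious, the "parts imply the whole" idea can be sketched in a few lines of Python. To be clear, this is a toy and not the MIT team's system, which learned its cues from images rather than from a hand-written list. The `SCENE_CUES` table and the `guess_scene` function are hypothetical names made up for this illustration.

```python
from collections import Counter

# Hypothetical, hand-written object-to-scene cues (illustration only;
# a real system would learn these associations from data).
SCENE_CUES = {
    "bedroom": {"bed", "nightstand", "lamp"},
    "conference room": {"long table", "chairs", "speakerphone"},
    "kitchen": {"stove", "refrigerator", "sink"},
}


def guess_scene(detected_objects):
    """Return the scene whose cue objects overlap most with the detections.

    Returns None when no detected object matches any known cue.
    """
    detected = set(detected_objects)
    # Each scene gets one vote per cue object that was detected.
    votes = Counter(
        {scene: len(cues & detected) for scene, cues in SCENE_CUES.items()}
    )
    scene, score = votes.most_common(1)[0]
    return scene if score > 0 else None


if __name__ == "__main__":
    print(guess_scene(["bed", "lamp"]))
    print(guess_scene(["long table", "chairs", "speakerphone"]))
```

The lookup only shows the direction of the inference: spot a bed, lean toward "bedroom." The interesting part of the research is that nobody wrote a table like this for the system.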

The team is hoping that this means object recognition and scene recognition are not only coincident in high-level machine learning systems, but mutually reinforcing, meaning that as one gets better, so does the other. If they are, that could significantly accelerate the development of smarter computers – which will result in either Utopia or apocalypse, obviously.
