At NUI Central’s Kinect for Windows Hackathon, a projector on the ceiling casts an image of sand and shrubbery on the ground. The terrain is supposed to be Afghanistan. After I walked around the small projected space expecting nothing to happen, a boom and a white flash hit my senses. I had stepped on a land mine, or at least that’s what the app, Sweeper, wants me to think. Sweeper is just one of the hacks created last weekend at the Microsoft-sponsored event in New York City.

Microsoft provided an open space for developers to tinker with the latest SDK of its Kinect V2 and two experimental technologies within the Kinect that could be coming soon: a near-field sensor and Ripple.

Ripples through the Earth

A team of developers from Critical Mass created Sweeper with Microsoft’s Ripple, which operates on JavaScript and HTML5. Ripple uses a dual-screen projector set-up: one projecting on the ground and the other in front of the user.

Sweeper places the user on projected tiles to walk around an area depicting the ground in specific countries where mines are an issue. When the user steps on the hidden mine, the screen flashes and is followed by the sound of an explosion.

Once the user steps on the mine, the app provides details about types of mines and other statistics, such as the fact that a mine costs $3 to deploy but between $300 and $1,000 to disarm, according to the United Nations.
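For readers curious how a hack like this might hang together, here is a minimal TypeScript sketch of the step-and-explode logic described above. It is not Critical Mass’s code and does not use the Ripple SDK. The names `Minefield`, `tileAt` and `stepOn`, the tile size, and the idea that the sensor reports a floor position in meters are all assumptions made for illustration.

```typescript
// Hypothetical sketch only: none of these names come from Sweeper or Ripple.

type StepResult =
  | { kind: "safe" }
  | { kind: "explosion"; fact: string };

interface Minefield {
  rows: number;
  cols: number;
  mineRow: number; // row of the single hidden mine
  mineCol: number; // column of the single hidden mine
}

// Statistic quoted in the article, attributed to the United Nations.
const MINE_FACT =
  "A mine costs $3 to deploy but between $300 and $1,000 to disarm.";

// Map a floor position (assumed to be in meters, measured from one corner
// of the projected area) to the tile underneath it, or null if off the grid.
function tileAt(
  field: Minefield,
  x: number,
  y: number,
  tileSize: number
): [number, number] | null {
  const col = Math.floor(x / tileSize);
  const row = Math.floor(y / tileSize);
  const inside =
    row >= 0 && row < field.rows && col >= 0 && col < field.cols;
  return inside ? [row, col] : null;
}

// Decide what happens when the user stands on a given tile.
function stepOn(field: Minefield, row: number, col: number): StepResult {
  if (row === field.mineRow && col === field.mineCol) {
    // A real app would flash the screen and play the explosion sound here.
    return { kind: "explosion", fact: MINE_FACT };
  }
  return { kind: "safe" };
}

// Example: a 4x4 grid of half-meter tiles with one hidden mine.
const demoField: Minefield = { rows: 4, cols: 4, mineRow: 2, mineCol: 1 };
const demoTile = tileAt(demoField, 0.75, 1.25, 0.5);
if (demoTile !== null) {
  console.log(stepOn(demoField, demoTile[0], demoTile[1]).kind);
}
```

Keeping the grid logic separate from the projection and sound code means the same functions could be driven by a mouse click while testing, then by the Kinect once the hardware is set up.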

The team believes this shock factor will help educate people on the issue while also raising donations for groups such as the United Nations Mine Action Service to help disable these mines.

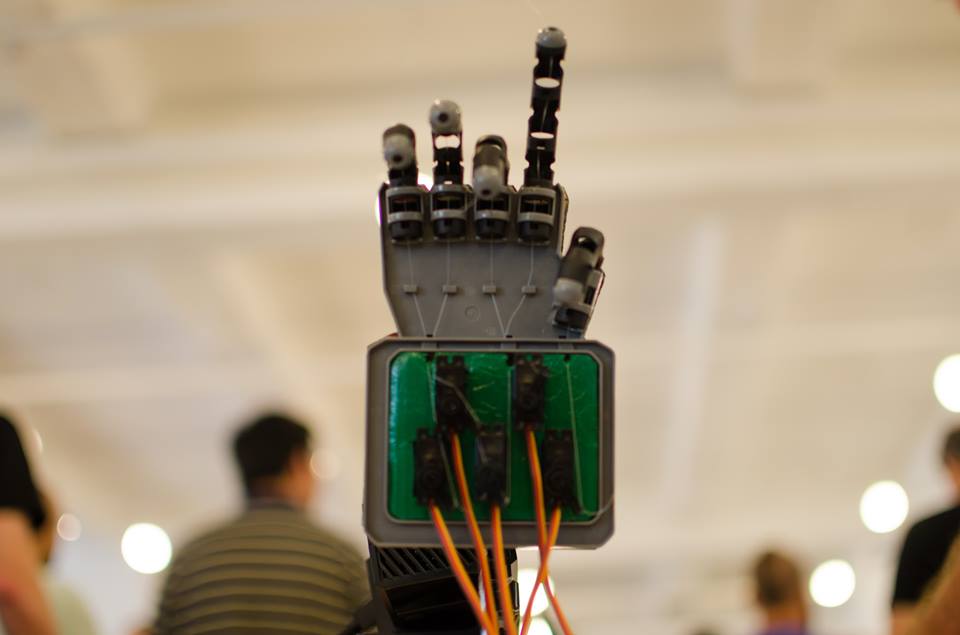

Exploring Natural User Interfaces with the Kinect V2

The Kinect V2 registers more joints and can map six skeletons, as opposed to the V1’s two. Laureano Batista, a user experience designer who attended the hackathon, hopes the V2 will encourage developers to pursue more exploration and more artistic and creative experiments, rather than entertainment.

“[Natural user interface] is not one amongst many strategies of going forward; NUI is going to be the way that we deal with technology,” he said.

It’s one of the reasons why NUI Central, a community for people interested in developing and creating natural user interface technologies, hosted the two-day hackathon. The team is hung up on “natural interfaces” — defined as any manner humans use to express themselves: from hand gestures to facial expressions and body movement. To them, a touchscreen device is not a natural interface.

NUI is “a more humanistic approach to communicating with computers and devices,” said Debra Benkler, co-founder of NUI Central.

The approach focuses on how technology reacts to humans, rather than how humans react to technology. Benkler believes this switch has already happened and hopes the NUI community can help change the way people live.

This thinking informed several other hacks, including an object-avoidance robot. Developer John Yeh mounted a Kinect V2 on a chassis to create a robot that maneuvered around objects in its path using the sensors from the V2.

Yeh said this could be applicable to a drug delivery robot in a hospital, which would move around people so that people would not have to react to it. The idea is to make the technology around us transparent.

Near-Field Sensors And Your Next Doctor’s Appointment

Imagine replacing the standard visit to the doctor’s office with clicking an app in the Windows Store. That’s what Dwight Goins wants to do.

Goins, who hails from Colorado Springs, Colo., is a system architect for Nimbo, but on the side, he’s creating a framework for a virtual doctor app that can read vital signs using the Kinect V2’s near-field sensor, which works at distances starting from 1 centimeter. So far, it can only check an individual’s pulse.

He is still tweaking the app’s user interface, but said there are many powerful algorithms that can be implemented with the app’s framework, as the app uses the sensors to read changes in skin pigmentation. Algorithms can be programmed to look for jaundice, measure blood content and check the breathing rate. He doesn’t plan to give the source code away for free, but he wants to give developers examples of how to use the framework.

Both the Ripple and the near-field sensors are experimental technologies within the Kinect V2, says Ken Lonyai, co-founder of NUI Central, and it’s not clear if Microsoft will officially release them.
