Ford is on a quest to ensure that its drivers keep their hands off the wheel for as long as possible. Drivers who own BlueCruise-equipped cars, anyway.

Today, the company is announcing BlueCruise 1.3, dropping just a few months after the 1.2 release, which added niceties like automatic lane changes.

For 1.3, BlueCruise will stay engaged through tighter corners than before. It will also position the vehicle more accurately in narrow lanes.

No, these are not radical changes to the way BlueCruise operates, nor will they revolutionize the lives of the people who pay up to $800 per year for access to the service. But being evolutionary and iterating quickly is exactly the point.

When Sammy Omari joined Ford a year ago, Ford’s software teams would issue internal releases of software on a quarterly basis. “Now we have an internal release every week,” he said.

Omari is Ford’s executive director of advanced driver assist technologies, and CEO of Ford’s new autonomy subsidiary, Latitude AI. Omari joined from Motional, the $4 billion Aptiv-Hyundai joint venture.

“Going from a quarterly release to a weekly release is obviously a lot of tooling, and also a lot of mindset changes,” Omari said. Today, Ford’s focus is less on gathering requirements and more on addressing customer feedback.

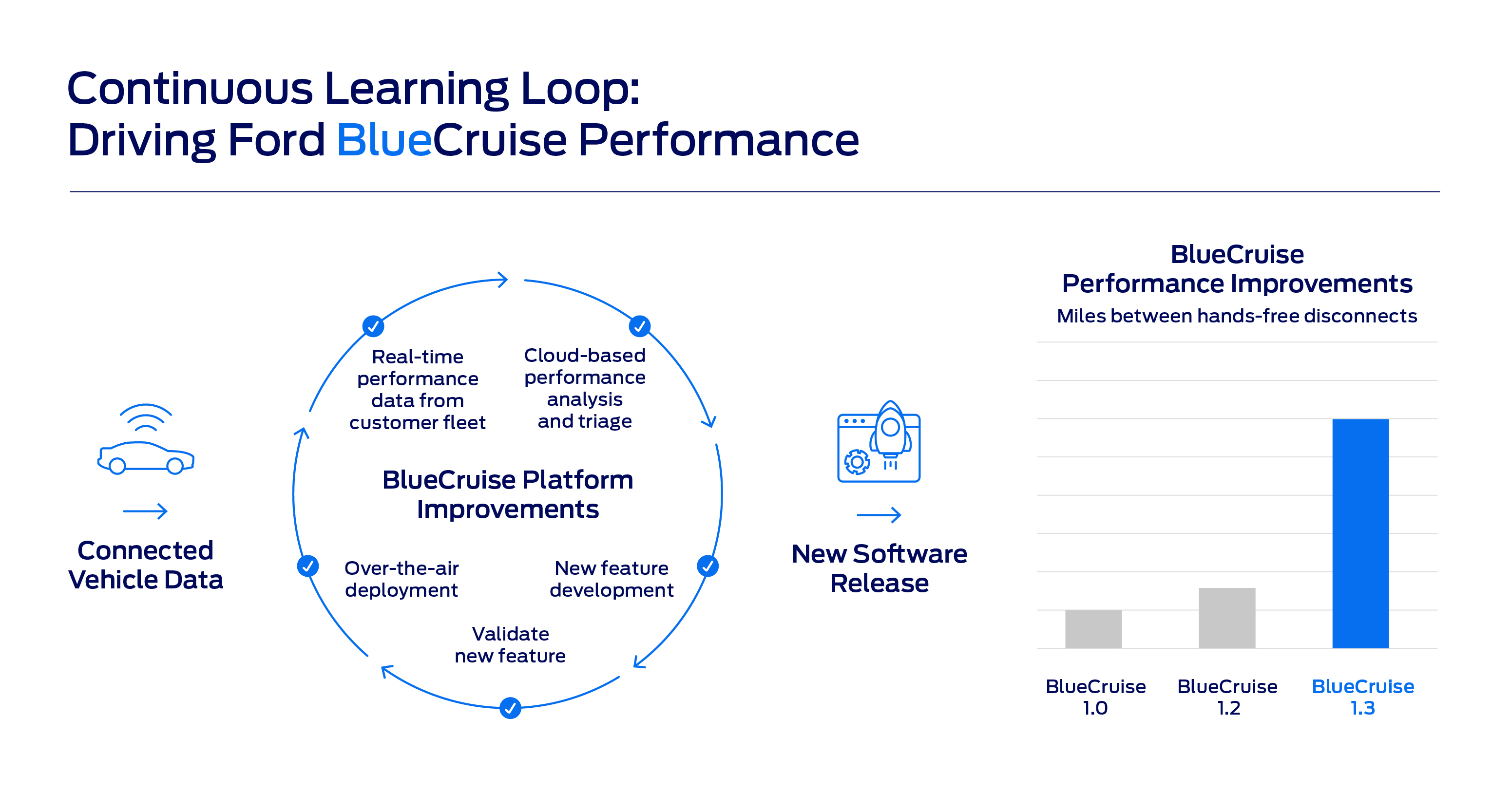

It’s feedback that largely comes in automatically. “If you opt in, you share data every time, for example, during hands-free you take over and you control the vehicle yourself, or if the vehicle kicks you out and says ‘Please take over again,’” Omari said.

His team looks at those interventions on a daily basis, grouped by factors like geography, vehicle, and road type. By focusing on those moments, Ford’s engineers have massively expanded the operating window of the company’s most sophisticated advanced driver assistance system (ADAS), allowing more drivers to spend more time with their hands off the wheel.

“We’ve basically improved that 3x,” Omari says of the latest release. For anyone coming from BlueCruise 1.0, Ford says it’s closer to a 5x improvement.

That’s part of how Ford hopes to keep improving BlueCruise’s appeal, and part of the company’s continued evolution into less of a car company and more of a services company.

“It’s the same way that Netflix needs to continue providing content. […] We think about this the same way. If someone is on BlueCruise, they will continuously get updates the same way,” Omari said.

But Netflix isn’t the only company that Ford is emulating. Perhaps in a nod to Doug Field, Ford’s chief advanced product development and technology officer, hired from Apple in 2021, Omari says that Ford is also looking to Cupertino for inspiration.

“This is about ADAS, but it’s also about a lot more than ADAS. It’s basically about the ability for Ford to now have an installed base of hardware on a lot of our vehicles,” Omari said. “The same way that Apple has iPhones, [regardless] of iPhone 10, 11, all the way up to 14, and they can roll out iOS updates regularly. We think about this the exact same way.”

In other words, as with Tesla, model year distinctions are becoming irrelevant.

While Omari declined to list specifically which features and functions are next for BlueCruise, he didn’t rule out Ford’s hands-off technology eventually taking drivers off protected highways and onto rural and even urban streets.

“It’s basically understanding where our customers largely [are] using the vehicles, and then expanding in those areas,” he said.

However, there is one frontier where BlueCruise, at least in its current guise, will not go: eyes-off driving. So-called Level 3 driving, where the car can fully take care of itself in some situations but may hand control back to the driver, is not possible on the current hardware found on the Mach-E and F-150 Lightning.

“We do need new hardware, and the reason for that is largely redundancy,” Omari said. This includes both sensing redundancy, so that multiple sensors are covering the vehicle in 360 degrees, and also redundancy when it comes to control features like power steering.

Hardware redundancy is not something that can be added to Ford’s current lineup of cars via an over-the-air (OTA) update.

Instead, true eyes-off driver assistance, of the sort that Mercedes-Benz has recently been granted approval to sell, will only come on Ford’s next generation of EVs, expected in 2025 and at least partly built at the $5.6 billion BlueOval City complex.

That functionality will require not only new hardware but also a more refined, more thoroughly integrated software stack.

Right now, the “perception” piece of BlueCruise — that is, the first level of processing information from the car’s various sensors — is handled in part by software licensed from Mobileye.

That may change for Ford’s next-gen, eyes-off solution: “We haven’t talked specifically about to what extent we will continue to use Mobileye moving forward. But we did just bring in the Latitude team earlier this year, which does have a very large number of very experienced perception engineers,” Omari said.

What that means for Mobileye remains to be seen. What it means for Ford is that many of the displaced workers from the Argo AI project have new jobs — and a new purpose.

Omari says somewhere north of 550 employees, largely former Argo staff, are now working at Latitude, Ford’s new autonomous driving division that, in many ways, is picking up the pieces from the abrupt shutdown of the Argo AI project in October 2022.

On the surface, it’s a confusing move to spin up a new autonomy division so quickly after axing the last one, but Omari says the purpose is very different.

“Argo was all about level four robotaxis,” he said, which, in terms of technical and commercial viability, still have “a very long way to go.”

“At the same time, with L3, we have a product that we know our customers love, and we have a very clear path towards how we can build this from a technology standpoint,” Omari said, adding that the path to commercial viability is much shorter: “The moment where people can get their eyes off the road, this is a massive game-changer…People will be willing to pay for that — quite a substantial amount.”

Latitude’s goal, then, isn’t to create fully autonomous driverless shuttles of the sort that Waymo and Cruise have been developing for years. Instead, the shift is back to technologies that can be deployed to passenger vehicles more quickly and more profitably.

The Argo AI sensor package, mounted to the roof of its test vehicles, cost more than the cars themselves. For an options package on a near-future Ford consumer vehicle, affordability will be key.

What exactly that future, Latitude-powered, eyes-off system looks like and how much it will cost remains to be seen. For now, though, the 1.3 release of BlueCruise will land on all compatible Ford Mustang Mach-E SUVs sometime this summer.

F-150 Lightning owners with BlueCruise will have to wait a little longer, but Omari promises they’ll have it by the end of the year.

And he’ll be testing every new version every step along the way: “Every week on Friday I get in the vehicle, I get the newest release, I go test drive.”
