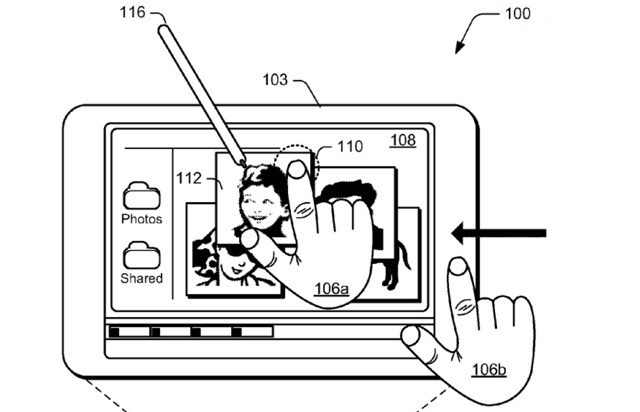

A little while back, I got an email from Atmel, one of the leading makers of touchscreen controllers, asking if I wanted to check out their latest creation: a new active stylus that works with an improved touchscreen. It supports stylus input alongside normal finger touches, plus features like palm rejection. I passed because, to be honest, it didn’t sound very exciting.

It has since shown up on a few other websites, though, and I thought (slightly apologetically) that I should at least watch the video. I did. And... it’s not very exciting.

Yet despite being a third-class citizen in our world of capacitive touchscreens, being publicly ridiculed by Steve Jobs, and generally being considered a nuisance, the stylus isn’t something we should relegate to the company of floppy disks and CRT monitors just yet. Here’s why we can’t write it off.

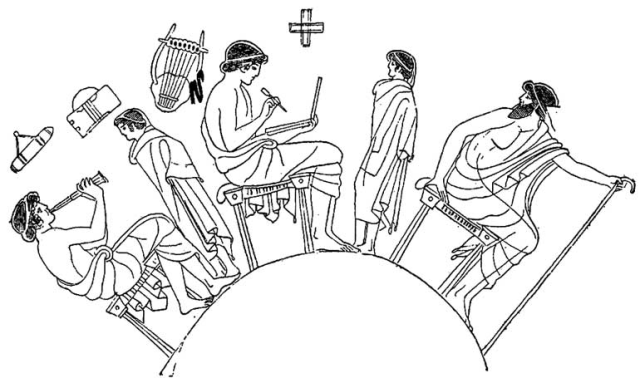

The first styli, strictly speaking, were used by the Romans, since they invented the word. But cuneiform was written with a primitive stylus long before that, and pointed sticks were surely in use even earlier, though probably more for scraping marrow from mammoth bones or the like. The point is that styli have been around for a long time because they have always offered certain advantages, and they still offer them now.

First, a stylus amplifies your input. With a stylus you can make quick, precise movements at many different scales. Ever wonder why nobody writes longhand with their finger? By amplifying small, precise movements that can be made rapidly, the stylus made handwriting possible in the first place, along with things like detailed drawings and paintings. Even if you’re drawing in the dirt, you do it with a stick.

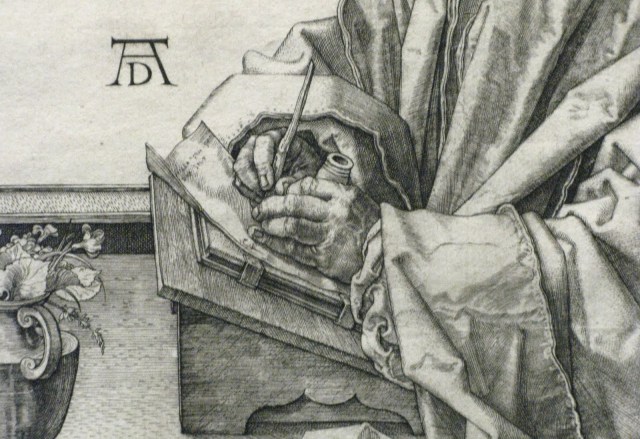

Second, it dampens your input. This seeming contradiction is at the heart of why a stylus, pen, brush, or what have you is so powerful. While it amplifies the movements you make by extending their effective range, it also allows for more precise control by drawing on the gamma motoneuron system. This is (if I remember correctly) a sort of global tension control in your motor system that lets you ratchet up the tension in many muscles at once in order to control them more precisely. Have you ever noticed that you were unconsciously clenching your jaw or tightening your neck muscles while performing an action that required great precision and concentration? That’s the gamma system’s effect spilling over onto adjacent systems while it ups the quality of your hand’s movements.

We use this system while we write and draw; haven’t you ever noticed how tightly some people grip their pen or pencil? By overshooting the tension required, the gamma system allows for tiny adjustments and quick but exact actions. The fine controls of our hands and fingers on their own, however, are designed more around gripping and applying varying amounts of pressure than around making tiny movements.

Third, you can see what’s under the stylus. This is essential to artists, of course, but it also completes a simple visual feedback loop in which you can tell what you’re touching. With a fingertip, past a certain point it’s guesswork. You see the button, you move your finger, and then you hope. But with a stylus, pen, or cursor, you see the button, you see where your control point is, you move it closer, you see it’s closer, you move it on, you see it on, and you click, or write a check mark, or tap.

You can see that these advantages aren’t just, say, 20th-century advantages, relevant only to generations that needed pen and paper to record things. A surgeon uses a sharp stylus to perform surgery. A painter uses a soft stylus to make strokes. We all use styli with special tips to drive screws, flip eggs, and eat Chinese food. The stylus isn’t a holdover from an earlier age; it’s a fundamental add-on to human physiology.

So why did Jobs mock it and leave it behind? For some time before the iPhone came out, the stylus was used because it was the only option. Capacitive screens were too expensive or not precise enough. Resistive screens offered a compelling alternative to d-pad-based navigation, and the best way to interact with a resistive screen is with a stylus, not your fingertip. Jobs wasn’t ragging on the stylus; he was ragging on an old solution to a problem, a solution people hadn’t bothered updating. The uses and form factors of mobile phones are such that a stylus isn’t the best solution when it isn’t the only solution; a fingertip serves much better in most cases. But there are just as many cases, as with the mouse and the trackpad, where the opposite is true.

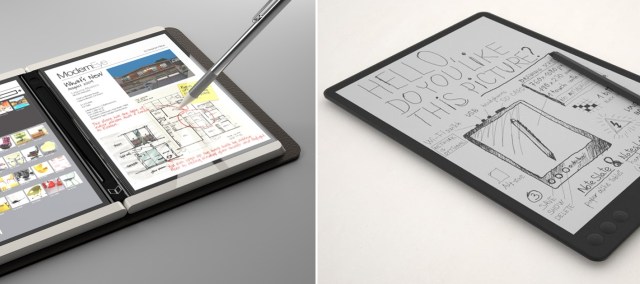

Think about the Courier and the Noteslate, both of which generated a froth of enthusiasm despite never becoming real products. The idea was a sort of next-generation paper notebook, stylus and all. You wrote things, you circled things, you touched them with your finger if that worked, you used the stylus if that worked. Some might say it was more of a throwback than a look forward, a product that clung to outdated notions of how we interact with information. Outdated as opposed to when – now? Does this imaginary interlocutor think that in 20 years, we’ll all still be using 10-inch glass screens, running our fingers across them, doing pinch-to-zoom? This excellent “brief” rant on interaction design points out just how shortsighted today’s devices are: entirely abstract, using next to no natural inputs or gestures, and totally inflexible. The things people have cooked up with a Kinect suggest a fusion of the virtual and the real that makes a tablet’s flat, static window look positively primitive.

But clearly, to return to the topic at hand, Atmel’s state-of-the-art touch solution isn’t what we’ve been waiting for. It’s an improvement, to be sure, but it’s a far cry from the level of detail possible with a Bic and a sheet of paper, and until the stylus and screen pass that level of usefulness, the applications are limited (though it will likely work nicely with Windows 8). What needs to happen before the stylus becomes truly relevant again?

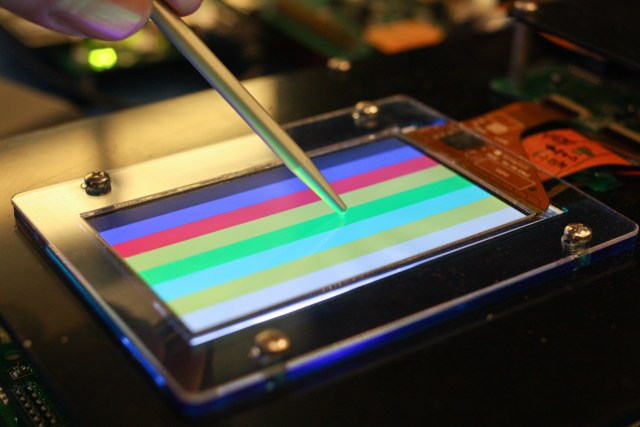

One thing I saw earlier this year at Mobile World Congress in Barcelona fairly blew me away: a touch system from Atmel’s arch-enemy Synaptics. It was a capacitive screen that could detect both conductive and non-conductive objects (say, a gloved hand or a stylus), and it did so passively, unlike the active solutions from Atmel and others, which have substantial shortcomings of their own.

Latency was also reduced by integrating the touch sensor with the display itself. You have probably noticed that when you write something with a pen, the line appears immediately. The fact that it doesn’t when you use a stylus on a touchscreen is probably more disorienting than you think: you can’t error-check your own small movements at your own rate; you must wait for the machine to catch up. Lower latency is a step in the right direction, and it’s one place where high display refresh rates could be truly useful.

Resolution is also important, as in so many other things to do with exactness and design. When I draw a short line and the aliasing makes it look like a tiny lightning bolt, I feel like giving up. The rumors of an iPad with a vastly higher resolution are nice, but they don’t help the stylus, since Apple has inoculated itself, rightly or wrongly, against stylus support for the rest of time. But Apple doesn’t make the displays, and these mega-resolution screens could help make the stylus worth using again.

The touch ecosystem and the people within it need to realize their limitations, as well. Right now finger-based interaction is still novel, still being fleshed out (so to speak), optimized, still being applied to different models. But we’re already bumping into the borders beyond which this kind of touch, the iPhone kind of touch, will be useless.

For typing, touch has already proven painful to use, so we’ve resorted to an accessory, not unlike the stylus we have mocked, for this most basic act of computing. For any kind of action that requires precision, such as illustration, the capacitive screen is also useless, failing as it does to provide that feedback loop. Our interactions with tablets and phones are for the most part coarse and inexact, and entire UIs (witness iOS, which some would argue falls more on the side of simplicity than elegance) have been designed around this fact.

We’ve gotten around some of these problems with clever little tricks, and we’re constantly trying to invent new ones to expand the capabilities of what must be recognized as a very limited interaction method. Sooner or later someone will stand on a stage, as Jobs did, and ask “why are we still pointing and jabbing at our icons and applications like kindergarteners doing finger-painting?” And maybe he’ll show us, as Jobs did, how long we’d been rationalizing our poor choice in interface. Will it be Atmel on stage? Synaptics? E-Ink? Microsoft?

Whoever it is, it won’t be for a while. The stylus today, let us admit, is impractical for a number of reasons, both design and technical, as Atmel’s video and every device available show. But as touch goes from novel to normal to mundane, the frustration of users stymied by its limitations will grow, and with that frustration, demand for something new. The mouse rode a wave in the 80s. The iPhone rode the wave a few years ago, leaving the mouse behind. The next one will leave the iPhone behind, an artifact of the late aughts. What of the stylus? If we have truly exhausted its applications, it won’t return, but I think it’s manifest that we have not.

That was a long and winding rationalization for a perhaps irrational love of the stylus. But I firmly believe that its days are not done. Its weaknesses became a problem before its strengths were given a chance to shine. The stylus is as ageless as the wedge, the wheel, the projectile. We’ve reinvented all these multiple times. When technology catches up yet again to the pen, the pen will be ready.