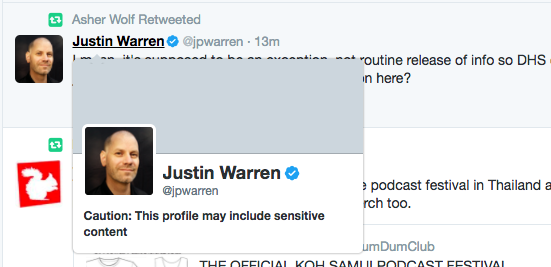

Twitter confirmed it’s testing a new feature that flags users’ profiles as potentially including “sensitive content.” When you click on one of these profiles from a link on Twitter, or if you visit the profile’s web page directly, you won’t be immediately shown the user’s tweets. Instead, a warning message displays, reading “Caution: This profile may include sensitive content.”

When you click a link to the profile on Twitter, the message appears in a pop-up window. And if you visit the profile directly, the warning message is all that displays until you agree to view the content by clicking the “Yes, view profile” button.

A reporter at Mashable first spotted the feature when trying to view the profile of technology analyst Justin Warren, but could not determine how the content was flagged.

Image credit: Mashable

That’s hard to do in this case. After all, Warren’s tweets seem fairly innocuous, except for a little swearing at times.

Twitter tells us the new feature works similarly to how other sensitive content on Twitter gets flagged, based on users’ settings.

Currently, the company permits content that contains violence or nudity, but it draws the line at “pornography or excessive violence in live video, or in your profile image or header image,” according to its page on sensitive media. That page doesn’t mention profanity, racism, bigotry or other types of offenses. However, we’re told that sensitive content is not limited to “violence or nudity.”

Users can choose to mark themselves as someone who tweets sensitive content through their “Privacy and Safety” settings.

https://twitter.com/Bro_Pair/status/839706277626462212

https://twitter.com/A_M_Perez/status/839624199568244736

In addition, other Twitter users can report tweets to the Twitter team for review. In this case, if the tweet is determined to be potentially sensitive, Twitter will label the content appropriately, or remove it if it’s a live video. If it deems it necessary, Twitter may also adjust your account setting for you so that your future tweets are marked accordingly.

For repeat violations, Twitter may permanently adjust that setting on your behalf, it says.

The process for marking entire profiles as sensitive follows a similar set of guidelines and processes, including the fact that Twitter can take an active role in identifying these accounts, based on the content of the account’s tweets.

The feature is still in testing, and not widely rolled out at this time.

A Twitter spokesperson confirmed the new feature, saying “this is something we’re testing as part of our broader efforts to make Twitter safer.”

In recent days, Twitter has taken a number of steps to address the issues of safety and abuse on its network. It has rolled out new filters for hiding harassing content, safer search results, a “time out” feature for bullies, user interface tweaks to hide low-quality and abusive tweets, a better Mute option, more transparency around abuse reporting and smarter algorithms for identifying and handling abusive content, as well as algorithms that prevent banned abusers from coming back with new accounts.

Warning users about select individuals is not necessarily another change aimed at quelling abuse, but rather one aimed at making the network feel more friendly. It’s not exactly a novel idea, of course. Plenty of networks flag content that’s not appropriate for all to see, such as YouTube’s warnings on age-restricted content or Facebook’s warnings about graphic content.

As with other new anti-abuse features, some people seem genuinely baffled as to why they were flagged, seemingly unable to connect the dots between their tweets and the consequences.

Case in point (um, sensitive content warning):

https://twitter.com/anaisnin/status/839841795018272768

https://twitter.com/anaisnin/status/838854434226647041

https://twitter.com/anaisnin/status/838855088751915010

https://twitter.com/anaisnin/status/838644915491979264

https://twitter.com/anaisnin/status/838545933256228864

https://twitter.com/anaisnin/status/838857009348882434

https://twitter.com/anaisnin/status/838535877760548864

https://twitter.com/anaisnin/status/838533505550282753

https://twitter.com/anaisnin/status/838536580990124032

Oh, such as…?

https://twitter.com/anaisnin/status/839610149169991680

(I mean, perhaps someone didn’t like the tweets amid the posts of “art and literature and Italy” and reported them? Idk, wild guess!!)

Twitter did not say when the new feature would be more broadly available.