Facebook’s News Feed has decided that I like gruesome murders. Actually, senseless deaths and gruesome murders. I’m not exactly sure when the problem started, but I imagine it was around the time of one of the now too-common stories of mass shootings like Sandy Hook. Or at least, that’s what I like to tell myself – that surely, I was following a nationwide news story of importance rather than clicking sensational headlines that trade human tragedy for pageviews.

I’m not really sure.

But what I do know is that now any extensive visit to my Facebook News Feed has grown really depressing.

Amid tech news and baby photos, I’m now bound to come across some shared headline of something so horrific, so odd and unbelievable, that I actively have to stand up and walk away from my computer or put down my phone to keep myself from clicking. After commenting on a friend’s mobile photo upload, I scroll down to find the worst of humanity only a few items below. I don’t want to know why this man put a pig mask on his wife after stabbing her 82 times, but I’m going to click. I’m sorry, I just am. (Did you?)

And in clicking, I feed the machine. The emotionless algorithm that simply matches my interests to the stories that display. The algorithm that feels no remorse in abusing my sometimes precarious psychological state in exchange for increased time-on-site or pageviews.

Okay, I admit it. I’m human. And human curiosity can be a damning thing. I’m weak, and I’m ashamed. I am the person who is going to slow down and look at the wreck on the side of the road. And sometimes, I’m going to click the linkbait. It’s not always of the Upworthy variety.

What is Facebook’s responsibility to stop reinforcing the worst of my online behavior? Really, how many times should the most tabloid-esque murder-du-jour story rise up to the top of my News Feed? Today alone, I’ve seen stories of a boy who choked his cheerleader girlfriend, of dismembered body parts strewn around Long Island, of a man who killed a teenaged neighbor over a lawnmower. I don’t need to know these things. They don’t improve my life or my understanding of the world in any way. They only reinforce my belief that people are horrible, and that evil is real.

Today, I unfollowed Gawker as one of my “news” sources on Facebook, which should go a long way to fixing this problem, as they seed a lot of this kind of human-death-for-content. (You can do this from the drop-down box on the post – just click the “Unfollow” option.) I hope it’s a permanent solution, but I’m still wary of loading my News Feed. What terrible thing awaits me below?

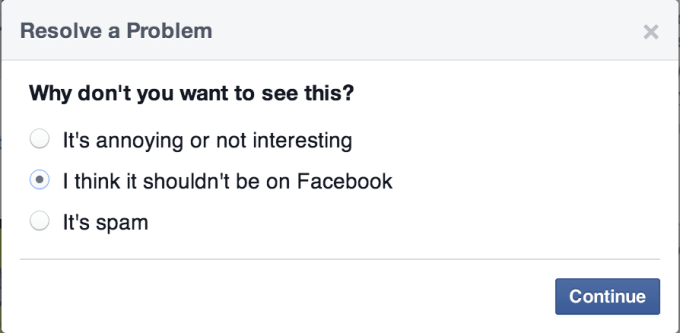

After the deed is done, Facebook asks me why I unfollowed this source. I click the link to tell it exactly why I did such a thing, but the only options are “annoying or not interesting,” “shouldn’t be on Facebook,” or “spam.”

Where is the option for “it’s just too much?” Where is the option for “psychological abuse?” Where is the option for “because I can’t trust myself around linkbait?”

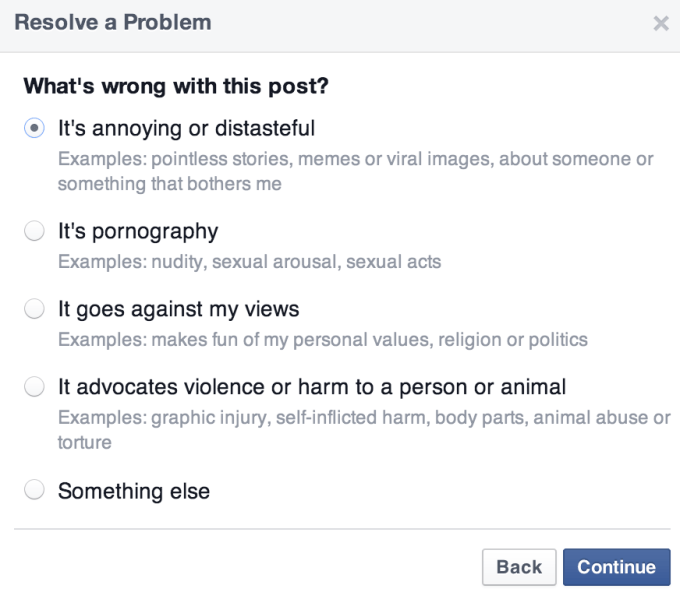

Oh, it’s on the next screen:

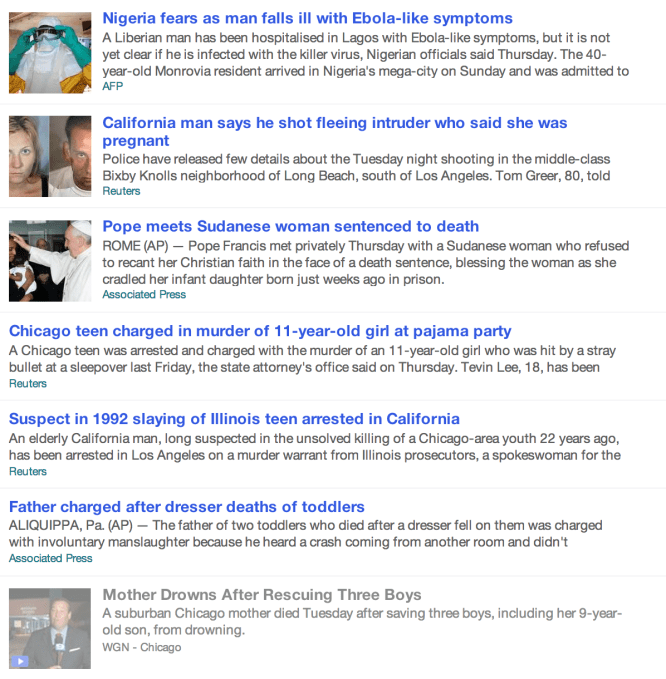

Let’s be fair: Facebook is not the only site that takes the personalization element to its worst possible extreme.

I read a story about a Taiwanese plane crash on Yahoo News, and my “Recommended for you” section is immediately filled with other tales of death and horror. I scroll down and see these suggestions: a man who killed a neighbor over a tree-trimming feud; a mother and daughter who died in a food truck blast; an inmate’s botched execution; a Nigerian man with Ebola; the man who shot a pregnant intruder; the father whose toddlers died when a dresser fell on them; the teen who murdered an 11-year-old at a slumber party – and all that’s after just a scroll or two.

Thanks, Yahoo. I’m now hiding under my bed afraid of the world.

I don’t want to be uninformed. I want to read the news. There are wars going on. There is political upheaval. What are we doing about the child refugees? These are things I need to know more about. These are things I should have spent my random few minutes of news browsing on, yet I’m instead fed the “stories I like” via algorithms that have taken the place of human editors – editors who would have known not to create entire front pages filled with the faces of death.

This is not just a minor annoyance. It’s a very real concern – not only about the narrowing window I have on the news of the world, but also about the impact of emotionally damaging content on unsuspecting victims. This is especially worrisome since Facebook, it seems, has no problem running psychological experiments on unwitting users. One study, which no Facebook user knew about until it had wrapped and the media discovered it, was meant to determine whether Facebook’s News Feed content could impact a user’s emotional makeup. Spoiler alert! It can.

Consider me just another data point. I read Facebook; I feel sad.

More seriously, consider the impacts of unfeeling technology and impersonal algorithms on the vast numbers of those suffering from mental illnesses, whether they’re browsing self-harm content on Tumblr and Instagram or vlogging their most heinous thoughts – as did the Santa Barbara shooter, who threatened to “punish” “you girls” in his YouTube videos before his terrible spree.

While our legislators argue over gun control, the U.S. is suffering from a mental health epidemic – a direct result of mental health reforms that were originally meant to stop the abuses of human rights that took place in the psych wards of the past. But now we have a system that actively prevents people from getting help.

People who suffer from distorted – and dangerous – mental states, or even from more treatable conditions like depression, are also using computers, browsing the web, and reading sites like Yahoo News and Facebook. And we don’t know the long-term impacts of them being subjected to the outright manipulations that take place when a site like Facebook decides to study them like lab rats. Nor do we fully understand the impacts on the rest of us when Facebook or some other news portal simply rolls out a new “recommendation algorithm” as part of its everyday experimentation.

When the machines over-feed someone stories about death and violence, do they get partial credit later for their psychological break – or do they only get lauded for helping catch the killers through digital debris or uniting the victims via online support groups?

This is one of the many fallouts from our linkbait addiction – the result of a culture too overwhelmed with content to read anything at length. But to the humans building these systems, I ask you to remember this: We are an easily tricked and manipulated species. When all you count is what gets clicked, the algorithm fails. At best, a newsroom loses focus. At worst…well, look around.

Image credits: Shutterstock; Facebook; Yahoo