The next generation of apps will require developers to think of the human as the user interface. Knowing how an app works while a person is standing up or has their arms in the air will matter more than knowing how it works while they are sitting down and pressing keys with their fingers.

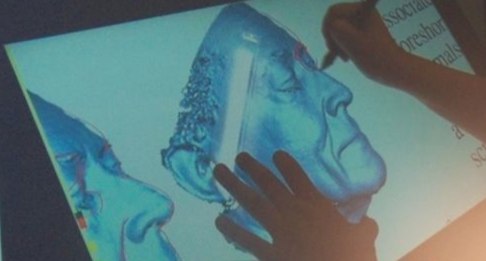

Tables, counters and whiteboards will eventually become displays. Meeting rooms will have touch panels, and chalkboards will be replaced by large displays that show digital images and documents teachers can mark up with a stylus.

Microsoft General Manager Jeff Han gave developers at the Build Conference last week some advice on how to think about building apps for this next generation of devices and displays. I am including his perspective as well as those of four others who have spent time researching and developing products that fit these trends.

Han talked about the need to think about how people will interact with apps on a smartphone or a large display. That’s a pretty common requirement now, but the complexity will change entirely as we start seeing a far wider selection of devices in different sizes. He said, for instance, that developers need to think about how people stand next to a large display, how high they have to reach and how they use a stylus to write. The new devices we use will soon all have full multi-touch capabilities, so a person can use all fingers and both hands to manipulate objects and create content. His full talk at Build also included a discussion of the types of devices we can expect from Microsoft going forward.

Jim Spadaccini of Ideum, which makes large interactive displays, said in an email that his team’s primary consideration is how multiple people interact together around a table. Does the application encourage collaboration? Is it designed in such a way that several people can interact at once?

He said that in looking at these questions, issues such as the orientation of information and overall structure of the application become really critical:

We look to design applications that have elements that are omni-directional or symmetric. These allow individuals to approach a table from any direction (or the two “long” sides) to interact together. The interaction is as much social engagement — visitors interacting with each other — as it is about interaction with the program.
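One way to picture the omni-directional idea in code is to rotate an element so it faces whichever table edge the person touching it is nearest to. This is a minimal sketch, not Ideum’s implementation: the function name `facing_rotation`, the screen-style coordinates (origin at the top-left corner, y increasing downward) and the choice of four fixed rotations are all assumptions made for illustration.

```python
def facing_rotation(x: float, y: float, width: float, height: float) -> int:
    """Return a clockwise rotation in degrees (0, 90, 180 or 270).

    Hypothetical helper: the rotation turns content so that it reads
    upright for someone standing at the table edge nearest to the
    touch point (x, y). 0 means upright for a person at the bottom edge.
    """
    if not (0 <= x <= width and 0 <= y <= height):
        raise ValueError("touch point lies outside the table surface")

    # Distance from the touch point to each edge, keyed by the rotation
    # that makes content face a person standing at that edge.
    distances = {
        0: height - y,   # bottom edge
        90: x,           # left edge
        180: y,          # top edge
        270: width - x,  # right edge
    }
    # Pick the rotation whose edge is closest; ties go to the first entry.
    return min(distances, key=distances.get)


if __name__ == "__main__":
    # A touch near the left edge of a 200 x 100 table.
    print(facing_rotation(10, 50, 200, 100))
```

A real table would more likely track each person’s position or let elements rotate freely, but even this coarse rule lets people approach from any side and read what they touch.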

Cyborg anthropologist Andrew Warner told me in an email that there’s a tendency in computing to think that we are becoming increasingly disembodied. In some senses this is true; in others it is becoming less true. Every generation of computing becomes more tactile. The first interfaces were awkward keyboards and terminals, then came the mouse (which required the whole arm to move), then the touchpad (which we lovingly stroke with our fingertips), and then the touchscreen (which integrated display and touch).

The next generation of technology will bring new ways for people to get tactile experiences similar to those we get when we touch objects or materials. Developers need to remember that people are sensual beings, and our bodies are the best interfaces we have. Even though we have ways to transmit thoughts directly to music, people still use embodied interfaces because we have been fine-tuning our nervous systems throughout our entire lives as we learn to interact with our environment. Additionally, we are “wired” to receive a basic pleasure from physical activity (exercise gives us serotonin and dopamine feedback). Developers need to harness this and make computers sensual again.

Amber Case is the co-founder of Geoloqi, the mobile, location-based company acquired by Esri. Known for her expertise in the field of cyborg anthropology, she is also the founder of Cyborg Camp, which took place in Portland this weekend. She mentioned on Twitter the concept of computer-aided synesthesia: using one sense to stand in for what another sense is unable to do.

From Artificial Vision:

However, a main drive for investigating artificial synesthesia (and cross-modal neuromodulation) is formed by the options it may provide for people with sensory disabilities like deafness and blindness, where a neural joining of senses can help in replacing one sense by the other, e.g. in seeing with your ears when using a device that maps images into sounds, or in hearing with your eyes when using a device that maps sounds into images.
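To make “a device that maps images into sounds” concrete, here is a minimal sketch of one possible mapping. It is an assumption for illustration, not the scheme of any particular device: the image is scanned column by column from left to right, each pixel’s height sets a pitch (higher in the image means higher in frequency), and its brightness sets the loudness. The function names and the 500 to 5000 Hz range are hypothetical.

```python
def row_frequency(row: int, n_rows: int,
                  low_hz: float = 500.0, high_hz: float = 5000.0) -> float:
    """Map an image row to a pitch: top row -> high_hz, bottom row -> low_hz.

    Frequencies are spaced exponentially, so equal steps in height
    correspond to equal musical intervals. The range is an assumption.
    """
    if n_rows == 1:
        return high_hz
    fraction = 1.0 - row / (n_rows - 1)  # 1.0 at the top, 0.0 at the bottom
    return low_hz * (high_hz / low_hz) ** fraction


def image_to_scan(image: list[list[float]]) -> list[list[tuple[float, float]]]:
    """Turn a grayscale image into a left-to-right sequence of tone sets.

    `image` is a list of rows of brightness values in the range 0..1.
    The result holds one list per column; each entry is a
    (frequency_hz, amplitude) pair for a pixel that is not black.
    """
    n_rows = len(image)
    n_cols = len(image[0]) if image else 0
    scan = []
    for col in range(n_cols):
        tones = [
            (row_frequency(row, n_rows), image[row][col])
            for row in range(n_rows)
            if image[row][col] > 0  # black pixels stay silent
        ]
        scan.append(tones)
    return scan


if __name__ == "__main__":
    # A 2 x 2 image: bright pixel top-left, dimmer pixel bottom-right.
    for tones in image_to_scan([[1.0, 0.0], [0.0, 0.5]]):
        print(tones)
```

Playing each column’s tones in turn would produce a short sweep in which a listener hears where the bright areas are. Synthesizing the actual audio is left out to keep the sketch short.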

We’re going to have to test our apps with the boring old keyboard and mouse, as well as touch, voice, different display sizes and resolutions, and assistive technologies to make sure we’re actually still reaching everyone. It’s a very exciting time, and that usually leads to some really bad decisions. It’s important to choose what we use and how we use it wisely so that each user can participate as fully as possible.

People with little interest in computers have come to love their iPhones, which are easy to use because of their touch capabilities. This means we will see far more people using computers, because the devices fit into their lives. Displays will be everywhere. The challenge will be in the UI and how we adapt it to the way we move about in our personal lives and in our work.