Microsoft plans to offer devices based on Perceptive Pixel technology, which combines pen and touch input to make the human, in effect, the user interface.

Microsoft General Manager Jeff Han, who sold Perceptive Pixel to Microsoft earlier this year, hinted at the news with a slide in a presentation at this week’s Build conference in Redmond. The slide read “Devices *are* coming” and listed an email contact for getting access to the hardware. He did not say when the devices would reach the market.

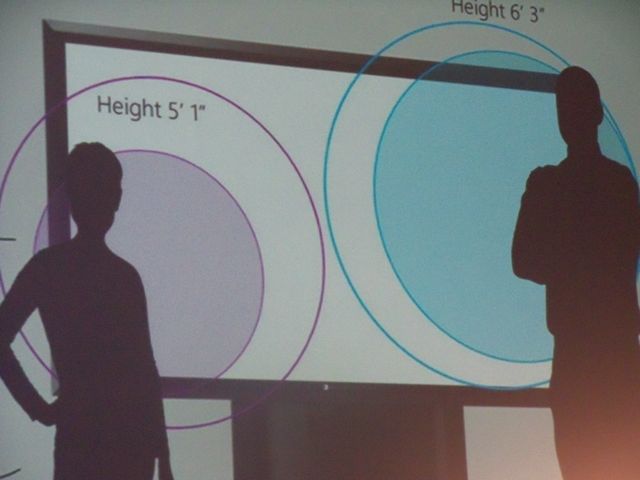

While he did not say it outright in his presentation, Han clearly outlined the role multi-touch will have for almost any device Microsoft develops going forward. He said the enterprise and education markets have particular promise because the devices can be used in meeting rooms and classrooms. Multiple people can touch the displays, which can be networked so people may interact remotely. All of this will mean a new generation of apps that require us to think of the human as the interface. Interactions will vary for different people.

Han said in his presentation that multi-touch is the standard across the market, but that touch on its own faces a number of challenges, including: the “fat finger” problem (not precise enough); the “Midas touch” problem (no hover/tracking state); and the inability to discern which touch is which, a problem that makes for difficult UI choices. Further, touch is great for content manipulation but less so for content creation.

Han said the opportunity will come with devices that integrate the hardware and software needed for both touch and stylus input.

Windows 8 allows for both touch and stylus with a Slate-style experience.

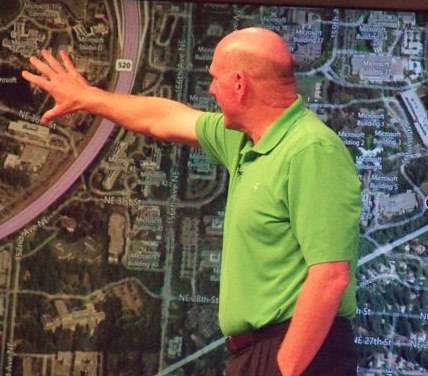

Han’s influence is spreading at Microsoft. CEO Steve Ballmer, in his keynote Thursday at Build, showed off an 82-inch Slate PC display that featured the Perceptive Pixel multi-touch technology that he said is “shipping.”

Han’s vision is as much about the people who use the technology to interact as it is about the technology itself. That creates a fascinating opportunity for developers who, over the next several years, will increasingly build apps around the way we sit, stand and use our arms rather than around typing commands into machines with keyboards.