File this one under “future toys.” We hear about a lot of these super-low-level advances in processing and storage (whenever I see the word “holographic,” I reach for the salt), and while they’re usually years away from practice and manufacture at best, they’re good to stay informed about, if for nothing else than cocktail chatter. “Did you hear about those new nanotube speakers?”

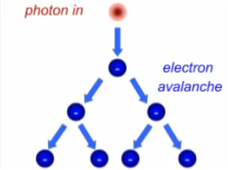

Well. The latest advancement is that IBM is thinking of replacing the conductive copper channels in today’s chips with light. But it’s more than a simple fiber-optic setup or something along those lines; the idea is that a single photon would set off an electron cascade, meaning that a significant charge could be effected on the far side of a gap, for the energy cost of sending out a single photon. The usual “this would enable computers a gazillion times faster” talk follows.

I see one major problem with this. We have systems like this in our bodies; IBM’s design is almost biomimetic. Our brains in particular can take a single “photon” (an action potential) and have it effect a huge change on a target neuron, provided that neuron is all charged up and ready to receive. But a few action potentials too many and the charged molecules that allow for a rapid multiplication of electrical power will be exhausted, and they take some time to recharge. You can actually see this for yourself, literally: stare at a light for a second and the little afterimages that appear before your eyes are a result of certain cells in your eyes being unable to “recharge” fast enough to propagate the correct signal.

There would be less risk of that if your eyes were plugged into the wall, of course. But at a small enough scale, there may be issues of heat or charge fatigue that get in the way, much as they do in the brain. Anyhow, there’s not much we can say at this point, since the research is at the “CG explanation on YouTube” stage, meaning we won’t hear from them for another couple of years.

[via PC World]