Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week in AI, Google paused its AI chatbot Gemini’s ability to generate images of people after a segment of users complained about historical inaccuracies. Told to depict “a Roman legion,” for instance, Gemini would show an anachronistic, cartoonish group of racially diverse foot soldiers while rendering “Zulu warriors” as Black.

It appears that Google — like some other AI vendors, including OpenAI — had implemented clumsy hardcoding under the hood to attempt to “correct” for biases in its model. In response to prompts like “show me images of only women” or “show me images of only men,” Gemini would refuse, asserting such images could “contribute to the exclusion and marginalization of other genders.” Gemini was also loath to generate images of people identified solely by their race — e.g. “white people” or “black people” — out of ostensible concern for “reducing individuals to their physical characteristics.”

Right-wingers have latched on to the bugs as evidence of a “woke” agenda being perpetuated by the tech elite. But it doesn’t take Occam’s razor to see the less nefarious truth: Google, burned by its tools’ biases before (see: classifying Black men as gorillas, mistaking thermal guns in Black people’s hands for weapons, etc.), is so desperate to avoid history repeating itself that it’s manifesting a less biased world in its image-generating models, however erroneous.

In her best-selling book “White Fragility,” anti-racist educator Robin DiAngelo writes about how the erasure of race (“color blindness,” by another phrase) contributes to systemic racial power imbalances rather than mitigating or alleviating them. By purporting to “not see color” or reinforcing the notion that simply acknowledging the struggle of people of other races is sufficient to label oneself “woke,” people perpetuate harm by avoiding any substantive conversation on the topic, DiAngelo says.

Google’s ginger treatment of race-based prompts in Gemini didn’t avoid the issue, per se, but disingenuously attempted to conceal the worst of the model’s biases. One could argue (and many have) that these biases shouldn’t be ignored or glossed over, but addressed in the broader context of the training data from which they arise: society on the World Wide Web.

Yes, the datasets used to train image generators generally contain more white people than Black people, and yes, the images of Black people in those datasets reinforce negative stereotypes. That’s why image generators sexualize certain women of color, depict white men in positions of authority and generally favor wealthy Western perspectives.

Some may argue that there’s no winning for AI vendors. Whether they tackle — or choose not to tackle — models’ biases, they’ll be criticized. And that’s true. But I posit that, either way, these models are lacking in explanation — packaged in a fashion that minimizes the ways in which their biases manifest.

Were AI vendors to address their models’ shortcomings head on, in humble and transparent language, it’d go a lot further than haphazard attempts at “fixing” what’s essentially unfixable bias. We all have bias, the truth is — and we don’t treat people the same as a result. Nor do the models we’re building. And we’d do well to acknowledge that.

Here are some other AI stories of note from the past few days:

- Women in AI: TechCrunch launched a series highlighting notable women in the field of AI. Read the list here.

- Stable Diffusion v3: Stability AI has announced Stable Diffusion 3, the latest and most powerful version of the company’s image-generating AI model, based on a new architecture.

- Chrome gets GenAI: Google’s new Gemini-powered tool in Chrome allows users to rewrite existing text on the web — or generate something completely new.

- Blacker than ChatGPT: Creative ad agency McKinney developed a quiz game, Are You Blacker than ChatGPT?, to shine a light on AI bias.

- Calls for laws: Hundreds of AI luminaries signed a public letter earlier this week calling for anti-deepfake legislation in the U.S.

- Match made in AI: OpenAI has a new customer in Match Group, the owner of apps including Hinge, Tinder and Match, whose employees will use OpenAI’s AI tech to accomplish work-related tasks.

- DeepMind safety: DeepMind, Google’s AI research division, has formed a new org, AI Safety and Alignment, made up of existing teams working on AI safety but also broadened to encompass new, specialized cohorts of GenAI researchers and engineers.

- Open models: Barely a week after launching the latest iteration of its Gemini models, Google released Gemma, a new family of lightweight open-weight models.

- House task force: The U.S. House of Representatives has founded a task force on AI that — as Devin writes — feels like a punt after years of indecision that show no sign of ending.

More machine learnings

AI models seem to know a lot, but what do they actually know? Well, the answer is nothing. But if you phrase the question slightly differently… they do seem to have internalized some “meanings” that are similar to what humans know. Although no AI truly understands what a cat or a dog is, could it have some sense of similarity encoded in its embeddings of those two words that is different from, say, cat and bottle? Amazon researchers believe so.

Their research compared the “trajectories” of similar but distinct sentences, like “the dog barked at the burglar” and “the burglar caused the dog to bark,” with those of grammatically similar but different sentences, like “a cat sleeps all day” and “a girl jogs all afternoon.” They found that the ones humans would find similar were indeed internally treated as more similar despite being grammatically different, and vice versa for the grammatically similar ones. OK, I feel like this paragraph was a little confusing, but suffice it to say that the meanings encoded in LLMs appear to be more robust and sophisticated than expected, not totally naïve.
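For a rough feel for what “a sense of similarity encoded in embeddings” means, here is a minimal Python sketch. It is an illustration under loud assumptions: the three-dimensional vectors are made up, and plain cosine similarity is a stand-in, not the “trajectory” comparison the Amazon researchers used. Real embeddings have hundreds or thousands of dimensions and come from a trained model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy vectors, invented for this example only.
toy_embeddings = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "bottle": [0.1, 0.2, 0.9],
}

cat_dog = cosine_similarity(toy_embeddings["cat"], toy_embeddings["dog"])
cat_bottle = cosine_similarity(toy_embeddings["cat"], toy_embeddings["bottle"])

# With these toy numbers, "cat" sits closer to "dog" than to "bottle".
print(f"cat vs. dog:    {cat_dog:.2f}")
print(f"cat vs. bottle: {cat_bottle:.2f}")
```

The point is only the shape of the measurement: two words (or sentences) count as “similar” to a model when their internal representations point in nearly the same direction.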

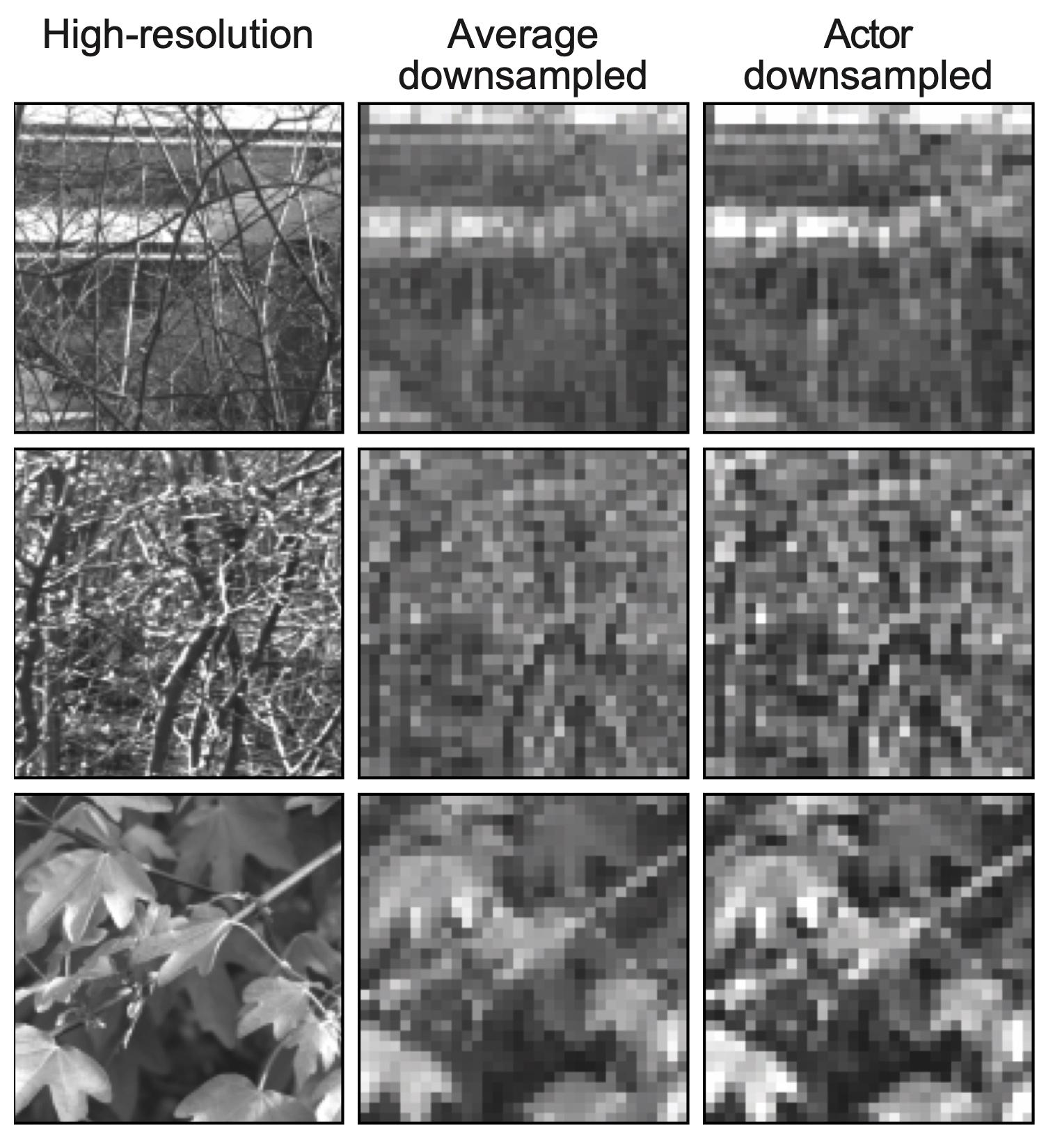

Neural encoding is proving useful in prosthetic vision, Swiss researchers at EPFL have found. Artificial retinas and other ways of replacing parts of the human visual system generally have very limited resolution due to the limitations of microelectrode arrays. So no matter how detailed the image is coming in, it has to be transmitted at a very low fidelity. But there are different ways of downsampling, and this team found that machine learning does a great job at it.

Image Credits: EPFL

“We found that if we applied a learning-based approach, we got improved results in terms of optimized sensory encoding. But more surprising was that when we used an unconstrained neural network, it learned to mimic aspects of retinal processing on its own,” said Diego Ghezzi in a news release. It does perceptual compression, basically. They tested it on mouse retinas, so it isn’t just theoretical.
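To make “different ways of downsampling” concrete, here is a small Python sketch of one fixed, non-learned option: averaging each block of pixels into a single value. This is not the EPFL team’s method, only an assumed example of the kind of hand-written rule that a learning-based encoder replaces. The function name and the tiny 4x4 “image” are hypothetical.

```python
def downsample_by_averaging(image, factor):
    """Shrink a grayscale image by averaging each factor-by-factor block.

    Assumes `image` is a list of equal-length rows whose height and width
    are both divisible by `factor`.
    """
    rows, cols = len(image), len(image[0])
    output = []
    for r in range(0, rows, factor):
        output_row = []
        for c in range(0, cols, factor):
            block = [
                image[r + i][c + j]
                for i in range(factor)
                for j in range(factor)
            ]
            output_row.append(sum(block) / len(block))
        output.append(output_row)
    return output


# Made-up 4x4 input reduced to 2x2, standing in for a low-resolution array.
image = [
    [0, 0, 8, 8],
    [0, 0, 8, 8],
    [4, 4, 2, 2],
    [4, 4, 2, 2],
]
low_res = downsample_by_averaging(image, 2)
print(low_res)
```

A fixed rule like this treats every block the same way; a learned encoder can instead decide which details are worth preserving for the viewer.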

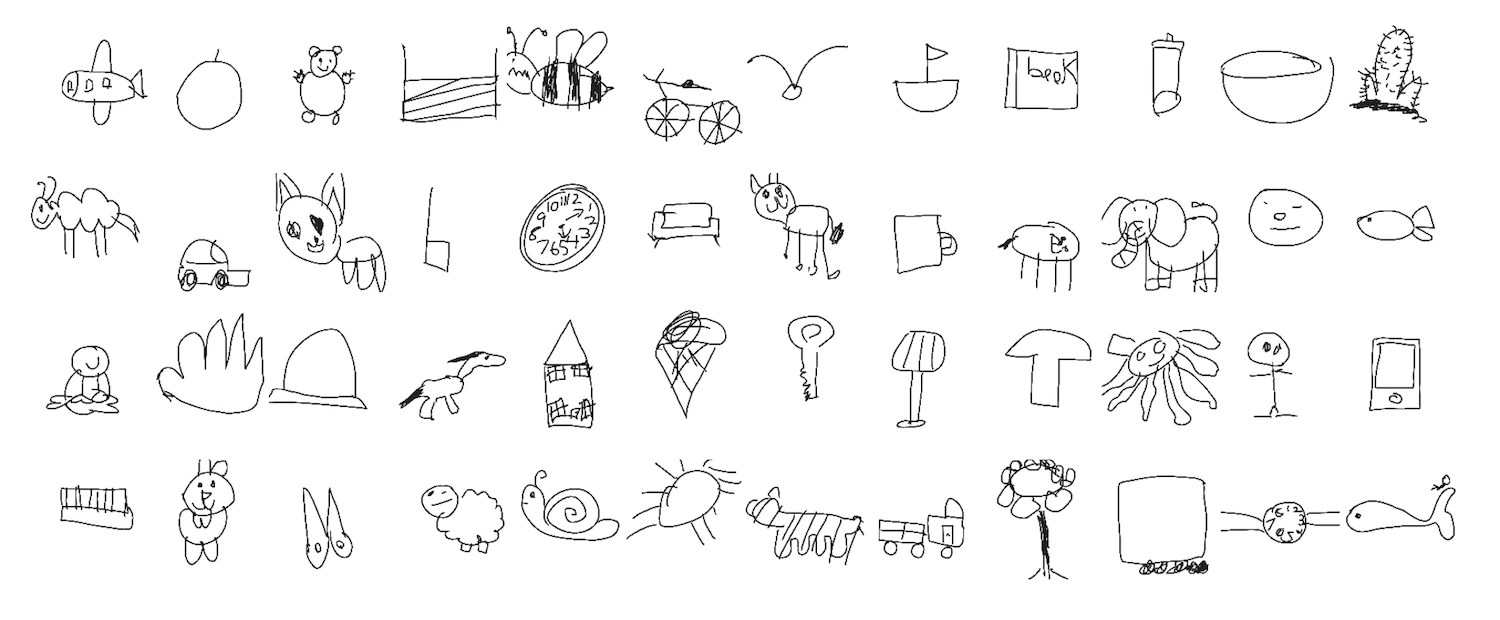

An interesting application of computer vision by Stanford researchers hints at a mystery in how children develop their drawing skills. The team solicited and analyzed 37,000 drawings by kids of various objects and animals, and also (based on kids’ responses) how recognizable each drawing was. Interestingly, it wasn’t just the inclusion of signature features like a rabbit’s ears that made drawings more recognizable by other kids.

Image Credits: Stanford

“The kinds of features that lead drawings from older children to be recognizable don’t seem to be driven by just a single feature that all the older kids learn to include in their drawings. It’s something much more complex that these machine learning systems are picking up on,” said lead researcher Judith Fan.

Chemists (also at EPFL) found that LLMs are also surprisingly adept at helping out with their work after minimal training. The models aren’t doing chemistry directly, but rather are fine-tuned on a body of work that chemists individually can’t possibly know all of. For instance, in thousands of papers there may be a few hundred statements about whether a high-entropy alloy is single or multiple phase (you don’t have to know what this means; they do). The system (based on GPT-3) can be trained on this type of yes/no question and answer, and soon is able to extrapolate from that.

It’s not some huge advance, just more evidence that LLMs are a useful tool in this sense. “The point is that this is as easy as doing a literature search, which works for many chemical problems,” said researcher Berend Smit. “Querying a foundational model might become a routine way to bootstrap a project.”

Last, a word of caution from Berkeley researchers, though now that I’m reading the post again I see EPFL was involved with this one too. Go Lausanne! The group found that imagery found via Google was much more likely to reinforce gender stereotypes for certain jobs and words than text mentioning the same thing. And there were also just way more men present in both cases.

Not only that, but in an experiment, they found that people who viewed images rather than reading text when researching a role associated those roles with one gender more reliably, even days later. “This isn’t only about the frequency of gender bias online,” said researcher Douglas Guilbeault. “Part of the story here is that there’s something very sticky, very potent about images’ representation of people that text just doesn’t have.”

With stuff like the Google image generator diversity fracas going on, it’s easy to lose sight of the established and frequently verified fact that the source of data for many AI models shows serious bias, and this bias has a real effect on people.