This week, I heard from an ex-colleague I hadn’t heard from in a few years. Let’s call him Jeremy. He wrote me a lovely email, referring to a moment I have such a clear memory of. He asked about my health, mentioned that he’d seen I recently had pneumonia. After telling me a little bit about his own life, he tried to sell me a consulting service I absolutely did not need.

There were three strange things about that interaction. First, I know this person’s writing style pretty well, and, well, he’s a man of few words; this email was a lot more eloquent than the many other emails I’ve received from him. Second, it was a little odd to receive an email from him out of the blue: We were never quite friends, but we were good colleagues who’d share coffee a handful of times per month. And finally, it was weird to be sold to when I suspect Jeremy knows better; I’m a journalist and a consultant, and I definitely am not in need of outsourced software development.

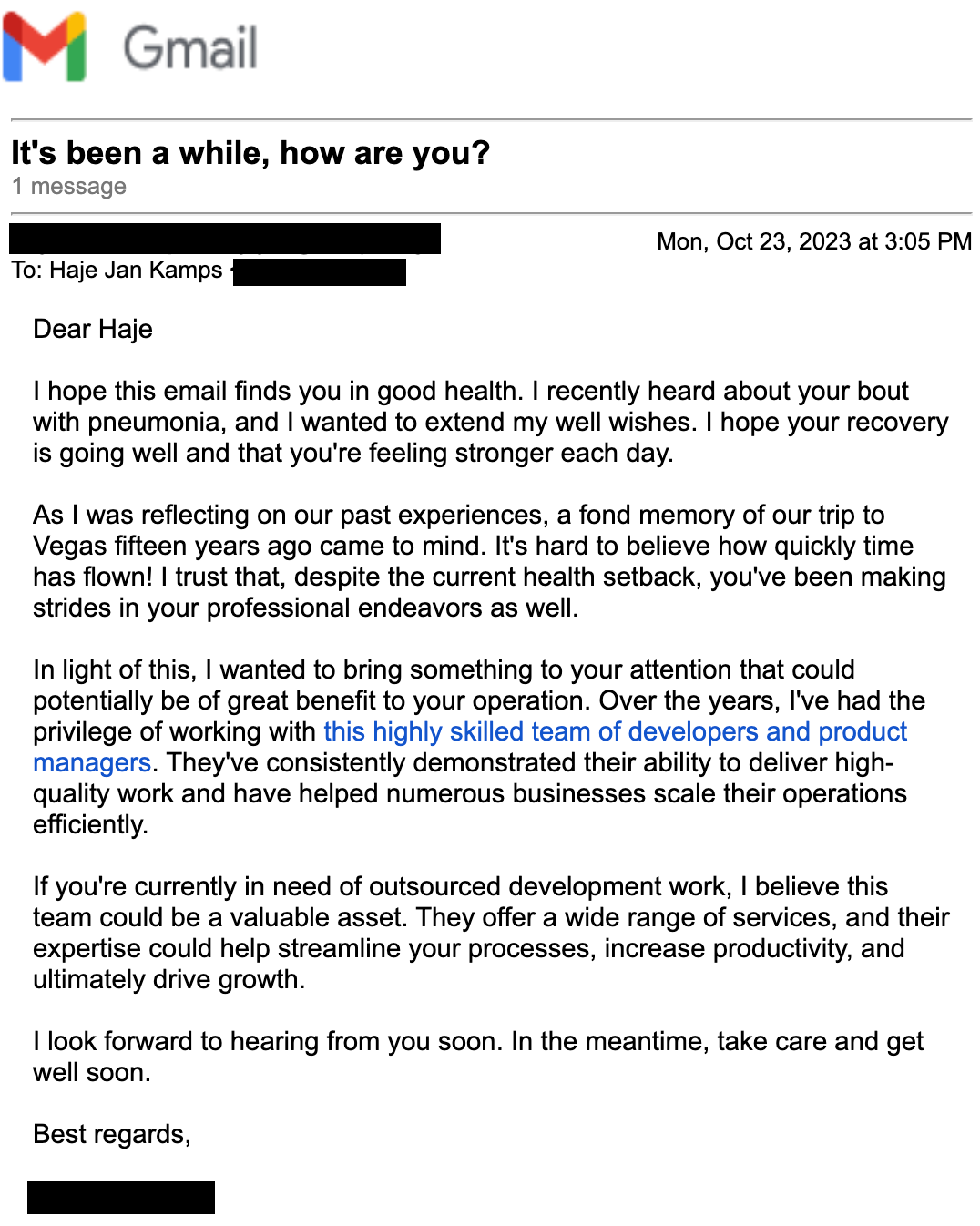

The email from my ex-colleague “Jeremy” that had me fooled for way too long. Image Credits: Screenshot from Gmail

After reading this email twice, I realized what had happened. Jeremy had used some sort of an AI-powered writing tool to write the email to me. It was good enough that I didn’t immediately realize it. Doubly so because it seems like this tool was reading my Twitter and other public data sources to build up a picture of what was happening in my life.

That’s when it fully clicked: Sales email, phishing and spam are about to go to a whole new level.

And that makes a lot of sense.

As artificial intelligence continues to evolve, it’s becoming more adept at generating human-like text. This means that the days of easily identifying spam emails due to their awkward phrasing or blatant sales pitches are fading. Instead, we’re moving toward an era where AI, specifically generative AI, can craft convincing, personalized emails that are difficult to distinguish from those written by a human.

Generative AI uses machine learning algorithms to analyze large amounts of data and understand patterns, context and nuances in human language. It can then use this understanding to generate new, original content that mimics human writing style. This includes everything from tone of voice to specific phrasing and even the use of colloquialisms, making the generated text seem incredibly authentic.

In the context of spam sales emails, this is what made the email I received from Jeremy particularly tricky. AI can, and often does, generate emails that appear to come from a familiar contact, like an old colleague or friend.

This level of personalization and authenticity could make spam sales emails far more convincing, increasing the likelihood that recipients will engage with the content. It could also make it harder for spam filters to detect these emails because they lack the obvious signs usually associated with spam.

The wild thing is this: I reached out to Jeremy, and he told me he hadn’t sent me an email. When I forwarded him what I had received, he was completely befuddled. And then we realized something: The link in the email for more information was to a company Jeremy has nothing to do with. The email itself was sent from a Gmail address that Jeremy says he has never used. In other words: This was spam, but it was the most sophisticated spam I’ve seen in a while.

It threw me for a loop, too! I knew something was off, but I couldn’t quite put my finger on what. And if I had been slightly less on the ball, I would have definitely fallen for this, and I can imagine a number of less tech-savvy people in my life would have, too. Of course, someone trying to sell me an irrelevant service isn’t really going to go anywhere, but what if this were some sort of a more nefarious scam? There are many, and retirees are targeted particularly often. Research from 2017 suggests that 5.4% of older people fall victim to scams. That’s around one out of every 18 people; as AI gets more sophisticated, it’s hard to imagine that number dropping.

Generative AI holds immense potential for a range of applications, but its ability to craft convincing spam sales emails (and realistic-looking fake news) presents a significant challenge that must be addressed to ensure online safety and security. Part of the solution will be education, teaching people what to look for so they don’t fall for scams, but the tech world has been trying to educate the less tech-savvy for as long as the internet has existed, and so far there’s been moderate success at best. The other part of the solution will be technology: The AI being built to stop AI spam needs to keep developing at high speed.

Suffice it to say, things just got a lot more interesting for anyone working in spam mitigation, and the spammers have both a head start and a higher incentive to win, at least in the short term.

Keep an eye on that inbox, and talk to your friends and family about how to stay safe(r).