YouTube Shorts is making a change to address the growing issues around spam on the short-form video platform. The company says that starting on August 31st, links that appear in the Shorts comments section, Shorts descriptions, and the vertical live feed will no longer be clickable. This new policy is meant to be a preventative measure that makes it harder for scammers and spammers to mislead and scam users via links.

The company notes the change was necessary because the spammy links could drive users to dangerous content, like malware, phishing, or other scams.

The measure is fairly extreme, given that YouTube already has systems and policies designed to detect and remove spammy links. Instead of relying on that technology, however, it's disabling these links altogether. The changes will roll out gradually, which means not all links will be disabled as of August 31st.

In addition, the company is removing the clickable social media icons from all desktop channel banners, as those have also been used to scam users with misleading links.

However, legitimate creators sometimes need links, particularly to monetize their content by recommending products and brands to their followers. To address this, YouTube says it will introduce new ways for those creators to include links in their content safely.

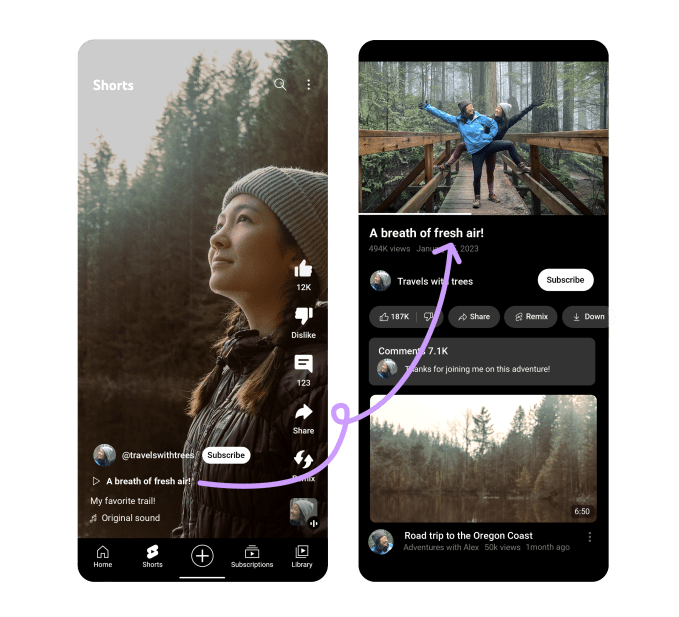

Image Credits: YouTube

Starting on August 23, YouTube viewers on mobile and desktop will begin to see “prominent” clickable links on creators’ channel profiles near their ‘Subscribe’ button. Creators can use this space to link out to websites, their other social profiles, merchandise sites, and other links that adhere to YouTube’s Community Guidelines.

In addition, Shorts creators who use links to point viewers to their long-form videos will soon have an alternative. By the end of September, YouTube says it will introduce a new, safer way for those creators to drive viewers from Shorts to their other YouTube content.

These changes follow other measures YouTube has enacted to cut down on spam across the platform, including improvements to systems that detect impersonation channels. From Q1 2022 to Q1 2023, YouTube says it’s increased the number of impersonation-related removals and terminations by over 35%.

It's also improved its feature that detects and holds potentially spammy and inappropriate comments for optional review by creators. After the changes went live, the number of comments held for review in the first week of June was 200% higher than in the first week of May.