Nvidia today announced that it has been working with VMware to bring its virtual GPU technology (vGPU) to VMware’s vSphere and VMware Cloud on AWS. The company’s core vGPU technology isn’t new, but it now supports server virtualization to enable enterprises to run their hardware-accelerated AI and data science workloads in environments like VMware’s vSphere, using its new vComputeServer technology.

Traditionally (as far as that’s a thing in AI training), GPU-accelerated workloads have tended to run on bare metal servers, which are typically managed separately from the rest of a company’s servers.

“With vComputeServer, IT admins can better streamline management of GPU accelerated virtualized servers while retaining existing workflows and lowering overall operational costs,” Nvidia explains in today’s announcement. This also means that businesses will reap the cost benefits of GPU sharing and aggregation, thanks to the improved utilization this technology promises.

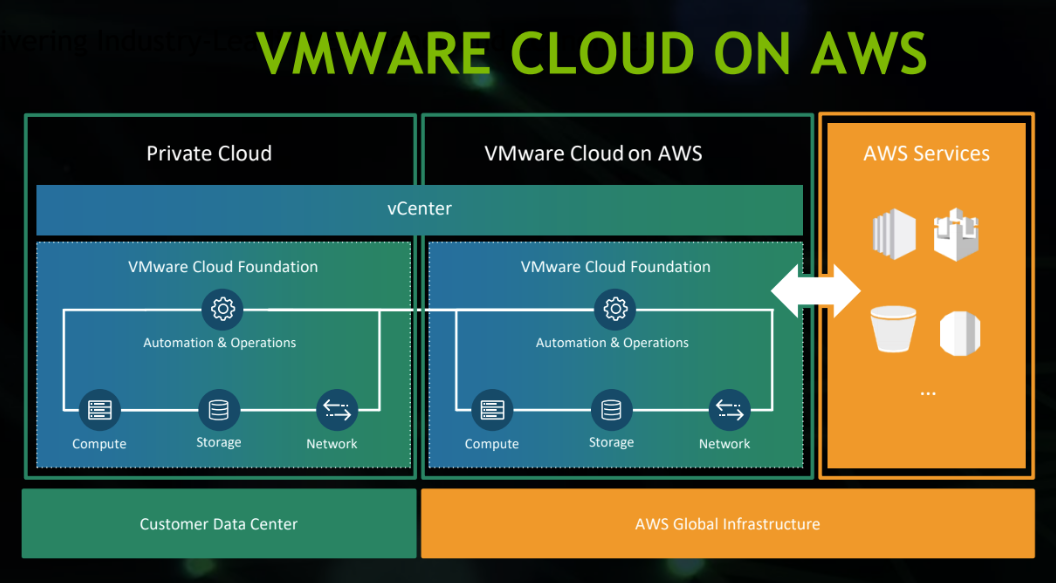

Note that vComputeServer works with VMware vSphere, vCenter and vMotion, as well as VMware Cloud. Indeed, the two companies are using the same vComputeServer technology to also bring accelerated GPU services to VMware Cloud on AWS. This allows enterprises to move their containerized applications from their own data center to the cloud as needed, and then hook into AWS’s other cloud-based technologies.

“From operational intelligence to artificial intelligence, businesses rely on GPU-accelerated computing to make fast, accurate predictions that directly impact their bottom line,” said Nvidia founder and CEO Jensen Huang. “Together with VMware, we’re designing the most advanced and highest performing GPU-accelerated hybrid cloud infrastructure to foster innovation across the enterprise.”