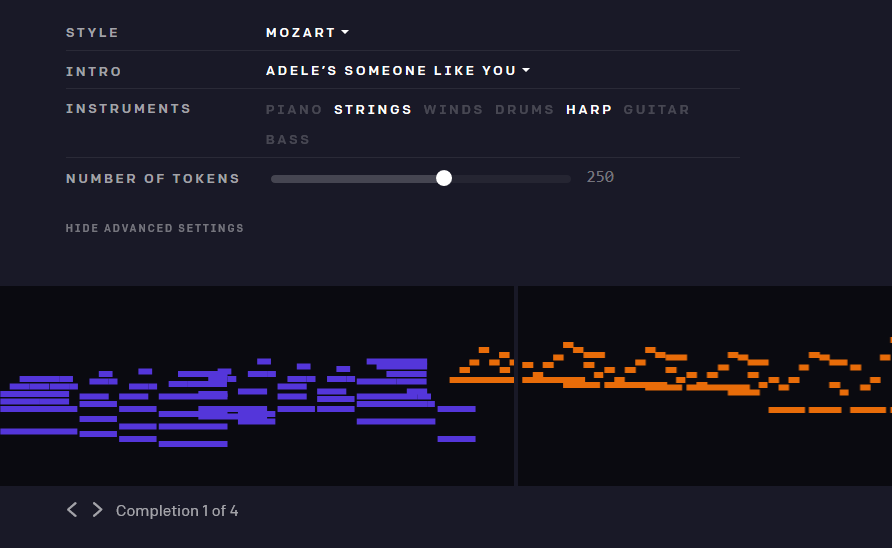

Have you ever wanted to hear a concerto for piano and harp, in the style of Mozart by way of Katy Perry? Well, why not? Because now you can, with OpenAI’s latest (and blessedly not potentially catastrophic) creation, MuseNet. This machine learning model produces never-before-heard music based on its knowledge of artists and a few bars to fake it with.

This is far from unprecedented (computer-generated music has been around for decades), but OpenAI’s approach appears to be flexible and scalable, producing music informed by a variety of genres and artists, and cross-pollinating them in a form of auditory style transfer. It shares a lot of DNA with GPT-2, the language model “too dangerous to release,” but the threat of unleashing unlimited music on the world seems small compared with undetectable computer-generated text.

MuseNet was trained on works from dozens of artists, from well-known historical figures like Chopin and Bach to (comparatively) modern artists like Adele and the Beatles, plus collections of African, Arabic and Indian music. Its complex machine learning system paid a great deal of “attention,” which is a technical term in AI work for, essentially, the amount of context the model uses to inform the next step in its creation.

Take, for instance, a piece by Mozart. If the model only attended to a couple seconds at a time, it would never be able to learn the larger musical structures of a symphony as it grew and receded, switched tones and instruments. But the model was given enough virtual brainspace to hold onto about four full minutes of sound, more than enough to grasp something like a slow start to a big finish, or a basic verse-chorus-verse structure.

Theoretically, that is. The model doesn’t actually understand music theory, just that this note followed this note, which followed this note, which tends to come after this type of chord, and so on. Its creations are elementary in their structure, but it’s pretty clear listening to them that it is indeed successfully aping the songs it ingested.
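To make the "this note followed this note" idea concrete, here is a toy sketch in Python. It is emphatically not MuseNet's method: it is a simple lookup table that counts which note tends to follow the previous two, standing in for a far larger model with a far longer memory. The function names (`train`, `generate`), the two-note context, and the nursery-rhyme training data are all assumptions made up for illustration.

```python
import random
from collections import Counter, defaultdict


def train(notes, context_len):
    """Count which note follows each run of `context_len` notes."""
    table = defaultdict(Counter)
    for i in range(len(notes) - context_len):
        context = tuple(notes[i:i + context_len])
        table[context][notes[i + context_len]] += 1
    return table


def generate(table, seed, length, rng):
    """Extend `seed` by up to `length` notes, sampling by observed frequency."""
    context_len = len(seed)  # assumed to be at least 1
    out = list(seed)
    for _ in range(length):
        counts = table.get(tuple(out[-context_len:]))
        if not counts:  # this context never appeared in training
            break
        choices, weights = zip(*counts.items())
        out.append(rng.choices(choices, weights=weights)[0])
    return out


# Hypothetical training data: the opening of "Frère Jacques" as note names.
melody = ["C", "D", "E", "C", "C", "D", "E", "C",
          "E", "F", "G", "E", "F", "G"]

table = train(melody, context_len=2)
continuation = generate(table, seed=["C", "D"], length=8,
                        rng=random.Random(0))
print(" ".join(continuation))
```

With only two notes of context, the toy can parrot local patterns but has no way to know it is halfway through a verse. That is the gap a longer window, like the four minutes described above, is meant to close.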

What makes it impressive is that a single model does this reliably across so many types of music. AIs have been created, like the fabulous Google Doodle for Bach’s birthday a couple weeks back, that focus on a specific artist or genre. And as a comparison I’ve been listening to Generative.fm, which creates just the type of sparse ambient music I like to listen to while I work (if you like it too, check out one of my favorite labels, Serein). But both those models have their limits very strictly defined. Not so with MuseNet.

In addition to being able to belt out infinite bluegrass or baroque piano pieces, MuseNet can apply a style transfer process to combine the characteristics of both. Different parts of a work can have different attributes — in a painting you might have composition, subject, color choice and brush style to start. Imagine a Pre-Raphaelite subject and composition but with Impressionist execution. Sounds fun, right? AI models are great at doing this because they sort of compartmentalize these different aspects. It’s the same type of thing in music: The note choice, cadence and other patterns of a pop song can be drawn out and used separately from its instrumentation — why not do Beach Boys harmonies on a harp?

It’s a little hard, however, to get a sense of the likes of Adele without her distinctive voice, and the rather basic synths the team has chosen cheapen the effect overall. And after listening to the “live concert” the team gave on Twitch for a bit, I wasn’t convinced that MuseNet is the next hit machine. On the other hand, it pretty regularly hit a good stride, especially in jazz and classical improvisations, where a bit of an off note can be played off and the rhythms don’t feel so contrived.

What’s it for? Your idea is as good as anyone’s, really. This field is quite new. MuseNet’s project lead, Christine Payne, is pleased with the model and has already found someone to use it:

> As a classically trained pianist, I’m particularly excited to see that MuseNet is able to understand the complex harmonic structures of Beethoven and Chopin. I’m working now with a composer who plans to integrate MuseNet into his own compositions, and I’m excited to see where the future of Human/AI co-composing will take us.

An OpenAI representative also said that the team has started integrating works from contemporary composers who want to see how the model interprets or imitates their style.

MuseNet will be available for you to play with through mid-May, at which point it will be taken offline and adjusted based on feedback from users, and soon (think weeks) it will be at least partially open-sourced. I imagine popular combinations and ones that people listened to all the way through will get a bit more weight in the tweaks. Here’s hoping they add a bit more expression to the MIDI execution as well — it does often feel like these pieces are being played by a robot. But it’s testament to the quality of OpenAI’s work that they frequently sound perfectly good as well.