Google today showed off new ways it’s combining the smartphone camera’s ability to see the world around you with the power of A.I. technology. The company, at its Google I/O developer conference, demonstrated a clever way it’s using the camera and Google Maps together to help people better navigate around their city, as well as a handful of new features for its Google Lens technology, first announced at last year’s I/O.

The maps integration combines the camera, computer vision technology, and Google Maps with Street View.

The idea is similar to how people navigate without technology – they look for notable landmarks, not just street signs.

With the camera/Maps combination, Google is doing that now, too. It’s like you’ve jumped inside Street View, in fact.

The Google Maps user interface sits at the bottom of the screen, while the camera shows you what’s in front of you. There’s even an animated guide (a fox) that you can follow to find your way.

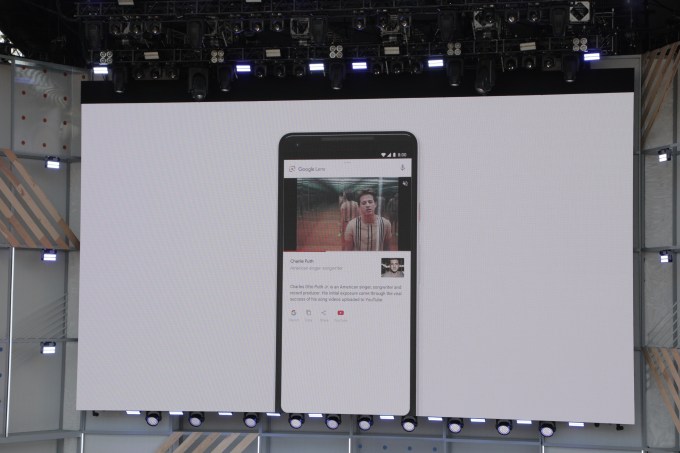

The feature was introduced ahead of several new additions for Google Lens, Google’s smart camera technology.

Already, Google Lens can do things like identify buildings, or even dog breeds, just by pointing your camera at the object (or pet) in question.

An updated version of Google Lens will be able to identify text, too. For example, if you’re looking at a menu, you could point the camera at the menu text to learn what a dish consists of – in the example on stage, Google demonstrated Lens identifying the components of ratatouille.

The feature can also work for things like text on traffic signs, posters or business cards.

Google Lens isn’t just reading the words; it’s understanding the meaning and context behind them, which is what makes the feature so powerful.

For example, you can copy and paste text from the real world (like recipes, gift card codes, or Wi-Fi passwords) to your phone. This feature was demonstrated at last year’s Google I/O, but is only now rolling out.

Another new feature called Style Match is similar to the Pinterest-like fashion search option that previously launched in Google Images.

With this, you can point the camera at an item of clothing, like a shirt or pants, or even an accessory like a handbag, and Lens will find items that match that piece’s style. It does this by running searches through millions of items, but also by understanding things like different textures, shapes, angles and lighting conditions.

Finally, Google Lens is adding real-time functionality, meaning it will actively seek out things to identify when you point the camera at the world around you, then attempt to anchor its focus to a given item and present the information about it.

This is possible because of advances in machine learning, using both on-device intelligence and cloud TPUs, which allow Lens to identify billions of words, phrases, places, and things in a split second, says Google.

It can also display the results of what it finds on top of things like store fronts, street signs or concert posters.

“The camera is not just answering questions, but putting the answers right where the questions are,” noted Aparna Chennapragada, Head of Product for Google’s Augmented Reality, Virtual Reality and Vision-based products (Lens), at the event.

Google Lens has previously been available in Photos and Google Assistant, but will now be integrated right into the Camera app across a variety of top manufacturers’ devices, including LGE, Motorola, Xiaomi, Sony Mobile, HMD/Nokia, Transsion, TCL, OnePlus, BQ, and Asus, as well as Google Pixel devices. (See below).

The updated features for Google Lens will arrive in the next few weeks.