Comet.ml allows data scientists and developers to easily monitor, compare and optimize their machine learning models. The New York-based company is launching its product today, after completing the TechStars-powered Amazon Alexa Accelerator program and raising a $2.3 million seed round led by Trilogy Equity Partners, together with Two Sigma Ventures, Founders Co-Op, Fathom Capital, TechStars Ventures and angel investors.

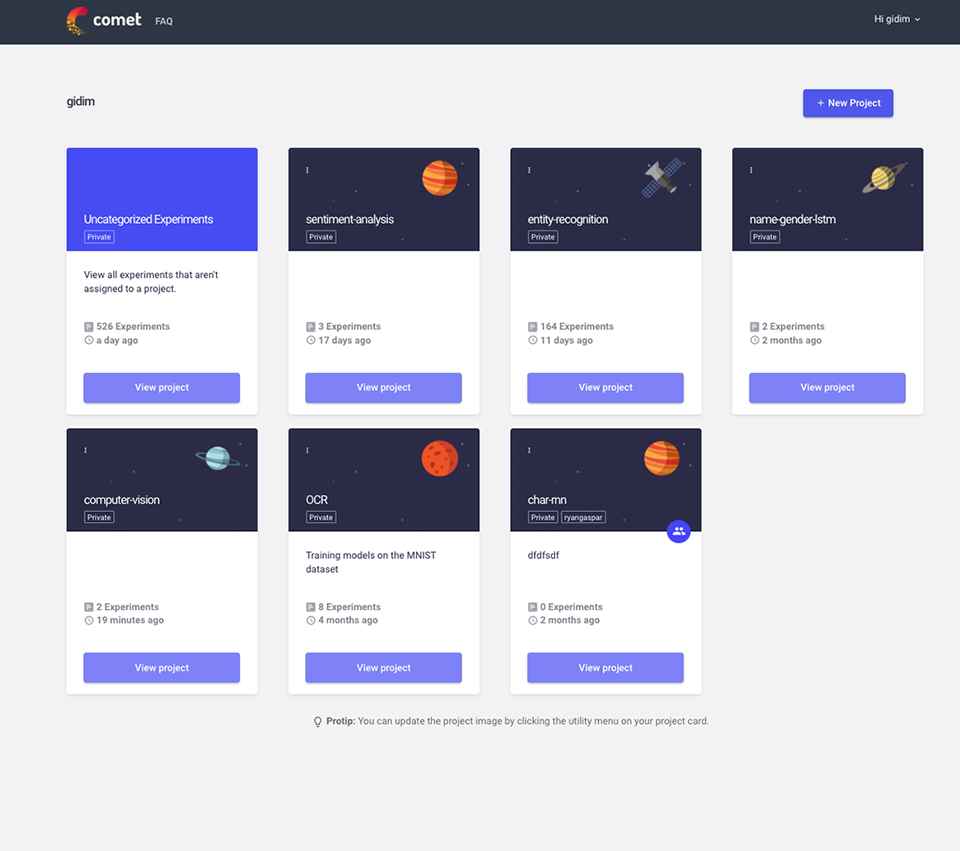

The service provides you with a dashboard that brings together the code of your machine learning (ML) experiments and their results. It also allows you to optimize your models by tweaking the hyperparameters of your experiments. As you train your model, Comet tracks the results and graphs them, and it tracks your code changes and imports them as well, so that you can later compare the different versions of your experiments side by side.

Developers can easily integrate Comet into their machine learning frameworks, no matter whether they use the Keras API, TensorFlow, scikit-learn, PyTorch or simply like to write Java code. To get started, developers add the Comet.ml tracking code to their apps and run their experiments as usual. The service is completely agnostic as to where you train your models, and you can share access to your results with the rest of your team.

Ideally, this means that data scientists can stick with their existing workflow and development tools, but in addition to those, they now have a new tool that gives them better insights into how well their experiments are working.
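To make the idea of experiment tracking a bit more concrete, here is a minimal sketch of the general pattern: record each run's hyperparameters and metrics next to the training code, then compare runs afterwards. This is not Comet.ml's actual API. The `ExperimentRecord` class, its `log_parameter` and `log_metric` methods and the toy `train` function are all hypothetical stand-ins written in plain Python for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ExperimentRecord:
    """Hypothetical stand-in for a tracked experiment (not Comet.ml's API)."""
    name: str
    params: dict = field(default_factory=dict)
    metrics: dict = field(default_factory=dict)

    def log_parameter(self, key, value):
        self.params[key] = value

    def log_metric(self, key, value, step):
        # Keep the full history so the results could be graphed later.
        self.metrics.setdefault(key, []).append((step, value))

    def final(self, key):
        # Most recent value logged for this metric.
        return self.metrics[key][-1][1]


def train(record, learning_rate, steps=50):
    """Toy 'training' loop: gradient descent on f(w) = (w - 3)^2."""
    record.log_parameter("learning_rate", learning_rate)
    w = 0.0
    for step in range(steps):
        w -= learning_rate * 2 * (w - 3)  # gradient of (w - 3)^2 is 2(w - 3)
        record.log_metric("loss", (w - 3) ** 2, step)
    return w


# Run the same experiment with different hyperparameters and keep every record.
runs = []
for lr in (0.01, 0.1, 0.4):
    record = ExperimentRecord(name=f"lr={lr}")
    train(record, lr)
    runs.append(record)

# Compare the runs by their final loss.
best = min(runs, key=lambda r: r.final("loss"))
print(best.name, best.final("loss"))
```

The training loop itself barely changes; the only additions are the logging calls, which is roughly the "add tracking code and run as usual" workflow described above. A hosted service would additionally store these records centrally and tie them to the code version that produced them.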

“We realized that ML teams look a lot like software teams looked ten or fifteen years ago,” Comet.ml co-founder and CEO Gideon Mendels told me. While software teams now have version control and tools like GitHub to share their code, ML teams still often share data and code by email. “The main issue isn’t discipline but the state of tooling,” said Mendels. “Current tools like GitHub are a great solution for software engineering, but for ML teams — even though code is a main component — it’s not everything.”

Mendels tells me that the team signed up about 500 data scientists (including from some top-tier tech companies) during its closed beta. So far, these users have developed about 6,000 models on the platform.

Looking ahead, the Comet.ml team plans to give developers more tools to build better and more accurate models, though as Mendels noted, to do that, the company had to get this first building block in place.

Comet.ml is now available to all developers who want to give it a try. There’s a free tier that allows for unlimited public projects and, similar to GitHub, a number of paid tiers for teams that want to keep their projects private.