The Google Kubernetes Engine (previously known as the Google Container Engine and GKE) now allows all developers to attach Nvidia GPUs to their containers.

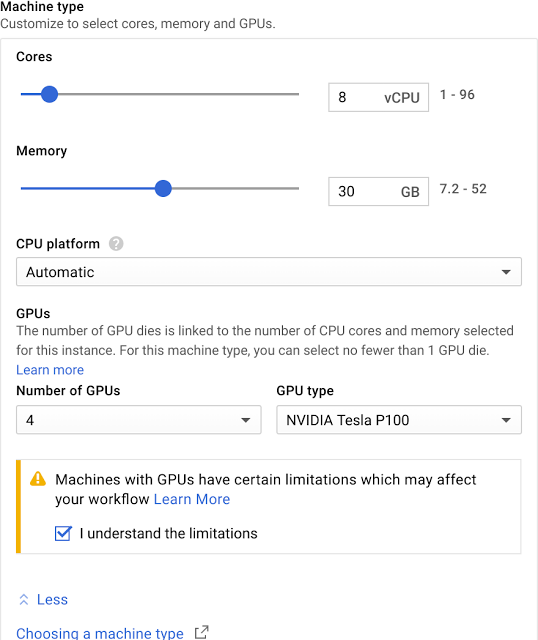

GPUs on GKE (an acronym Google used to be quite fond of, but seems to be deemphasizing now) have been available in closed alpha for more than half a year. Now, however, this service is in beta and open to all developers who want to run machine learning applications or other workloads that could benefit from a GPU. As Google notes, the service offers access to both the Tesla P100 and K80 GPUs that are currently available on the Google Cloud Platform.
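For readers wondering what attaching a GPU to a container looks like in practice, here is a minimal sketch in Python that builds a Kubernetes Pod manifest requesting one GPU. It assumes the `nvidia.com/gpu` resource name and the `cloud.google.com/gke-accelerator` node label that Kubernetes and GKE use for GPU scheduling. The helper `gpu_pod_manifest`, the pod name and the image URL are hypothetical placeholders, not anything from Google's announcement.

```python
import json


def gpu_pod_manifest(name, image, gpu_count=1, gpu_type="nvidia-tesla-k80"):
    """Build a Pod manifest (as a plain dict) whose container requests GPUs.

    Hypothetical helper for illustration only. The resource name and node
    label are assumptions about how the cluster exposes its GPUs.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Schedule only onto nodes that carry the requested GPU type.
            "nodeSelector": {"cloud.google.com/gke-accelerator": gpu_type},
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # GPUs are requested as a resource limit on the container.
                    "resources": {"limits": {"nvidia.com/gpu": gpu_count}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Placeholder name and image; substitute your own workload.
    manifest = gpu_pod_manifest("gpu-demo", "example.com/my-training-image:latest")
    print(json.dumps(manifest, indent=2))
```

The script prints JSON, which `kubectl apply -f` accepts alongside YAML. Whether the pod actually gets scheduled depends on the cluster having a GPU node pool and the Nvidia drivers installed.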

The advantage of the container/GPU combo is that you can easily scale your workloads up and down as needed. Most GPU workloads probably aren’t all that spiky, but in case yours are, this looks like a good option to evaluate.

Google makes it easy to monitor GPU jobs through its API and its Stackdriver monitoring and logging service.

Overall, the Kubernetes Engine (which seems to be Google’s preferred nom de guerre for the GKE now) saw its core-hours grow 9x year over year in 2017. That’s no surprise, given the hype around containers and the fact that the GKE, as it was called at the time, only launched in 2016, but it does show that Google may just have a winner here.