Back in November, Google showcased a few of its funky machine learning experiments, and among them was Quick, Draw! (their bang, not mine) — a game where you sketch something and an image recognition system guesses what it is. Now the company is releasing the millions upon millions of sketches players submitted as an open data set for AI developers to play with.

Now, if the prospect of browsing through a bunch (I’m talking 50 million here) of terrible drawings of hats, shoes, and cats doesn’t sound like fun to you, don’t worry. That’s not really the point.

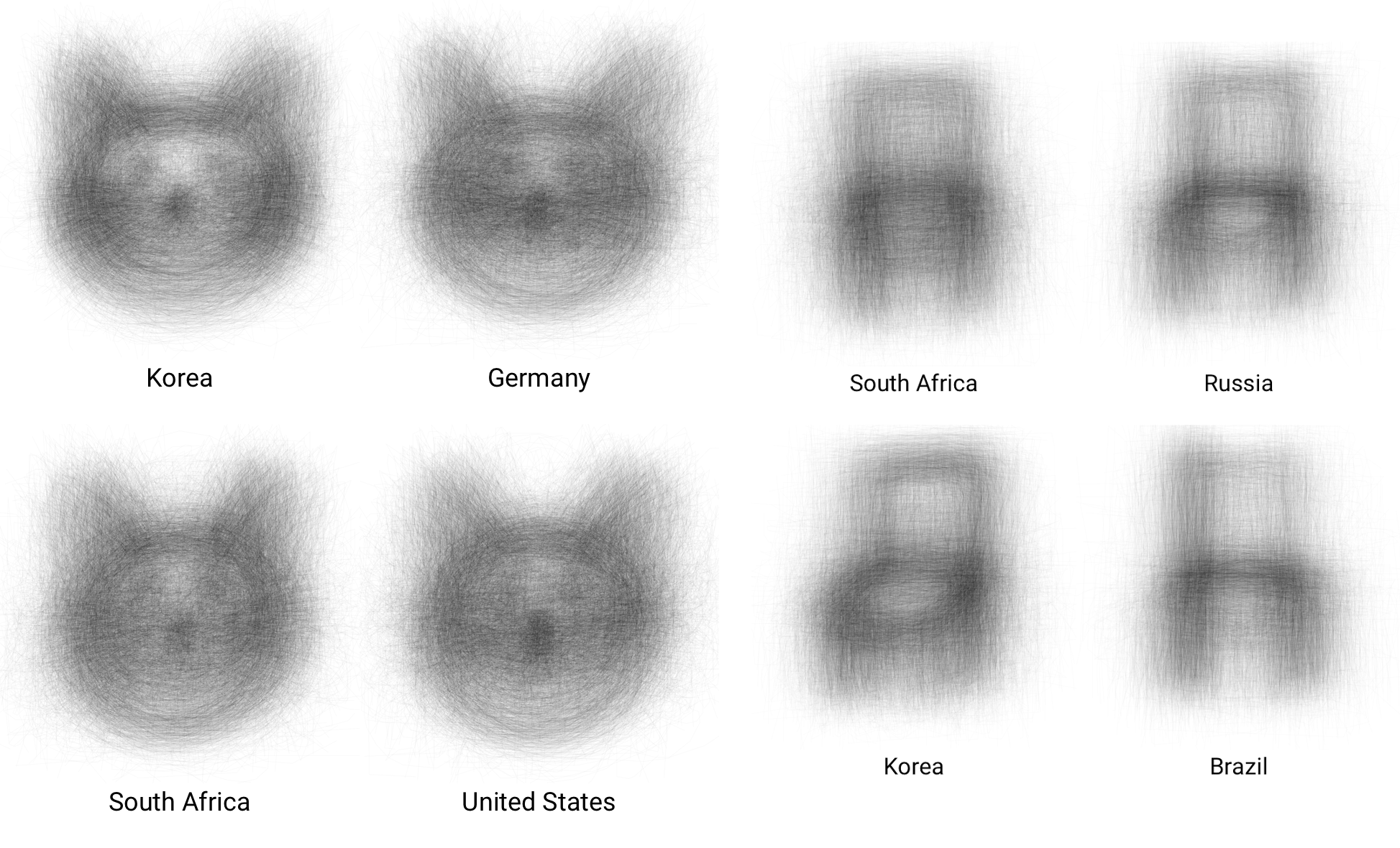

Really it’s about the metadata. Those drawings came from lots of different countries, and it’s fun to check out how differently, say, Germany and Korea think of cats. Or chairs!

Well look, there are patterns in there worth sussing out. Clearly Korean and Russian sketchers were more likely to draw the chair at an angle or sideways. Why? That’s what you’re supposed to find out with your machine learning system!

There are actually lots of interesting differences. As Google’s post points out, a bias appeared toward the sneaker-type shoe, so much so that the system would have trouble recognizing a high heel or sandal. And what about cats? Surely there are sub-cat-egories of how people chose to draw them. I think I did one, actually, and I drew the whole cat. Am I some strange, handsome exception? If only I knew how to create a machine learning system to find out (hint).

Google suggests you use their new Facets tool to visualize the enormous amount of data in the set. And that’s really the interesting part of this whole thing. When you have data sets this big, in the hundreds of millions of examples, how can you sort and observe them even at a gross level so that people can find coarse patterns and ideas worth pursuing? And how can you spot things like systematic biases or opportunities for improvement?
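For the curious, here’s roughly what a first, coarse pass over the metadata could look like in Python. This is a sketch under assumptions, not Google’s method and not Facets itself: it assumes the drawings come as newline-delimited JSON and that each record has fields named `word`, `countrycode`, `recognized` and `drawing` (a list of strokes). Those field names, the made-up sample records and the `summarize_by_country` helper are all illustrative.

```python
import io
import json
from collections import defaultdict

# Hypothetical sample records. The field names and values are assumptions
# for illustration, not data taken from the actual release.
SAMPLE = """\
{"word": "chair", "countrycode": "DE", "recognized": true, "drawing": [[[0, 10], [0, 20]], [[10, 10], [20, 0]]]}
{"word": "chair", "countrycode": "KR", "recognized": true, "drawing": [[[0, 10, 15], [0, 20, 25]]]}
{"word": "chair", "countrycode": "KR", "recognized": false, "drawing": [[[0, 5], [0, 5]], [[5, 9], [5, 1]], [[1, 2], [3, 4]]]}
{"word": "cat", "countrycode": "DE", "recognized": true, "drawing": [[[0, 1], [0, 1]]]}
"""


def summarize_by_country(lines, word):
    """Return {country: (count, mean stroke count, recognized share)} for one word."""
    totals = defaultdict(lambda: [0, 0, 0])  # count, total strokes, recognized
    for line in lines:
        line = line.strip()
        if not line:
            continue
        record = json.loads(line)
        if record["word"] != word:
            continue
        entry = totals[record["countrycode"]]
        entry[0] += 1
        entry[1] += len(record["drawing"])  # one list entry per stroke
        entry[2] += 1 if record["recognized"] else 0
    return {
        country: (count, strokes / count, recognized / count)
        for country, (count, strokes, recognized) in totals.items()
    }


# With a real file you would pass an open file handle instead of the sample.
summary = summarize_by_country(io.StringIO(SAMPLE), "chair")
for country, (count, mean_strokes, share) in sorted(summary.items()):
    print(country, count, mean_strokes, share)
```

Stroke count is a crude stand-in for drawing style, but the same loop works for any per-drawing feature you care to compute, and reading line by line means the whole set never has to fit in memory.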

The 50 million drawings are just a start — the other 750 million or so will be released over time, and presumably interesting data from other projects as well. Keep an eye on the Google Research blog (or TechCrunch, obviously) for the latest.