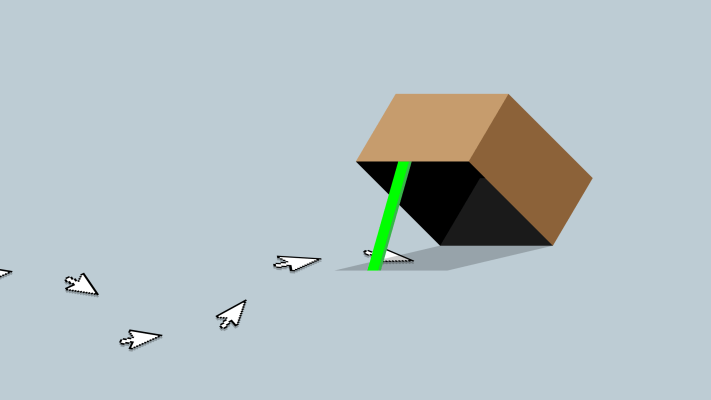

“Cloaking” sounds like science fiction, but it’s a trick spammers use today to show content moderators and search engine crawlers an innocent-looking version of their site while real visitors see ads and scams. For example, some spammers try to fool Facebook’s review team and automated systems by showing a benign landing page to any visitor coming from a Facebook IP address, while everyone else sees diet pill scams or porn that violate Facebook’s Community Standards and ad policies.

So today, Facebook is cracking down on cloaking. Facebook ads product director Rob Leathern tells me that when it now discovers a site using cloaking, “We’ll deactivate their ad accounts, we’ll kick them off, we’ll get rid of their Pages.” Facebook will use both human reviewers and expanded artificial intelligence systems to root out cloakers. However, it isn’t publicly disclosing the signals it uses to identify cloaking, so as not to tip off the spammers.

Legitimate businesses should see no impact. “There’s no legitimate use case for cloaking,” Leathern says. “If we find it, it doesn’t really matter who that actor is. They’re usually bad actors and spammers by definition. So the line is if anyone does this in any way, shape, or form, we want them off the platform.” In other words, Facebook is targeting the act of cloaking itself rather than passing judgment on a site’s content.

The change is part of a multi-pronged attack on hoaxes, clickbait, spam and low-quality sites that follows criticism that Facebook didn’t stop fake news from influencing the 2016 presidential election. By cutting off traffic to spam sites, Facebook can choke off the revenue that sustains bad actors who spread misinformation for profit or political motives.

In a recent study of 4 million posts by more than 450 Facebook Pages spreading hyperpartisan political news, BuzzFeed concluded that “Publishers are obsessed with Facebook’s algorithm changes and with avoiding getting caught up in the social network’s stepped-up initiative to reduce clickbait and misinformation in the News Feed.”

Cloaking isn’t just a Facebook problem, though. That’s why Facebook plans to work with other tech companies to share strategies for defeating cloakers. Facebook tells me these conversations with the industry about addressing the problem collectively are still in their early days. But if it shares cloakers’ fingerprints the way it already does to thwart uploads of terrorist content and child pornography, Facebook could use the experience it gains at its massive scale to help protect other major platforms across the internet.