The concept of artificial intelligence has been around for a long time.

We’re all familiar with HAL 9000 from 2001: A Space Odyssey, C-3PO from Star Wars and, more recently, Samantha from Her. In written fiction, AI characters show up in stories from writers like Philip K. Dick, William Gibson and Isaac Asimov. Sometimes it seems like it’s touched on by every writer who has written sci-fi.

While many predictions and ideas put forward in sci-fi have come to life, artificial intelligence is probably the furthest behind. We are nowhere near true artificial intelligence as exemplified by the characters mentioned above.

Sometimes it seems like we’ve been waiting forever. We can ask Siri or Google or Cortana simple questions and they will answer, but everyone who’s used that technology eventually comes away disappointed. We thought Siri was the future when it first came out, but these days, most of us barely use it beyond simple Google searches and dead simple tasks, like setting timers.

http://imgs.xkcd.com/comics/ai.png

The reason these software programs leave so much to be desired comes down to language. This is where natural language processing (NLP) comes into play. Artificial intelligence can grasp the meaning of simple language, and speak back to you, but it is limited by its literal interpretations of our questions. A computer can know the definition of a word, but it doesn’t understand the meaning of words within a larger context.

If you’re interested in tech or sci-fi, you’ve probably heard of the Turing test. Alan Turing was one of the first people to take the potential of AI seriously, and he believed that machines would one day match human intelligence. He had an idea for a simple test: If a human can’t distinguish between a machine and another human in conversation, then the machine has reached the level of human intelligence.

The Turing test is a bit more complicated than that, but the concept is still useful as a benchmark for natural language processing. In other words, if a machine can think like a human, it can process language like a human. (Given the complexity of the human brain, a machine able to think like a human will be a huge accomplishment.)

Think of Scarlett Johansson’s character Samantha in Her. She’s a great example of AI that can understand language fluently. She understands everything said by Theodore, played by Joaquin Phoenix. There are a few things she doesn’t know, but once they are explained to her, she understands immediately and incorporates them into her existing knowledge. Just like a human would.

The replicants in Blade Runner are another interesting form of AI. Not only do they process language easily, they’re even poetic. Consider this quote from the replicant Roy Batty:

“I’ve seen things you people wouldn’t believe. Attack ships on fire off the shoulder of Orion. I watched C-beams glitter in the dark near the Tannhäuser Gate. All those moments will be lost in time, like tears…in…rain. Time to die.”

It’s a famous line because it’s so beautiful, and so human. Do we want androids who spout poetry? Do we need them? That’s a topic for a sci-fi story, but the fact remains that Roy has a thorough understanding of language, and the emotion that comes with it.

These types of AI are common throughout science fiction, and have been for decades. But we’ve failed to deliver on that. The more we’ve learned about how to build true AI and NLP, the more we’ve realized that we know next to nothing. This is largely because we understand next to nothing about the human brain. We haven’t been able to build anything that thinks like a human because we have no idea how the human brain thinks.

At this point we’ve distinguished three levels of AI. I can’t put it much better than Tim from Wait But Why, so I’ll quote him here:

AI Caliber 1) Artificial Narrow Intelligence (ANI): Sometimes referred to as Weak AI, Artificial Narrow Intelligence is AI that specializes in one area. There’s AI that can beat the world chess champion in chess, but that’s the only thing it does. Ask it to figure out a better way to store data on a hard drive, and it’ll look at you blankly.

AI Caliber 2) Artificial General Intelligence (AGI): Sometimes referred to as Strong AI, or Human-Level AI, Artificial General Intelligence refers to a computer that is as smart as a human across the board — a machine that can perform any intellectual task that a human being can. Creating AGI is a much harder task than creating ANI, and we’re yet to do it.

AI Caliber 3) Artificial Superintelligence (ASI): Oxford philosopher and leading AI thinker Nick Bostrom defines superintelligence as “an intellect that is much smarter than the best human brains in practically every field, including scientific creativity, general wisdom and social skills.”

Something I heard a while back that has stuck in my head is that humans are capable of calculating physics and trigonometry on the fly. When a football is flung into the air, we can tell when and where it will land; quarterbacks can tell as they throw the ball. They make complex calculations and apply them to their physical movement. When you think about it, it’s incredible. And we have no idea how we’re able to do this.

Donald Knuth, a computer scientist and professor emeritus at Stanford, once said, “AI has succeeded in doing what requires thinking but nothing that we do without thinking.” That’s really what this all comes down to, because we don’t understand how the human brain processes things without thinking, and that includes language. When listening or reading in a language in which we’re fluent, we don’t think about processing the words. It just happens.

So how do we develop AI that can do things we don’t even understand? That’s what giants like Google and Palantir and many startups, including X.ai, MetaMind, Feedzai, Signal n, Lilt and many, many others are working on.

We’ve tried a few ways to move past this roadblock.

Imitate evolution

Although we don’t know much about how the human brain works, we know a bit more about how it got to this state: natural selection. So some people are trying to artificially replicate natural selection with machines — although it won’t take millions of years, because it’s less random.

It’s called evolutionary computation, and genetic algorithms are its best-known form. A population of candidate programs attempts a task; through trial and error, the most successful candidates are combined with one another to produce the next generation. But it’s an iterative process, which presents a problem: We don’t know how long it will take to create intelligence equal to our own.
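As a rough illustration of that trial-and-error loop, the sketch below evolves a list of bits toward a toy goal (as many 1s as possible). Everything in it is an assumption made for the example: the function names, the task, the population size and the mutation rate are hypothetical, and it is nowhere near the scale of the systems researchers actually built.

```python
import random


def fitness(genome):
    # Toy task (an assumption for this sketch): more 1 bits is "better".
    return sum(genome)


def crossover(parent_a, parent_b, rng):
    # Combine two successful candidates at a random cut point.
    cut = rng.randrange(1, len(parent_a))
    return parent_a[:cut] + parent_b[cut:]


def mutate(genome, rng, rate=0.02):
    # Flip each bit with a small probability to keep some randomness.
    return [1 - bit if rng.random() < rate else bit for bit in genome]


def evolve(genome_len=20, pop_size=30, generations=60, seed=0):
    rng = random.Random(seed)
    population = [
        [rng.randint(0, 1) for _ in range(genome_len)] for _ in range(pop_size)
    ]
    history = []  # best score seen at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        # The more successful half survives unchanged and becomes the parents.
        parents = population[: pop_size // 2]
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    population.sort(key=fitness, reverse=True)
    history.append(fitness(population[0]))
    return population[0], history


if __name__ == "__main__":
    best, history = evolve()
    print("best score per generation:", history)
```

Calling `evolve()` returns the best candidate found and the best score in each generation. Because the top half of the population survives untouched, that score never goes down, but nothing guarantees how quickly it climbs, which is the open-ended timing problem described above in miniature.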

So far, this method has proved unsuccessful and it was mostly abandoned in the 1990s.

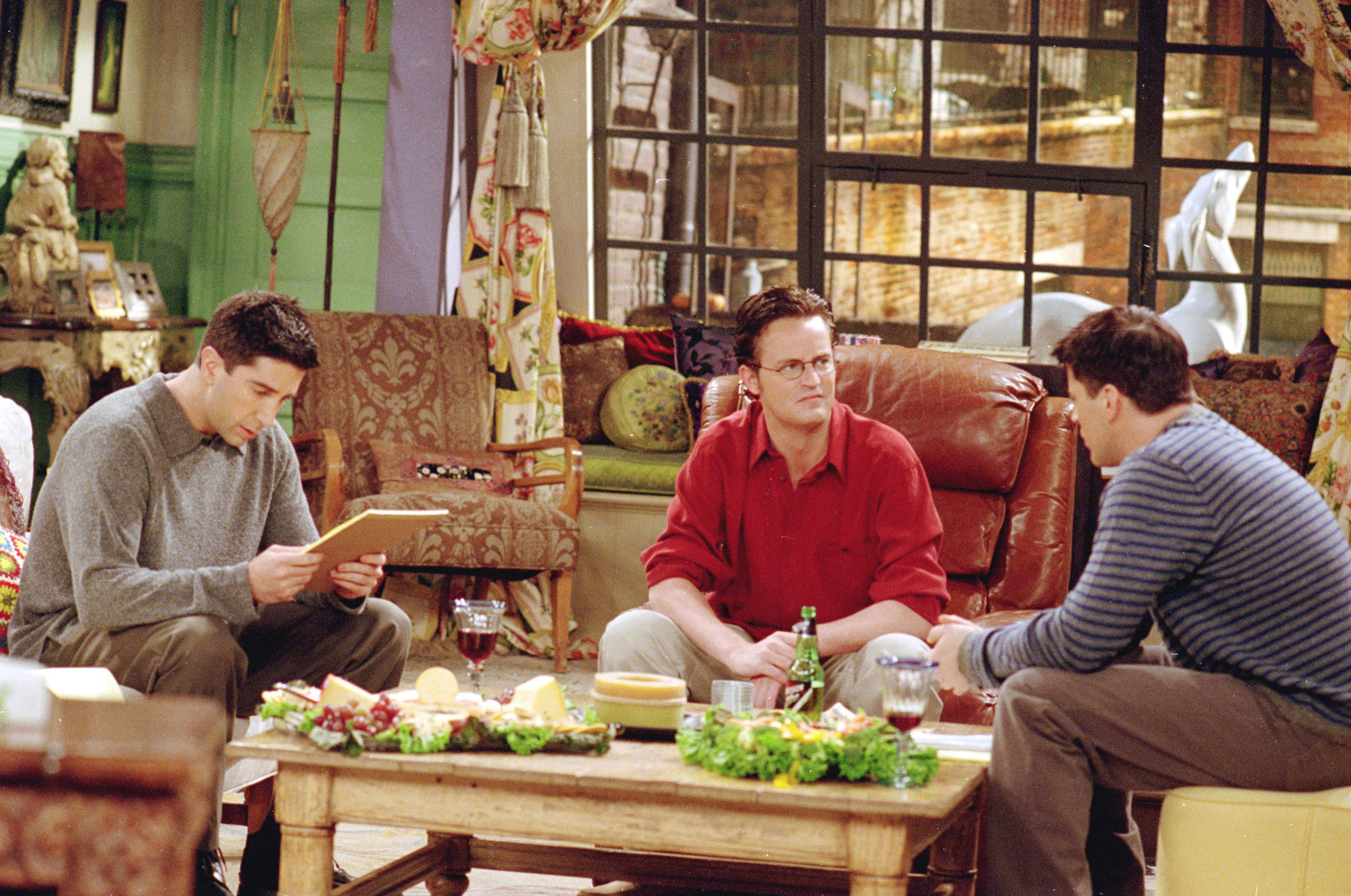

Image: Warner Bros. Television

Let nature inspire us

Our brain is a biological neural network, so companies are building artificial neural networks. They’re trying to replicate the way the brain processes information by learning through trial and error which neural pathways lead to the right answer. In reality, artificial neural networks have much less in common with biological brains than the name might indicate. Artificial neural networks are a rough mathematical model, a draft, inspired by the little we know about the brain.
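To make that kind of trial-and-error learning a little more concrete, here is a minimal sketch of a single artificial neuron (a perceptron) learning the logical OR function. It is a toy under stated assumptions, not how the large networks mentioned in this article are built: the names `train_perceptron`, `predict` and `OR_SAMPLES`, the learning rate and the number of passes are all made up for the example.

```python
# Each sample is ((input_1, input_2), expected_output) for logical OR.
OR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def predict(weights, bias, x1, x2):
    # The neuron "fires" (outputs 1) when the weighted sum is positive.
    return 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=0.1):
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            # Trial: make a guess with the current connection strengths.
            error = target - predict(weights, bias, x1, x2)
            # Error: nudge each connection in the direction that reduces it.
            weights[0] += learning_rate * error * x1
            weights[1] += learning_rate * error * x2
            bias += learning_rate * error
    return weights, bias


if __name__ == "__main__":
    weights, bias = train_perceptron(OR_SAMPLES)
    for (x1, x2), target in OR_SAMPLES:
        print((x1, x2), "->", predict(weights, bias, x1, x2), "expected", target)
```

Nothing in the code knows what OR means. It only strengthens or weakens connections after each wrong guess, which is also why the resemblance to a biological brain is loose at best.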

Nonetheless, people are doing some crazy stuff with neural networks. Perhaps the most fun, or silly, application of the technology recently made the rounds online. A man named Andy Herd fed all of the scripts from the TV show Friends into a recurrent neural network. It was able to learn the style of the writing and the characters’ personalities and spit out scripts of its own.

They are pretty ridiculous, and don’t make too much sense, but the fact that it’s able to do this at all represents a huge step forward from where we were just a few years ago. And through machine learning, the AI will continue to get better. But at this point, it’s captured the spirit of Chandler, at least: “Chandler: (in a muffin) (Runs to the girls to cry) Can I get some presents.” Anyone who’s watched Friends knows this is classic Chandler, even if the narrative is… nonsensical, to say the least.

Andy used Google’s open source TensorFlow machine learning software library to build his hilarious and important script generator. Google has built it into many of their products, from Photos to Search to Gmail, and obviously Google Now, an app that essentially takes everything Google knows about you and uses it to provide helpful and relevant information. It’s also where the Google version of Siri lives.

Deep learning has massive potential to revolutionize AI and help get us to the next step. But there are other solutions people are working on.

Image: Warner Bros. Entertainment

Make the machines design themselves

It’s clear that replicating human intelligence is not easy, and no one knows if our other methods will work at all, or do it in a reasonable amount of time. So some people want to design machines to make themselves smart, by researching, learning and revising themselves. This is seemingly how Samantha from Her works. She is capable of learning just like a human is, although much faster.

At the beginning of the movie, Theodore needs to teach her a lot. But by the end, she has blown past him intellectually. It’s an exponential process. In layman’s terms, the more she learns, the more she is able to learn, and so on. Perhaps this will lead to radically new types of intelligence, created by machines rather than humans.

This brings us to the concept that artificial intelligence depends on: Moore’s law, the observation that the number of transistors on a chip, and with it computational power, doubles roughly every two years. Talk about exponential power. While the growth rate has started to slow, it’s still advancing exponentially. The effect already shows: deep learning was around in the 1970s, but the exponential increase in computational power and data was largely responsible for the breakthroughs we are experiencing now.
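The arithmetic behind that kind of growth is easy to check. Here is a minimal sketch, assuming a clean doubling every two years (real hardware has never followed the curve this neatly, and `growth_factor` is a hypothetical helper name):

```python
def growth_factor(years, doubling_period_years=2.0):
    """Return how many times capacity multiplies over `years`."""
    return 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    for years in (2, 10, 20, 40):
        print(f"{years:>2} years -> {growth_factor(years):,.0f}x")
```

Under that assumption, ten years gives a 32-fold increase and forty years gives roughly a millionfold increase (2 to the 20th power), which is the gap between an idea that exists on paper and one that is practical to run.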

This is similar to Facebook’s new M service that lies within the Messenger app, aiming to be your personal assistant. Facebook says that M can do anything a human can — and that’s because their software is working with real humans. After all, AI isn’t capable of calling a restaurant and making a reservation, but the humans on the other side can. When you make a request, if M can’t do it alone, it sends the message to a Facebook contractor and, as they work with the software, the AI learns. M isn’t available to the public yet, but it looks like it has a lot of potential.

Facebook is all in on AI. They’re developing a lot of different technology (like a feature that identifies what is in photographs so blind people can “see” them), but one of the coolest is their attempt to solve the “understanding” part of natural language processing. As we touched on before, AI isn’t yet capable of reading or listening like a human can — it only knows specific things. It knows what a word or sentence means, but it can’t summarize a paragraph.

So Facebook is trying to tackle this. Last year they showed off some cool software. They fed in a synopsis of The Lord of the Rings and the AI was able to answer some questions that look straightforward to us, but are very complex for a computer to answer.

But one of the coolest applications of natural language processing is coming from Microsoft: they recently pushed out a feature in Skype that translates on the fly. So you can be on a call with someone who speaks another language, and Skype will translate it for you (almost) immediately.

This is huge for global commerce, and society in general. Without language barriers, imagine how much more productive we could be, or how many people we could learn from or talk to that we previously couldn’t, or how much more successful global businesses could be — especially smaller companies that can’t afford a large staff of translators.

Without language barriers, the world opens up, especially to those who don’t have the privileges of people in first-world countries.

There’s a long way to go before computers can understand language. Every language is complex, with subtleties, dialects, slang, implications, emotion, tone, narrative and context, all of which are hard for machines to understand. While software like TensorFlow and CNTK is a massive step forward, we need human interaction to get there.

And we will get there, but it will take at least 15 years. Samantha from Her, HAL from 2001, C-3PO from Star Wars — and all the other artificial intelligence we’ve been promised — are inevitable. It doesn’t need to be presented physically, as an android or robot. But it has to think like a human. Breaking open the language barrier will blow the potential of AI wide open. Until then, AI and humans working together is the best way to reap the benefits of existing technology. We don’t have to wait. We can use AI to change the world right now.

Illustrations: Bryce Durbin
