When YouTube first launched its dedicated “Kids” app earlier this year, parents welcomed the opportunity to keep their children away from the more adult content found on the larger YouTube network, thanks to the new app’s curated selection of kid-friendly videos. But the app soon came under fire from consumer watchdog groups who claimed YouTube was not doing enough to protect kids from inappropriate content, including references to sex, drugs, alcohol and more.

Now, YouTube is beginning to address this problem with an update that will help parents better restrict the type of content a child can access on YouTube Kids.

This feature and more, including Chromecast and Apple TV support as well as guest-curated playlists, will arrive in a new version of the app due in a few weeks’ time, says YouTube.

The problem YouTube Kids means to address was significant.

Children today regularly visit YouTube for things like educational videos, cartoons, music videos, web series, and much more. But because the site lacked easy-to-implement parental controls, kids would often stumble upon content, through YouTube’s recommendations or via search, that was not exactly “kid-safe.” For example, an innocent search for Elmo videos could see kids ending up on a video of Elmo cursing.

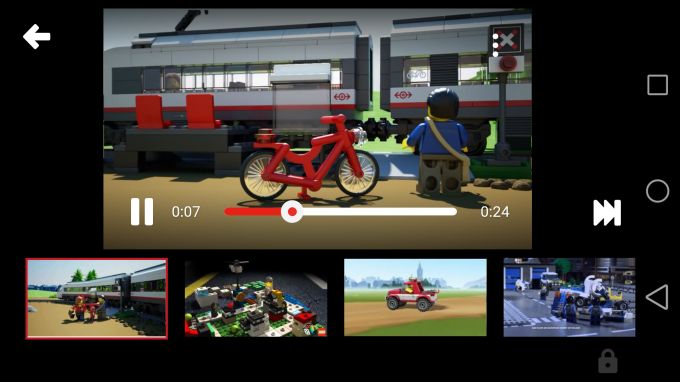

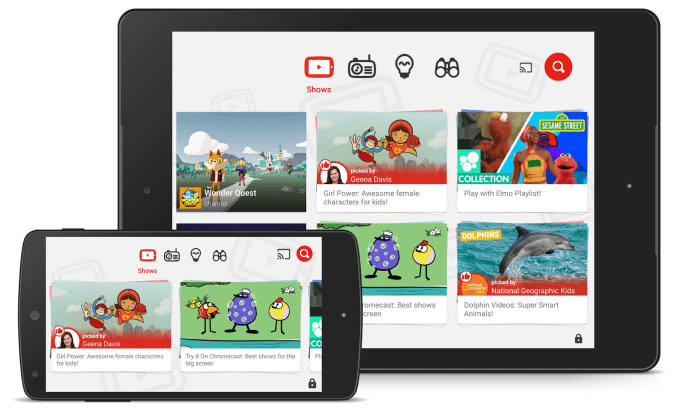

Instead, with YouTube Kids, the idea was to offer a simplified user interface for interacting with YouTube where videos were vetted and curated into high-level categories like “Shows,” “Music,” “Learning,” and “Explore.”

Developed after consultation with Common Sense Media, ConnectSafely.org, the Family Online Safety Institute, the Internet Keep Safe Coalition (iKeepSafe) and WiredSafety.org, YouTube Kids’ app is filled with content from well-known children’s entertainment brands like DreamWorks TV, Jim Henson TV, Mother Goose Club, Talking Tom and Friends, National Geographic Kids, Reading Rainbow, and Thomas the Tank Engine, plus other shows from YouTubers like Vlogbrothers and Stampylonghead.

Dealing With Inappropriate Content

But the problem with the app – and one which led to FTC complaints – was that YouTube Kids offered a search feature that could lead kids to videos that hadn’t been explicitly included and organized into the app’s various sections. While that option could be disabled in parental controls, it seemed that many didn’t know it existed.

Two consumer groups, the Center for Digital Democracy and the Campaign for a Commercial-Free Childhood, teamed up to show how that search feature could be used to point kids to very inappropriate videos.

They used the app to find videos that included sexual language; unsafe behaviors (like playing with matches or juggling knives); profanity (e.g., in a parody of the film “Casino” featuring Bert and Ernie from Sesame Street); adult discussions about family violence, pornography, and child abuse; jokes about drug use and pedophilia; and alcohol ads.

YouTube today says it will make a change to YouTube Kids that will now explain to parents how videos are chosen and how they can be flagged, and it will force parents to make a decision about how much access they want their child to have. In short, parents will be asked to either enable or disable the search feature. When disabled, kids will be restricted to a more limited set of videos in the app.

Also new is the ability for parents to set a passcode instead of a spelled-out code.

However, as YouTube reminds parents in a blog post, “no system is perfect,” and parents should flag any concerning videos that slip through. Flagged videos are manually reviewed 24/7, and any that shouldn’t be included are removed within hours.

The larger issue at hand is that YouTube houses a lot of content, and its catalog grows at an incredible pace with hundreds of hours of video being uploaded every minute. That means that YouTube can’t filter all the content for the Kids app by hand – instead, it uses a combination of algorithmic filtering, user input and human review. And without 100 percent manual curation, bad videos can slip through.

That means simply installing the “Kids” version of YouTube on your mobile device will never be a replacement for actual parental supervision. It’s just an improvement over the main YouTube app.

YouTube Kids And Ads

Though not mentioned in today’s post, YouTube is taking a stand on another issue raised by the consumer groups in their complaints to the FTC – advertising. The groups said YouTube was mixing content and ads in a way that was deceptive to children. In particular, the groups were concerned with paid endorsements where the relationship between a video’s star and product manufacturer was not disclosed.

YouTube’s position is that paid ads are allowed in the app and they will be marked as such. But any video uploaded by a user is not considered an ad and won’t be subject to YouTube’s advertising guidelines. That, we understand from those familiar with the situation, means the user videos can include “certain commercials and other promotional materials.”

For example, a search for “cookies” on the app may show a television commercial from a cookie company on a user’s channel – but YouTube would not consider this video a Paid Ad and it would not be subject to YouTube’s Ads policies.

The only requirement for the user videos is that they’re kid-friendly.

That being said, creators are asked to disclose whether videos are paid endorsements, and those that are get excluded from the YouTube Kids app as a matter of policy (if not practice). Creators are also pointed to a page that details how such videos should be labeled.

We understand the FTC investigation into the complaints against YouTube is ongoing but are waiting to hear back on the detailed status.

Alongside its response to these more serious issues, YouTube today is also promoting a handful of other features in the updated app. These include support for watching YouTube Kids on TV via Chromecast, Apple TV, game consoles and smart TVs, as well as new guest-created playlists from National Geographic Kids, Kid President, actor Geena Davis (a girl power-themed playlist), creators including Vsauce and Amy Poehler’s Smart Girls, and others.

The company says the app has seen over 8 million downloads since its February launch.