Amazon has been amassing computer vision expertise for a long time. And continues to do so. A LinkedIn search for computer vision jobs at the company currently returns more than 50 posts — mostly split across research and software engineering roles. But is there more than meets the eye to the ecommerce giant’s interest in technologies that can sense the world around them?

Amazon’s nascent drone delivery program, Prime Air, is one clear driver to hire scientists with a grounding in machine learning and computer vision, since drones need to navigate in real world environments. And if you’re chasing a dream of autonomous delivery drones, as Amazon is, then accurate object detection and dynamic collision avoidance are essential.

Likewise there’s Amazon’s thus far ill-fated foray into smartphones, with last year’s debut Fire Phone. The handset’s flagship feature was a 3D interface that uses data from four front-facing cameras to generate three dimensional effects on screen, computed by figuring out where the viewer’s gaze is falling.

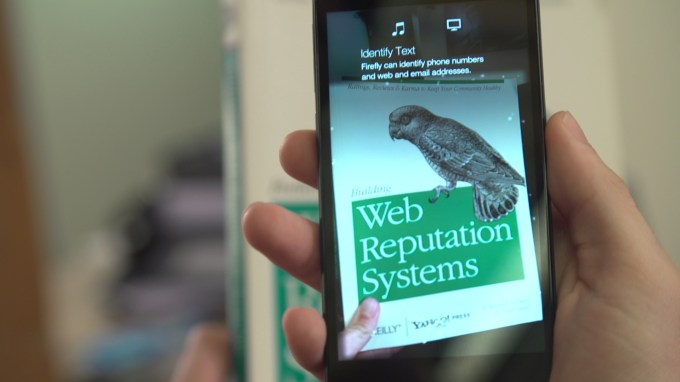

The device also has a button-triggered barcode- and object-scanning feature, called Firefly, that lets you use the phone as a sort of ‘ecommerce wand’ — with its rear camera becoming an eye for identifying stuff in the real world (again powered by computer vision).

After which it’s but a single tap to buy the thing you’ve just IDed. Which of course remains the priority for the ecommerce giant, in lock step with expanding its services to provide ever more incentives to keep people shopping on Amazon.com.

At a basic level computer vision refers to the research field looking for ways to furnish computers with the ability to process and understand visual data — much like the human brain interprets light taken in through the human eye. Add in machine learning to CV systems and you have an adaptive algorithm that can interpret more dynamic visual data, such as Microsoft’s Kinect sensor being able to recognize all sorts of people’s body postures, for instance. Or Google’s driverless cars being able to autonomously navigate in various environments and road conditions.

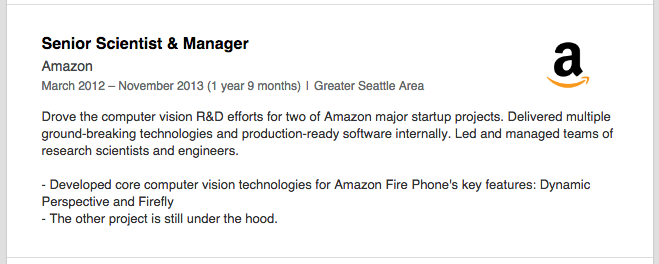

One intriguing detail (screengrabbed below) on the LinkedIn profile of a former Amazon senior scientist, who writes that he worked on Firefly before leaving the company in November 2013, points to a “major” computer vision project that Amazon still has “under the hood”:

Meanwhile Amazon’s relatively new CTO of devices, Jon McCormack, formerly VP of engineering for Yahoo, who joined the company in March this year, has a more generic note on his LinkedIn profile — saying he’s working on “building products that will amaze and delight you”. Products, plural.

TechCrunch understands the company has contacted at least one U.K. startup applying computer vision tech to the fashion ecommerce space, although it’s not clear at this stage what its intentions in reaching out are: whether it’s seeking to establish partnerships or sniffing around for acquisition potential, perhaps as a way to fill some of its outstanding computer vision vacancies.

Beefing up its drones expertise remains a likely possibility, especially given that Prime Air drone tech is a focus for Amazon’s Cambridge, U.K.-based R&D lab, as we reported last November. That lab is staffed with many ex-Broadcom engineers, hired by Amazon after Broadcom closed its Cambridge-based image processing department. And high end computer vision smarts would certainly be needed to perform delicate drone delivery operations autonomously. But Prime Air is definitely not an Amazon project that’s still under the hood. Jeff Bezos himself went on prime time U.S. TV to unbox his vision for autonomous drone delivery back in December 2013.

So, drones aside, what else might Amazon be looking at disrupting with computer vision tech?

Put an eye on it

Another existing product that stands out to me as having potential here is Amazon Echo: its connected-speaker-plus-voice-assistant (Alexa) which can fetch info and respond to various commands, as well as controlling some other smart home devices.

Given that the first generation Echo does not include a camera, any future version that did would effectively be something rather new and different. A connected speaker with a brain, an ear and an eye, as it were…

Amazon Echo is interesting for several reasons. Firstly it seems to have been something of a hardware success for the company — at least compared to the Fire Phone. It’s also not really a product that competes directly with any rival gizmos at this point. Or none with mass market penetration as yet. And it stands out by being different, while the opposite is true in smartphones where Amazon faces an uphill climb to make any sort of mark.

The Echo is also interesting because of where it lives. It’s a product for the home, perhaps typically sited somewhere in the living room. Maybe next to the user’s TV, within earshot of their couch. It’s in a prime location, as it were, for acting as a voice-activated device that can stream media content to another screen (say a TV or the user’s smartphone).

Now imagine adding a camera to the Echo: it could also show the user real-time video of themselves and their living room. Why is that interesting? Augment those real-time visuals with digitized products from Amazon’s online marketplace and the Echo could be the bridging tech to unbox Amazon’s virtual marketplace, and bring digital try-ons and visualizations right into shoppers’ homes. Just say ‘Alexa, show me wearing the red dress’ and let computer vision tech do the rest…

Reducing return rates by helping consumers visualize what suits and fits remains a persistent challenge for ecommerce, especially when it comes to sartorial purchases. Fashion is fickle and fit is tricky.

Multiple startups are playing in the virtual fitting room space trying to fix this problem, with lots of different approaches in play: from the freshly Rakuten-acquired Fits.me’s robot mannequins, which adjust to a consumer’s size to indicate how clothes might look, to eBay-owned PhiSix, which developed 3D clothes modeling tech, to London-based Metail, which creates photorealistic 3D body models of shoppers from 2D photographs and digitizes clothes so consumers can virtually try on retailers’ products on a digital likeness of themselves.

Given that other online marketplace giants like eBay and Rakuten are getting involved in the virtual fitting room space it’s surely only a matter of time before Amazon makes its own play.

What’s better than a likeness? The real deal of course. Augmenting live video of a person with digitized clothes or accessories is certainly a technical challenge if you want it to look really slick. But it doesn’t require big new technological leaps (the challenge is more about graphical processing power on the back end, and software to render and model fabrics/materials moving in a realistic way). Indeed, virtual try-on by webcam exists already for more static products like glasses. And Amazon could always implement this sort of feature in staggered stages, say with more static products such as accessories or furniture available for try on/visualizing first.

It could even offer the option without adding a camera to Echo — say by partnering with a startup that already creates 3D body models for fashion shopping, and going down the virtual try-on via digital likeness route. But using any kind of digital mannequin, however realistic, as a stand in for the consumer still puts a layer between shopper and product. A really personalized virtual shopping experience would be something that is both more realistic and more interactive. In other words a combination of real and digital. So blended virtual reality/AR starts to look like it could be a compelling fit for ecommerce.

“The technology already exists to do that. And actually in quite low power form,” says Oliver Woodford, when I sketch out the scenario of an Echo with a camera used as the bridge to enable Amazon shoppers to try on digitized 3D products. “I think all of the technologies required to do this exist already; it’s a question of putting them all together and packaging them up nicely.”

“If you’re looking at turning your living room into a marketplace, essentially you want to place products into that area and that’s exactly what [Microsoft] Hololens does. It computes the geometry of your room in real time and it projects things virtually into that room. Microsoft have this technology already,” he adds.

“The real-time bit is the tracking, and that’s what [Microsoft] Kinect can do already… I think the technology behind Kinect is known and certainly I think it would be possible for Amazon to replicate that relatively straightforwardly.”

Woodford has a PhD in computer vision-related research and is currently senior scientist for the 3D image capture app Seene, having previously worked for Toshiba and Sharp on different applications of computer vision. Talking about power requirements, he notes that Seene is using smartphone hardware to compute and capture 3D imagery.

“We are giving people the power to capture the world in 3D on their phone. In particular at the moment we’re working on capturing their own face which they’ll then be able to use in videogames and virtual environments,” he says, adding: “Phones are becoming ever more powerful. And in particular if you can harness the power of their GPUs they’re extremely powerful.”

Undoubtedly, the processing complexity could step up pretty steeply if the aim is to accurately model fabric drapery in 3D, in real-time on a moving body. But Amazon has plenty of back-end processing power at its disposal, via its AWS cloud computing platform. It also has a whole lot of computer vision expertise on staff.

Woodford says Amazon has amassed “a huge amount of expertise” within its R&D labs and at AWS. “They keep providing new technologies, like machine learning tool boxes,” he notes. “So they’ll certainly have a great deal of expertise to put this stuff together, and if not buying a small startup with the expertise is something they’re used to doing.”

What about timeframes? The product development process for Microsoft’s Kinect sensor took around four to five years from conception to shipping a consumer product. The computer vision field has clearly gained from a lot of research since then, and Woodford reckons Amazon could ship an Echo sensor in an even shorter timeframe — say, in the next two years — provided the business was entirely behind the idea and doing everything it could to get such a product to market.

Perhaps the bigger challenge to unboxing Amazon’s marketplace in shoppers’ homes via the power of computer vision is not so much developing effective sensing and modeling tech, but rather having enough digital inventory that can make use of it. After all, Kinect has arguably been held back by the limited quantity of games and other content that makes effective use of its body-tracking abilities. But again startups are already working on streamlining the processes of bringing products like clothes to life in digital 3D and reducing the costs involved in turning a real-world object into a 3D model.

“There are lots of businesses working on that problem, actually,” says Woodford. “It’s scalable from a software perspective, but it’s the hardware that you want to be cheap in order to be able to capture lots of products.”

While some startups are using “relatively expensive” dedicated camera set-ups to digitize products, Woodford notes other companies, including Seene, are just using mobile phones to do this. “There are a couple of startups… they sell technology to virtualize your store. So you just go round with a camera and you can capture the products,” he says. “And obviously Seene technology, we’re working on capturing the world in 3D on your mobile phone. So that’s a way that businesses on the Amazon marketplace can get their products in 3D into the marketplace very cheaply.

“So I guess Amazon would look at a range of technologies, depending on how expensive your item is and how much you want to spend digitizing it. You can get higher or lower quality models, using bespoke hardware or a mobile phone.”

Glasses vs screens

Another reason an Echo with an eye is interesting is that it could offer an alternative delivery mechanism for virtual reality — one which might have more mass market appeal because it does not require the user to relinquish control over their visual environment by donning a pair of connected specs.

Google Glass’ failure to gain consumer traction is hardly surprising. The product hasn’t gone away entirely but Google now looks to be pushing it in a more niche, enterprise-focused direction. (Amazon has also patented a pair of smart specs, although this could be a defensive punt, or it could have an enterprise use-case in mind, given its own warehouse staff need to quickly identify and pick products off shelves.)

Mountain View is also the lead investor in stealthy AR startup Magic Leap, which is building some kind of lightweight wearable to give what appear to be mostly gamers a blended view of interactive virtual content in their real-world environment.

And Microsoft has its eye on this space too of course, as noted above, with HoloLens, the AR headset it unboxed earlier this year. Meanwhile, there are scores of companies building fully immersive VR headsets that remove the wearer from their real world environment entirely — a la Facebook’s Oculus Rift. But if Google Glass was too nerdy for the general public, a fully immersive headset isn’t going to win more friends in the mainstream. None of these reality disrupting headsets has a hope of being as popular and mainstream, in terms of mass market adoption, as the multifaceted smartphone.

On the surface it may seem like a subtle distinction to make but, when you really think about it, there is a world of difference between entirely immersing a person within a digitally augmented environment by requiring they wear a piece of technology on their face vs empowering a consumer to be able to control augmented reality views on a screen they can choose to look at — as a camera-equipped, voice-controlled Echo could.

Add to that, glasses are inherently about looking outwards, away from the body; whereas an in-home, remote-controlled camera offers the ability to survey the self, reflecting how a person looks back to that same person like a high-tech mirror. (Two words to underline the sizable consumer appetites for doing that? Selfie stick…)

Magic Leap’s future wearable purportedly involves shooting lasers directly onto the retina, suggesting the user may not even have to look through a lens to get their vision augmented (although, presumably, they’re still going to need to wear a contraption close to their eyes to house the lasers). Such a method may be fiendishly clever but it also removes the user’s agency entirely, given that there’s literally no air-gap between them and the technology (short of them ripping the contraption off their face).

There will be a group of people who really dig that idea, and — I’d wager — a much larger group that says ‘not for me thanks’. So if virtual reality is going to be more than a niche plaything for geeks and gamers, it’s going to need to be applied in a way that’s both useful and does not feel personally or socially alienating. Using the tech as a reflective enabling layer for ecommerce to personalize and improve the online shopping experience certainly feels like a more mainstream application for AR/VR, than blasting zombies at the office.

Who’s watching who?

That’s not to say there aren’t concerns attached to the notion of allowing Amazon to have an eye into your living room. If VR headsets are nerdy, a connected camera looking right at you and your stuff could be very, very creepy. Just look at the furore, earlier this year, over the wording of a privacy policy for a Samsung connected TV which people dubbed ‘Orwellian’. An Echo with a connected camera would certainly need clear user controls and robust privacy safeguards built in to avoid being perceived as overly invasive.

During our conversation Woodford himself asks to what extent I think Amazon having “a device in your home looking at you, watching what you’re doing, would be creepy”. And I have to agree it wouldn’t be something I’d be rushing to sign up for. But the general utility and convenience of a device that could cut some of the guesswork out of online shopping may make it easy for some consumers to turn a blind eye to inviting Amazon to peek into their homes, at least when they want it to. Connected technologies typically entail some kind of trade off between convenience and privacy. And plenty of people continue to be wowed by the former, apparently enabling them to set aside worries about how much personal data they’re giving up to use a particular service.

That said, a sensing eye is a very powerful thing. One possible scenario sketched by Woodford is that in-home computer vision hardware could also be used to analyze the mood of the person it’s looking at — and use those emotional observations to tailor product and service recommendations based on how it thinks the person is feeling. “Amazon’s Echo plays you music. Would it be able to play you music to suit your mood?” he posits. “In the future if it can see what you’re doing, and see what you look like — and the expression on your face — it’ll be able to tailor the music to suit your mood. That would be quite an advancement I think.”

“If you have a high enough face image you can compute someone’s emotive state. In fact there’s already technology to do that on a mobile phone by a company called Affectiva,” he adds.

Now things are either getting really interesting, or really dystopic, depending on your view. That kind of ‘emotionally intelligent’ connected tech could, for example, be used to target shopping recommendations based on when it thinks someone might be in a “shopaholic mode because you’re feeling down”, jokes Woodford. At which point the Echo owner might find themselves questioning Alexa’s motives for piping up to offer them a little retail therapy at that particular moment in time.

One thing is clear: fashion ecommerce is a huge focus for Amazon. Just this week the company opened a new fashion photography studio in London, which will doubtless be used to smarten up the quality of the 2D imagery of products sold on its marketplace. But a dedicated studio full of high end photography kit could also be deployed for digitizing 3D models of clothes to power a next-gen, augmented reality-enabled clothes buying experience on Amazon.com.

The so-called ‘Everything Store’ is definitely in ecommerce for the long haul. Almost a decade ago, Bezos himself was quoted as saying Amazon needs to learn how to sell clothes and food in order to become a Walmart-sized $200 billion company. And, well, no one needs to know what a carrot looks like before they buy it; they’ll take it on trust that the grocery delivery service will send a fresh one. But a carrot-colored shirt? That’s a whole different kettle of fish.

Last word: In its Q2 earnings this week Amazon’s value pushed north of $250 billion — some $20 billion more than Walmart. Don’t expect Bezos to be content to stop there though. Amazon’s vast vision really is about flipping commerce on its head, and bringing the fruit market, the high street and the changing room — the whole kit and caboodle — into the living room.