At the core of Google’s freshly announced experimental Project Tango smartphone platform is a vision processor called the Myriad 1, made by chip startup Movidius, which is led by CEO Remi El-Ouazzane. The chip is being used by Google’s Advanced Technology and Projects (ATAP) group, which Google kept when it sold Motorola, to give developers access to computer vision processing never before seen on a phone.

I’ve been talking to El-Ouazzane about the possibilities of the Myriad 1 and computer vision on mobile devices for some time. The way that low-powered computer vision systems like this will change the phones that we all use cannot be overstated.

The revolutionary part of the Myriad 1 vision processor? Power, pure and simple.

Most vision-processing platforms, like the PrimeSense chip inside Microsoft’s original Kinect, have a comparatively enormous power draw, usually over 1 watt. That is far more than a mobile device, where power is always at a premium, can afford. The iPhone’s battery holds around 1,500 mAh, nowhere near enough to keep such a chip running alongside everything else on the phone for any length of time. The Myriad 1 operates in the range of a couple hundred milliwatts, which makes putting this kind of chip in a phone possible.
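To put those power numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It converts the battery’s charge rating into energy and asks how long that energy would last if it went only to the vision chip. The 3.8 V nominal voltage is an assumption (a typical lithium-ion figure, not from this article), and the calculation ignores the screen, radios and main processor, so real-world runtimes would be much shorter.

```python
def runtime_hours(capacity_mah: float, nominal_volts: float, draw_watts: float) -> float:
    """Hours a battery could power one component by itself, ignoring everything else."""
    energy_wh = capacity_mah / 1000 * nominal_volts  # mAh -> Ah, then Ah * V = Wh
    return energy_wh / draw_watts


BATTERY_MAH = 1500  # iPhone battery capacity cited in the article
NOMINAL_V = 3.8     # assumption: typical Li-ion nominal voltage

for label, watts in [("~1 W vision chip", 1.0), ("~200 mW co-processor", 0.2)]:
    print(f"{label}: {runtime_hours(BATTERY_MAH, NOMINAL_V, watts):.1f} h")
```

On these assumptions, a 1 W chip would drain the entire battery in under six hours on its own, while a 200 mW part would take roughly five times as long.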

Putting 3D sensing on a phone has been impossible up until this point purely because of this power issue. Now, it’s a reality.

Perceptual Computing And Vision Processing, Sitting In A Tree

When I took a deep dive into Apple’s acquisition of PrimeSense, everyone I spoke to about 3D sensing on mobile devices put it years away because the power costs were too high. Movidius has leapfrogged ahead in the 3D-sensing market by producing a ready-to-wear chip with far lower power consumption: it delivers over 1 teraflop of processing power on only a few hundred milliwatts.
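The figure that matters for a battery-powered device is performance per watt. This small Python sketch divides the article’s “over 1 teraflop” claim by a few sample power levels; the specific wattages are assumptions standing in for “a few hundred milliwatts,” and the result describes a peak rate, not sustained real-world throughput.

```python
def gflops_per_watt(flops_per_second: float, watts: float) -> float:
    """Performance per watt, expressed in GFLOPS/W."""
    return flops_per_second / watts / 1e9


PEAK_FLOPS = 1e12  # "over 1 teraflop", as stated in the article
for watts in (0.2, 0.3, 0.5):  # assumption: sample values for "a few hundred milliwatts"
    print(f"{watts * 1000:.0f} mW -> {gflops_per_watt(PEAK_FLOPS, watts):,.0f} GFLOPS/W")
```

Even at the top of that assumed range, the chip would be delivering on the order of 2,000 GFLOPS for every watt it draws.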

This kind of low-power technology is integral to getting 3D sensing onto phones, and spatial and topographic contextual awareness is table stakes for the next generation of phones. Apple’s working on it, Google’s working on it and even Amazon is toying with it.

Perceptual computing covers the whole field of analyzing data captured by sensors and imaging systems such as cameras and infrared emitters and detectors. Computer vision is one component of the field; it lets devices ‘see’ the areas around them more like a human does, or better.

For more on what perceptual computing means for our smartphones, be sure to read that piece on Apple and PrimeSense. But for now, we’re interested in what Google’s ATAP group is doing with computer vision.

The Power Of Myriad

El-Ouazzane was a manager of Texas Instruments’ OMAP division, now shuttered. He and the team at Movidius, which was founded by three Trinity College students in Ireland, have been working on the chip for years. The company’s board is also rich with experience, including Dan Dobberpuhl, who co-founded PA Semi, the chip design firm Apple bought for $278 million and which formed the basis of its A-series processor efforts.

The Myriad 1 chip is based on a custom architecture. If you’re not familiar with the way chips are made, this is insane. Most companies work off a standard architecture, like Intel’s x86 or the ARM platform that Apple licenses for its iOS devices. Apple recently started designing its own custom cores around the ARM instruction set, but Movidius built its architecture from the ground up.

Essentially, El-Ouazzane and his team came to a point in the chip’s development where they had to fish or cut bait. They could base the design on an existing architecture and shop it out to other companies with access to foundries, or they could go full-tilt and build it themselves, free of the restrictions of existing chips. They chose the latter, raising around $50 million to date and going toe-to-toe with big boys like ARM and Qualcomm.

The Myriad 1 is a co-processor. You’ll be hearing a lot more about these kinds of sidecar chips as phones get more powerful and more power-hungry. Apple already uses one such co-processor, the M7, which collects motion data from the accelerometer, gyroscope and compass and reports it to the main A7 chip. This lets your iPhone keep recording health-related activity data without waking the main processor, which conserves a great deal of power.

The Myriad 1 works in a similar manner, processing vision data from the onboard optics and handling heavy calculations that the main CPU could only perform at a much higher power cost.
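Much of the saving from a co-processor comes from letting the main CPU sleep. Here is a minimal, generic Python sketch of that pattern: a hypothetical low-power hub buffers sensor samples and wakes the main processor only once per batch. The `SensorHub` class and its batch sizes are illustrative assumptions, not how Apple’s M7 or the Myriad 1 are actually programmed.

```python
from dataclasses import dataclass, field


@dataclass
class SensorHub:
    """Hypothetical low-power co-processor that buffers samples for the main CPU."""
    batch_size: int
    buffer: list = field(default_factory=list)
    wakeups: int = 0  # how many times the main CPU had to be woken

    def on_sample(self, sample) -> None:
        # The hub stores each reading cheaply instead of interrupting the CPU.
        self.buffer.append(sample)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        # Wake the main CPU once to hand over everything buffered so far.
        if self.buffer:
            self.wakeups += 1
            self.buffer.clear()  # stand-in for the CPU consuming the batch


if __name__ == "__main__":
    for size in (1, 100):
        hub = SensorHub(batch_size=size)
        for reading in range(1000):
            hub.on_sample(reading)
        hub.flush()
        print(f"batch_size={size}: {hub.wakeups} CPU wake-ups for 1000 samples")
```

With a batch size of 1 every reading wakes the CPU, while batching 100 readings at a time cuts that to 10 wake-ups; the design choice is trading a little latency for long stretches of CPU sleep.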

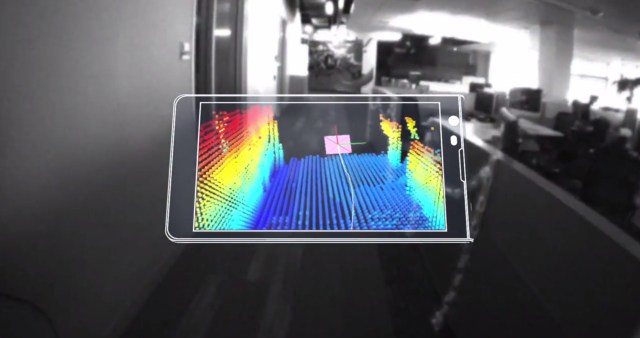

What does this mean in practical terms? A chip that allows the phone to do things like motion detection and tracking, depth mapping, recording and interpreting spatial and motion data. That power will be provided to developers in Google’s Project Tango program via APIs to let them integrate these things into image-capture apps, games, navigation systems, mapping applications and a lot more.

Movidius and Google have put the power of a Kinect-like system into a practical phone platform for the first time ever.

There are a variety of things that this could be used for, but one prime example is indoor mapping. A computer vision system is capable of reading not just the size and shape of a room, but also all of the items in the room and treating them as discrete objects.

El-Ouazzane says the Myriad 1’s strengths lie in three areas. First, it offers your smartphone “intelligent vision,” which can read and interpret the real world via the camera. Second, it’s hyper power-efficient, making these kinds of systems viable on mobile for the first time.

This makes it ideal not only for smartphones but also for wearable devices. The applications for computer vision inside a device like Google Glass, for instance, are pretty staggering. Following that thread a bit further, Google could let Glass wearers map indoor environments in 3D just by walking around and looking at things.

And third, Movidius is offering tools for developers to tap into the Myriad 1 and build new apps, which is pretty much the point of the new Tango platform. This is Google’s smartphone version of Glass: a platform developers can experiment on to determine what the next era of computing will look like.

The Myriad 1 handles hardware-accelerated computer vision, image processing and sensor data management inside Project Tango, but Movidius is only one of many partners that came together, led by the ATAP group, to make the phone possible.

“I think it is safe to assume Apple is looking to experiment with the question: What does a world look like when our device can see and hear us,” Creative Strategies analyst and Techpinions columnist Ben Bajarin told us about Apple’s PrimeSense acquisition. The same is true of what Google is doing in this space. It already has one of the most advanced contextual computing offerings in Google Now on Android devices, and adding computer vision to its toolkit will open up massive opportunities for mapping the world and using that information to make Google Now feel even more prescient.

For now, Project Tango will roll out to just a couple hundred developers, but that should expand into the thousands. Movidius is also shopping the processor to customers and software partners beyond Google. Soon, any new flagship smartphone will have a co-processor very much like the Myriad 1 and a system designed to capture and contextualize your surroundings.

Illustrations by Bryce Durbin

Article updated to clarify the role of Movidius in Project Tango.