Earlier today, Google announced that it is about to ship its first Google Glass units. Just in time for their arrival, the company has also posted the developer guidelines for its Glass Mirror API.

Access to the API itself, it’s worth noting, is still in limited preview, and only developers who have access to the Glass hardware will be able to work with it. Everybody else can start building apps based on the documentation but won’t be able to test them.

This first version of the API, which will allow developers to write what Google likes to call “Glassware,” is still relatively limited. The most advanced feature developers get access to is probably the Glass wearer’s location. Because every application communicates with Glass through Google’s servers, the API takes the form of a set of RESTful services. It is completely cloud-based, and none of the code will actually run on Glass itself.

At its core, the API allows developers to send information to Glass devices and receive information from them. Using the API looks to be relatively straightforward, though also a bit limited. Users will subscribe to new apps from the developer’s website (and Google has launched a set of icons developers can use on their sites for this, too).
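To make the cloud-based model a bit more concrete, here is a minimal Python sketch of what preparing a request to one of these RESTful services might look like. The endpoint URL, the `text` field, the bearer-token header and the `build_timeline_request` helper are all assumptions made for illustration, not details confirmed in Google's announcement, and the request is only built, never sent.

```python
import json
import urllib.request

# Assumed endpoint for inserting a timeline item; verify against Google's docs.
TIMELINE_URL = "https://www.googleapis.com/mirror/v1/timeline"


def build_timeline_request(access_token: str, text: str) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying a simple text card."""
    body = json.dumps({"text": text}).encode("utf-8")  # "text" is an assumed field name
    return urllib.request.Request(
        TIMELINE_URL,
        data=body,
        method="POST",
        headers={
            # Assumes OAuth 2.0 bearer-token authorization.
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


# The token below is a placeholder, not a real credential.
request = build_timeline_request("HYPOTHETICAL_TOKEN", "Hello from the cloud")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would actually send it. Because the developer's server talks to Google's servers, and never to the device, nothing in this sketch would run on Glass itself.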

Google currently offers starter projects for Java and Python developers, as well as client libraries for Go, PHP, .NET, Ruby and Dart.

Glassware

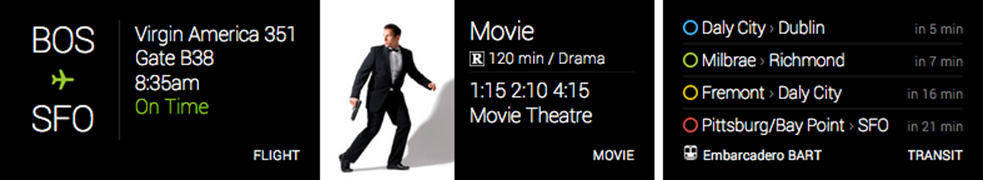

The API will give developers the option to communicate with users through timeline cards, which can include text, rich HTML, images and video. These timeline cards are not unlike Google Now cards on Android and can be bundled into packages – and users can navigate between them – or they can come as stand-alone cards.
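As a rough illustration, a timeline card could be described as a small JSON document. The Python sketch below builds such a payload. The field names (`text`, `html`, `bundleId`, `isBundleCover`) and the `make_card` helper are assumptions for the sake of the example and should be checked against Google's documentation.

```python
import json
from typing import Optional


def make_card(
    text: Optional[str] = None,
    html: Optional[str] = None,
    bundle_id: Optional[str] = None,
    is_cover: bool = False,
) -> dict:
    """Return a dict describing one timeline card (field names are assumed)."""
    if text is None and html is None:
        raise ValueError("a card needs text or html content")
    card = {}
    if text is not None:
        card["text"] = text
    if html is not None:
        card["html"] = html
    if bundle_id is not None:
        # Cards that share a bundle id are meant to be grouped together.
        card["bundleId"] = bundle_id
        card["isBundleCover"] = is_cover
    return card


standalone = make_card(text="Your flight boards in 30 minutes")
cover = make_card(html="<article><p>Trip summary</p></article>",
                  bundle_id="trip-42", is_cover=True)
print(json.dumps([standalone, cover], indent=2))
```

Here `standalone` stands for a card that comes on its own, while `cover` would be the first card of a bundle that users can navigate through.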

The API also provides developers with the ability to add menu items to their apps. These, Google says, can be system commands like “read aloud,” but developers can also write custom commands for their specific apps that users can bring up through the menu system or through voice commands.
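A sketch of how menu items might be attached to a card, in Python: one system command and one custom command. The `menuItems` field, the `READ_ALOUD` and `CUSTOM` action names, and the `id`/`values`/`displayName` structure are assumptions about the payload format, and the helper functions are hypothetical.

```python
from typing import Iterable


def builtin_item(action: str) -> dict:
    """A system command such as read aloud (action name is assumed)."""
    return {"action": action}


def custom_item(item_id: str, display_name: str) -> dict:
    """An app-specific command; the id lets the app recognise the selection."""
    return {
        "action": "CUSTOM",
        "id": item_id,
        "values": [{"displayName": display_name}],
    }


def with_menu(card: dict, items: Iterable[dict]) -> dict:
    """Return a copy of the card with menu items attached."""
    updated = dict(card)  # shallow copy so the original card is left untouched
    updated["menuItems"] = list(items)
    return updated


card = {"text": "New message from Sam"}
card_with_menu = with_menu(
    card,
    [builtin_item("READ_ALOUD"), custom_item("archive", "Archive")],
)
print(card_with_menu)
```

Giving each custom command its own `id` is one way an app could tell which menu entry the user picked when Google's servers report the selection back.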

As for the user interface, Google gives developers the option to use their own custom HTML, but the Glass team also provides developers with a base CSS file. As Google notes, “crafting your own template gives you the power to control how your content is rendered, but with that power comes responsibility.” Because of this, Google “strongly encourages” developers to use its own template.

Photos and videos (H.264 and H.264 baseline) should have a 16:9 aspect ratio and a resolution of about 640×360 pixels (that’s also the maximum resolution of the Glass display). Audio can be delivered in the AAC and MP3 formats.
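The size guidance boils down to simple arithmetic, which the following Python sketch works through. The helper names are hypothetical, and treating 640 by 360 as a hard limit is an assumption, since Google only says "about".

```python
from fractions import Fraction
from typing import Tuple

MAX_WIDTH, MAX_HEIGHT = 640, 360   # display resolution cited in the guidelines
TARGET_RATIO = Fraction(16, 9)


def fits_glass(width: int, height: int) -> bool:
    """True if the media is exactly 16:9 and no larger than the display."""
    if width <= 0 or height <= 0:
        return False
    return (
        Fraction(width, height) == TARGET_RATIO
        and width <= MAX_WIDTH
        and height <= MAX_HEIGHT
    )


def scale_to_glass(width: int, height: int) -> Tuple[int, int]:
    """Shrink media to fit within the display, keeping its aspect ratio."""
    scale = min(Fraction(MAX_WIDTH, width), Fraction(MAX_HEIGHT, height), 1)
    return int(width * scale), int(height * scale)


print(fits_glass(640, 360))        # True
print(fits_glass(1920, 1080))      # False: right shape, too large
print(scale_to_glass(1920, 1080))  # (640, 360)
print(scale_to_glass(1280, 960))   # (480, 360): a 4:3 source stays 4:3
```

Using `Fraction` keeps the ratio comparison exact, so 16:9 sources are not rejected because of floating-point rounding.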

Best Practices

Given that Glass is a new concept, Google also posted some general guidelines for how apps should interact with Glass, though it’s not clear if the company plans to enforce any of these directly.

Developers, Google says, should make sure their apps are designed with Glass in mind and should always test them on Glass before release. The Glass experience should never get in the user’s way, and apps shouldn’t annoy users with frequent and loud notifications. Apps should also focus on real-time notifications and react to a user’s action as soon as possible. Most importantly, given that Glass is meant to be worn all day, developers should not surprise users with “unexpected functionality.”

Overall, many developers will surely feel as if Google is limiting how much they can do with Glass, but it’s worth remembering that this is only a first release and chances are that Google will make more features available to developers in the future.

Here are a few of the videos Google posted today that explain some of the Mirror API’s features in more detail: