I’ve been meaning to address this Lytro thing since it hit a few weeks ago. I wrote about omnifocus cameras as far back as 2008, and more recently in 2010. At the time I was more interested in the science behind the systems, though it appears that Lytro uses a different method than either of those.

Lytro has been slightly close-lipped about their camera, to say the least, though that’s understandable when your entire business revolves around proprietary hardware and processes. Some of it can be derived from Lytro founder Ren Ng’s dissertation (which is both interesting and readable), but in the meantime it remains to be shown whether these “living pictures” are truly compelling or something which will be forgotten instantly by consumers. A recent fashion shoot with model Coco Rocha, the first in-vivo demonstration of the device, is dubious evidence at best.

A prototype camera was loaned out for an afternoon with Lytro’s photographer Eric Cheng, and while the hardware itself has been carefully edited or blurred out of the making-of video, it’s clear that the device is no larger than a regular point-and-shoot, and it seems to function more or less normally, with an LCD of some sort on the back, and the usual framing techniques. No tripod required, etc. It’s worth noting that they did this in broad daylight with a gold reflector for lighting, so low light capability isn’t really addressed — but I’m getting ahead of myself.

Speaking from the perspective of a tech writer and someone interested in cameras, optics, and this sort of thing in general, I have to say the technology is absolutely amazing. But from the perspective of a photographer, I’m troubled. To start with, a large portion of the photography process has been removed — and not simply a technical part, but a creative part. There’s a reason focus is called focus and not something like “optical optimum” or “sharpness.” Focus is about making a decision as a photographer about what you’re taking a picture of. It’s clear that Ng is not of the same opinion: he describes focusing as “a chore,” and believes removing it simplifies the process. In a way, it does — the way hot dogs simplify meat. Without focus, it’s just the record of a bunch of photons. And saying it’s a revolution in photography is like saying dioramas are a revolution in sculpture.

I’m also concerned about image quality. The camera seems to be fundamentally limited to a low resolution — and by resolution I mean true definition, not just pixel count. I say fundamentally because of the way the device works. Let me get technical here for a second, though there’s a good chance I’m wrong in the particulars.

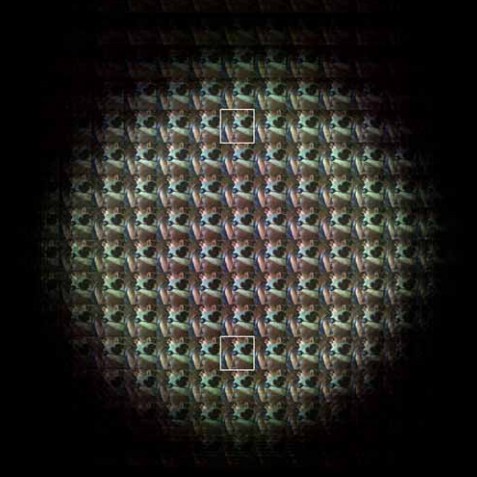

The way the device works is more or less the way I imagined it did before I read Ng’s dissertation. To be brief, the image from the main lens is broken up by a microlens array over the image sensor, and by analyzing (a complex and elegant process) how the light enters various pixel wells underneath the many microlenses (which each see a slightly different picture due to their different placements), a depth map is created along with the color and luminance maps that make up traditional digital images. Afterwards, an image can be rendered with only the objects at a selected depth level rendered in maximum clarity. The rest is shown with increasing blur, probably according to some standard curve governing depth of field falloff.
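For anyone who wants to see the basic idea in miniature, here is a toy sketch of shift-and-add refocusing, the textbook way of refocusing from several slightly offset views. It is not Lytro's actual pipeline, which is proprietary; the function name `refocus`, the one-dimensional "views," and the integer shifts are all simplifying assumptions for illustration.

```python
def refocus(views, offsets, shift_per_offset):
    """Shift each 1-D view in proportion to its viewpoint offset, then average.

    views            -- list of equal-length lists of brightness values
    offsets          -- viewpoint offset of each view (e.g. -1, 0, 1)
    shift_per_offset -- integer pixel shift per unit of offset; choosing it
                        selects which depth ends up sharp (hypothetical knob)
    """
    width = len(views[0])
    result = []
    for x in range(width):
        total, count = 0.0, 0
        for view, u in zip(views, offsets):
            src = x + u * shift_per_offset
            if 0 <= src < width:      # ignore samples that fall off the edge
                total += view[src]
                count += 1
        result.append(total / count if count else 0.0)
    return result


# Toy scene: one bright point whose position moves 2 pixels per viewpoint step.
offsets = [-1, 0, 1]
views = [
    [0, 0, 1, 0, 0, 0, 0, 0, 0],   # seen from offset -1
    [0, 0, 0, 0, 1, 0, 0, 0, 0],   # seen from offset  0
    [0, 0, 0, 0, 0, 0, 1, 0, 0],   # seen from offset +1
]

sharp = refocus(views, offsets, shift_per_offset=2)    # shift matches the parallax
blurred = refocus(views, offsets, shift_per_offset=0)  # no shift: wrong depth

print(sharp)
print(blurred)
```

With the shift matched to the point's parallax, the three views line up and the point comes back at full strength in one pixel; with no shift, the same light is smeared across three positions. Note that the output is no wider than any single view, which is the resolution cost in a nutshell.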

Immediately it must be perceived that an enormous amount of detail is lost, not just because you are interposing an extra optical element between the light and the sensor (and one which simultaneously must be extremely low in faults and yet is very difficult to make so), but also because the system fundamentally relies on creating semi-redundant data to compare with one another, meaning pixels are yielding less data for a final image than they would be in a traditional system. They are of course providing information of a different kind, but as far as producing a sharp, accurate image, they are doing less. Ng acknowledges this in his paper, and the reduction of a 16-megapixel sensor to a 296×296 image (a reduction of some 99.5% of the pixel count) in the prototype is testament to this reducing factor.
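The size of that reduction is easy to check for yourself. The snippet below is only back-of-the-envelope arithmetic using the two figures quoted above; it assumes "16 megapixels" means exactly 16,000,000 pixels, which is a nominal figure rather than the prototype sensor's precise count.

```python
SENSOR_PIXELS = 16_000_000   # nominal "16 megapixels" (assumed exact)
OUTPUT_SIDE = 296            # output image is 296 x 296


def resolution_reduction(sensor_pixels, output_side):
    """Compare a square output image against the sensor's raw pixel count."""
    output_pixels = output_side * output_side
    kept_fraction = output_pixels / sensor_pixels
    return {
        "output_pixels": output_pixels,
        "reduction_percent": 100.0 * (1.0 - kept_fraction),
        "sensor_pixels_per_output_pixel": sensor_pixels / output_pixels,
    }


stats = resolution_reduction(SENSOR_PIXELS, OUTPUT_SIDE)
for name, value in stats.items():
    print(f"{name}: {value:,.2f}")
```

Under those assumptions, well over a hundred sensor pixels stand behind each pixel of the final image, which gives a sense of how much raw capture is spent on directional information rather than on definition.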

The process has no doubt been improved along the lines he suggests are possible: square pixels have likely been replaced with hexagonal, the lenses and pixel widths made complementary, and so on. But the limitation still means trouble, especially on the microscopic sensors being deployed to camera phones and compact point and shoots. I’ve complained before that these micro-cameras already have terrible image quality, smearing, noise, limited exposure options, and so on. The Lytro approach solves some of these problems and exacerbates others. On the whole downsampling might be an improvement, now that I think of it (the resolutions of cheap cameras exceed their resolving power immensely), but I’m worried that the cheap lenses and small size will limit Lytro’s ability to make that image as versatile as their samples — at least, for a decent price. There’s a whole chapter in Ng’s paper about correcting for micro-optical aberrations, though, so it’s not like they’re unaware of this issue. I’m also worried about the quality of the blur or bokeh, but that’s an artistic scruple unlikely to be shared by casual shooters.

The limitation of the aperture to a single opening simplifies the mechanics but also leaves control of the image to ISO and exposure length. These are both especially limited in smaller sensors, since the tiny, densely-packed photosensors can’t be relied on for high ISOs, and consequently the exposure times tend to be longer than is practical for handheld shots. Can the Lytro camera possibly gain back in post-processing what it loses in initial definition?
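To put rough numbers on that trade-off, the standard exposure equation relates shutter time to scene brightness, f-number, and ISO. The sketch below assumes an f/2 lens and a dim indoor scene of about EV 5 (at ISO 100); neither figure comes from Lytro, and `shutter_time` is just an illustrative helper.

```python
def shutter_time(ev100, f_number, iso):
    """Shutter time in seconds for a correct exposure.

    Uses the standard relation EV100 = log2(N^2 / t) at ISO 100,
    with each doubling of ISO halving the required time.
    """
    return (f_number ** 2) / (2.0 ** ev100 * (iso / 100.0))


F_NUMBER = 2.0    # assumed fixed aperture, not a published Lytro spec
INDOOR_EV = 5     # assumed dim indoor scene

for iso in (100, 400, 800, 1600):
    t = shutter_time(INDOOR_EV, F_NUMBER, iso)
    print(f"ISO {iso:>4}: {t:.4f} s (about 1/{round(1 / t)})")
```

With the aperture fixed, the only way out of a slow, shake-prone shutter speed is to push the ISO, which is exactly where small, densely packed sensors struggle.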

Lastly, and this is more of a question, I’m wondering whether these images can be made to be all the way in focus, the way a narrow aperture would show it. My guess is no; there’s a section in the paper on extending the depth of field, but I’m not sure the effect will stand scrutiny in normal-sized images. It seems to me (though I may be mistaken) that the optical inconsistencies (which, to be fair, generate parallax data and enable the 3D effect) between the different “exposures” mean that only slices can be shown at a time, or at the very least there are limitations to which slices can be selected. The fixed aperture may also put a floor on how narrow your depth of field can be. Could the effect achieved in this picture be replicated, for instance? Or would I have been unable to isolate just that quarter-inch slice of the world?

All right, I’m done being technical. My simplified objections are two in number: first, is it really possible to reliably make decent photos with this kind of camera, as it’s intended to be implemented (i.e. as an affordable compact camera)? And second, is it really adding something that people will find worthwhile?

As to the first: designing and launching a device is no joke, and I wonder whether Ng, coming from an academic background, is prepared for the harsh realities of product. Will the team be able to make the compromises necessary to bring it to shelves, and will those compromises harm the device? They’re a smart, driven group so I don’t want to underestimate them, but what they’re attempting really is a technical feat. Distribution and presentation of these photos will have to be streamlined as well. When you think about it, a ton of the “living photo” is junk data, with the “wrong” focus or none at all. Storage space isn’t so much a problem these days, but it’s still something that needs to be looked at.

The second gives me more pause. As a photographer I’m strangely unexcited by the ostensibly revolutionary ability to change the focus. The fashion shoot, a professional production, leaves me cold. The “living photos” seem lifeless to me because they lack artistic direction. I’m afraid that people will find that most photos they want to take are in fact of the traditional type, because the opportunities presented by multiple focus points are simply few and far between. Ng thinks it simplifies the picture-taking process, but it really doesn’t. It removes the need to focus, but the problem is that we, as human beings, focus. Usually on either one thing or the whole scene. Lytro photos don’t seem to capture either of those things. They present the information from a visual experience in a way that is unfamiliar and unnatural except in very specific circumstances. A “focused” Lytro photo will never be as good as its equivalent from a traditional camera, and a “whole scene” view presents no more than you would see if the camera was stopped down. Like the compound insect eye it partially mimics, it’s amazing that it works in the first place, and its foreignness by its nature makes it intriguing, but I wouldn’t call it a step up.

“Gimmick” is far too harsh a word to use on a truly innovative and exciting technology such as Lytro’s. But I fear that will be the perception when the tools they’ve created are finally put to use. It’s new, and it’s powerful, yes, but is it something people will actually want to use? I think that, like so many high-tech toys these days, it’s more fun in theory than it is in practice.

That’s just my opinion, though. Whether I’m right or wrong will of course be determined later this year, when Lytro’s device is actually delivered, assuming they ship on time. We’ll be sure to update then (if not before; I have a feeling Ng may want to respond to this article) and get our own hands-on impressions of this interesting device.