Google’s monthly self-driving car reports are fun to read through, and give transparent accounts of what the team is up to, how the cars are performing, and any lessons learned along the way. Last month’s report focused on pedestrians, with Halloween lending a hand.

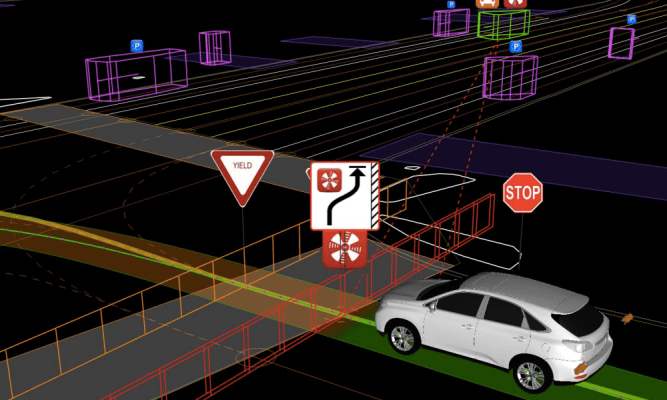

This month? The company says it’s currently averaging 10,000-15,000 autonomous miles per week on public streets with 23 Lexus RX450h SUVs and 30 prototypes on the road. We also learned more about the car that was going too slow and got pulled over, as well as one of the cars being rear-ended at a red light.

On the former, Google explains why its cars were going so damn slow:

From the very beginning we designed our prototypes for learning; we wanted to see what it would really take to design, build, and operate a fully self-driving vehicle — something that had never existed in the world before. This informed our early thinking in a couple of ways. First, slower speeds were easier for our development process. A simpler vehicle enabled us to focus on the things we really wanted to study, like the placement of our sensors and the performance of our self-driving software. Secondly, we cared a lot about the approachability of the vehicle; slow speeds are generally safer (the kinetic energy of a vehicle moving at 35mph is twice that of one moving at 25mph) and help the vehicles feel at home on neighborhood streets.

The Mountain View police now know why Google’s cars are driving around at that speed…and so do we.
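
The kinetic energy comparison in Google’s explanation is easy to check. Assuming the same vehicle mass $m$ at both speeds (an assumption made here for the comparison, not a figure from the report), kinetic energy scales with the square of speed, so the ratio comes out just under two:

$$
\frac{E_{35}}{E_{25}} = \frac{\tfrac{1}{2}\, m\, (35)^2}{\tfrac{1}{2}\, m\, (25)^2} = \left(\frac{35}{25}\right)^2 = 1.96
$$

The mass and the unit conversion cancel out, which is why the comparison works in miles per hour.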

On the rear-end accident, one of Google’s Lexus autonomous vehicles, or “Google AV,” was hit from behind by a car going 4 MPH while the Google AV waited to take a right at a red light. The other car rolled forward after coming to a stop and bumped into the Google AV. Nobody was hurt. The team went on to explain how it trains its software to handle situations such as knowing when it’s OK to turn right on red:

Our vehicles can identify situations where making a right turn on red is permissible and the position of our sensors gives us good visibility of left-hand traffic. After coming to a complete stop, we nudge forward if we need to get a better view (for example, if there’s a truck or bus blocking our line of sight). Our software and sensors are good at tracking multiple objects and calculating the speed of oncoming vehicles, enabling us to judge when there’s a big enough gap to safely make the turn. And because our sensors are designed to see 360 degrees around the car, we’re on the lookout for pedestrians stepping off curbs or cyclists approaching from behind.
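
To make the “big enough gap” idea concrete, here is a minimal sketch of one way such a check could work. It is not Google’s software: the names `OncomingVehicle`, `time_to_arrival`, and `gap_is_safe`, the constant-speed assumption, and the 6-second threshold are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class OncomingVehicle:
    """A tracked vehicle approaching from the left (hypothetical model)."""
    distance_m: float  # distance from the intersection, in meters
    speed_mps: float   # speed toward the intersection, in meters per second


def time_to_arrival(vehicle: OncomingVehicle) -> float:
    """Seconds until the vehicle reaches the intersection at constant speed."""
    if vehicle.speed_mps <= 0:
        # Stopped or moving away: treat it as never arriving.
        return float("inf")
    return vehicle.distance_m / vehicle.speed_mps


def gap_is_safe(vehicles: list[OncomingVehicle], required_gap_s: float = 6.0) -> bool:
    """True if every tracked vehicle is at least required_gap_s away in time.

    The 6-second default is an illustrative assumption, not a real-world value.
    """
    return all(time_to_arrival(v) >= required_gap_s for v in vehicles)
```

With these assumptions, a car 100 meters away at 10 m/s leaves a 10-second gap and passes the check, while a second car 30 meters away at 15 m/s (2 seconds out) would block the turn. A real system would also have to account for acceleration, pedestrians, and cyclists, as the quote above notes.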

It’s all fascinating stuff. It’s like teaching a kid how to drive for the first time, except this kid is a big damn car driving itself around. Google feeds its software with all types of data, collecting more and more information as the cars drive the streets.

You can read the full report here.