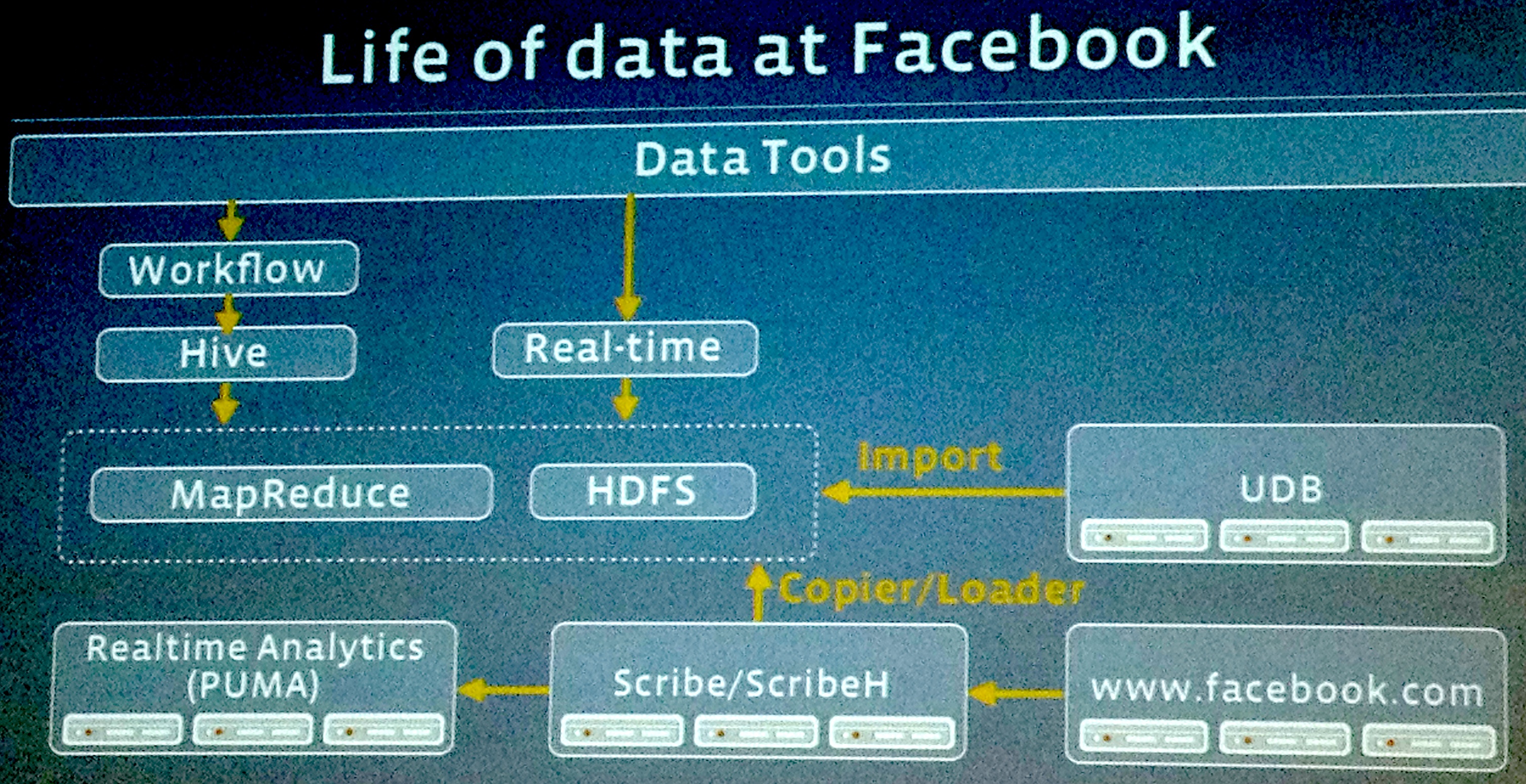

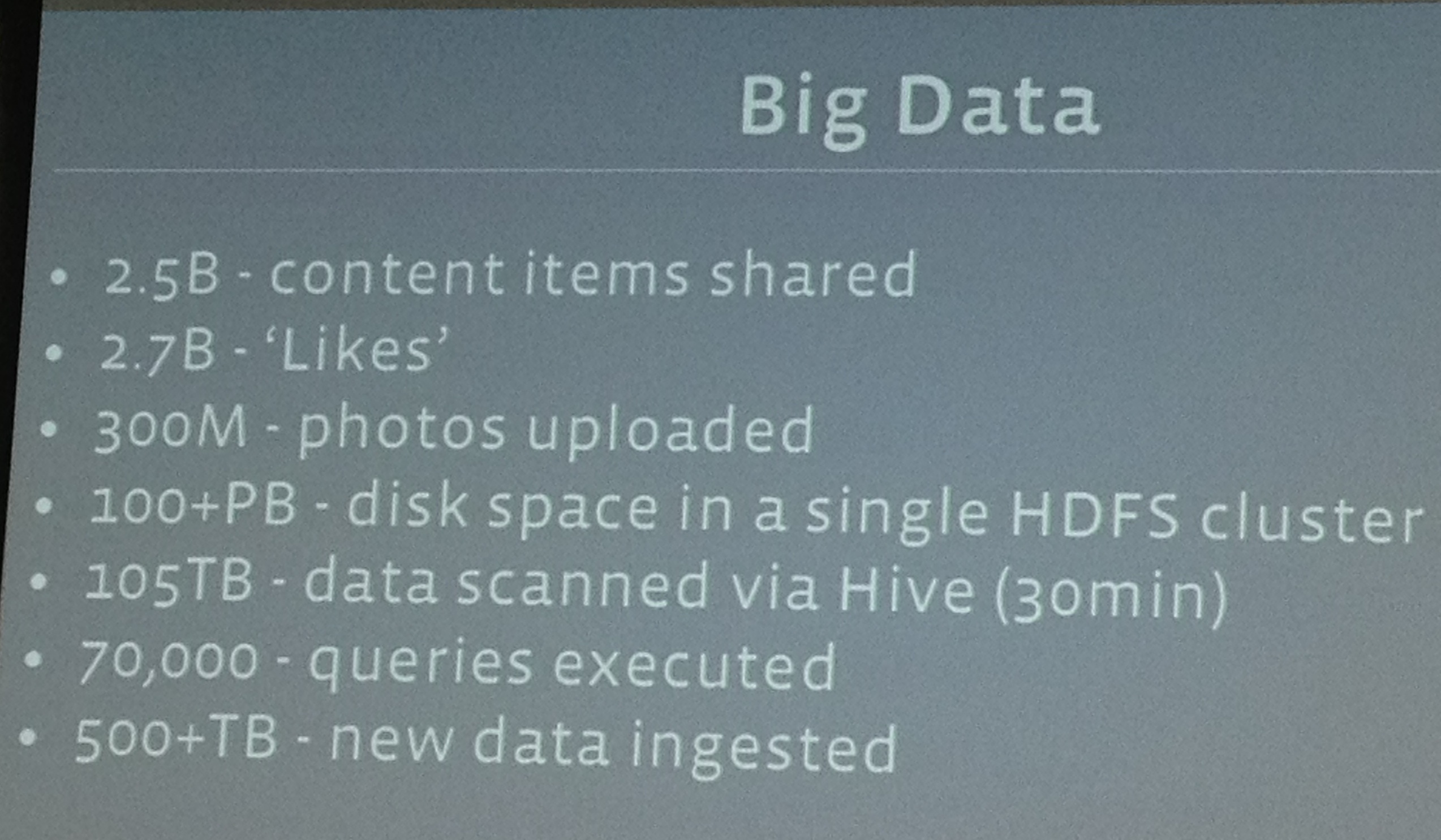

Facebook revealed some big, big stats on big data to a few reporters at its HQ today, including that its system processes 2.5 billion pieces of content and 500+ terabytes of data each day. It’s pulling in 2.7 billion Like actions and 300 million photos per day, and it scans roughly 105 terabytes of data each half hour. Plus it gave the first details on its new “Project Prism”.
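To put those daily totals in perspective, here is a rough back-of-the-envelope conversion into sustained rates. It is only a sketch: it assumes decimal terabytes (10^12 bytes) and perfectly even load across the day, neither of which Facebook specified.

```python
# Rough conversion of the quoted daily figures into per-second rates.
# Assumptions (not from Facebook): decimal units and uniform load over the day.
TB = 10**12
GB = 10**9
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_HALF_HOUR = 30 * 60

ingest_per_day_tb = 500       # "500+ terabytes of data each day"
scan_per_half_hour_tb = 105   # "roughly 105 terabytes ... each half hour"

ingest_rate_gb_s = ingest_per_day_tb * TB / SECONDS_PER_DAY / GB
scan_per_day_tb = scan_per_half_hour_tb * 48  # 48 half hours in a day
scan_rate_gb_s = scan_per_half_hour_tb * TB / SECONDS_PER_HALF_HOUR / GB

print(f"ingest: {ingest_rate_gb_s:.2f} GB/s")   # ingest: 5.79 GB/s
print(f"scanned per day: {scan_per_day_tb} TB") # scanned per day: 5040 TB
print(f"scan: {scan_rate_gb_s:.2f} GB/s")       # scan: 58.33 GB/s
```

In other words, under these assumptions the system scans roughly ten times as much data as it ingests each day, about 5 petabytes.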

VP of Engineering Jay Parikh explained why this is so important to Facebook: “Big data really is about having insights and making an impact on your business. If you aren’t taking advantage of the data you’re collecting, then you just have a pile of data, you don’t have big data.” By processing data within minutes, Facebook can roll out new products, understand user reactions, and modify designs in near real-time.

Another stat Facebook revealed was that over 100 petabytes of data are stored in a single Hadoop disk cluster, and Parikh noted, “We think we operate the single largest Hadoop system in the world.” In a hilarious moment, when asked “Is your Hadoop cluster bigger than Yahoo’s?”, Parikh proudly stated “Yes” with a wink.

While that sounds like a lot to smaller businesses, he noted that in a few months “No one will care you have 100 petabytes of data in your warehouse”. The speed of ingestion keeps on increasing, and “the world is getting hungrier and hungrier for data.”

And this data isn’t just helpful for Facebook. It passes on the benefits to its advertisers. Parikh explained, “We’re tracking how ads are doing across different dimensions of users across our site, based on gender, age, interests [so we can say] ‘actually this ad is doing better in California so we should show more of this ad in California to make it more successful.'”
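The kind of per-segment comparison Parikh describes can be illustrated with a toy aggregation. This is a minimal sketch, not Facebook's pipeline: the event format, the sample rows, and the `ctr_by_segment` helper are all made up for illustration.

```python
from collections import defaultdict

# Hypothetical impression log: (ad_id, region, clicked). Invented sample data.
events = [
    ("ad_1", "California", True),
    ("ad_1", "California", False),
    ("ad_1", "California", True),
    ("ad_1", "Virginia", False),
    ("ad_1", "Virginia", False),
    ("ad_1", "Virginia", True),
    ("ad_1", "Virginia", False),
]

def ctr_by_segment(events):
    """Return click-through rate keyed by (ad_id, region)."""
    shown = defaultdict(int)
    clicked = defaultdict(int)
    for ad_id, region, was_clicked in events:
        key = (ad_id, region)
        shown[key] += 1
        clicked[key] += int(was_clicked)
    return {key: clicked[key] / shown[key] for key in shown}

rates = ctr_by_segment(events)
best = max(rates, key=rates.get)  # segment with the highest CTR
print(best, round(rates[best], 2))
```

With this sample, the California segment comes out ahead, which is the sort of signal that would prompt showing the ad more often there. The same grouping would work for gender, age, or interests by changing the key.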

Facebook doesn’t even need to push changes to see their impact now. “By looking at historical data, we can validate a model before putting it into production. We put data in a simulation, and can see ‘will this increase CTR by X?'” It also has a system called Gatekeeper that lets it simultaneously test different changes on tiny percentages of the user base.
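Facebook did not describe how Gatekeeper works internally, but a common way to expose a change to a small, stable slice of users is deterministic hash bucketing. The sketch below shows that general technique only; the `in_rollout` function, the feature name, and the user IDs are hypothetical.

```python
import hashlib

def in_rollout(user_id: str, feature: str, percent: float) -> bool:
    """Return True if the user falls inside the rollout percentage.

    Hashing feature and user together gives each feature its own
    independent slice of users, so several tests can run at once.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < percent * 100  # e.g. percent=1.0 covers buckets 0..99

# Simulate a 1% rollout of a made-up feature over made-up user IDs.
users = [f"user{i}" for i in range(100_000)]
exposed = sum(in_rollout(u, "new_composer", 1.0) for u in users)
share = exposed / len(users)
print(f"{share:.2%} of users see the change")
```

Because the assignment depends only on the hash, a given user gets the same answer on every request, and no per-user state needs to be stored.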

Then there’s “Project Prism”. Right now Facebook actually stores its entire live, evolving user database in a single data center, with others used for redundancy and other data. When the main chunk gets too big for one data center it has to move the whole thing to another that’s been expanded to fit it. This shuttling around is a waste of resources.

Parikh says, “Project Prism lets us take this monolithic warehouse…and physically separate [it] but maintain one view of the data.” This means the live dataset can be split up and hosted across Facebook’s data centers in California, Virginia, Oregon, North Carolina, and Sweden.

Internally, Facebook has chosen not to partition data or erect barriers between different business units like ads and customer support. Product developers can look at data across departments to assess whether their latest little tweak increased time-on-site, triggered complaints, or generated ad clicks.

Users might be a little bit uneasy about the idea that Facebook employees could look so deep into their activity, but Facebook assured me there are numerous protections against abuse. All data access is logged so Facebook can track which workers are looking at what. Only those working on building products that require data access get it, and there’s an intensive training process around acceptable use. And if an employee pries where they’re not supposed to, they’re fired. Parikh stated strongly “We have a zero-tolerance policy.”