Last June, just months after OpenAI released ChatGPT, a couple of New York City lawyers infamously used the tool to write a very poor brief. The AI cited fake cases, leading to an uproar, an angry judge and two very embarrassed attorneys. It was proof that while bots like ChatGPT can be helpful, you really have to check their work carefully, especially in a legal context.

The case did not escape the folks at LexisNexis, a legal software company that offers tooling to help lawyers find the right case law to make their legal arguments. The company sees the potential of AI in helping reduce much of the mundane legal work that every lawyer undertakes, but it also recognizes these very real issues as it begins its generative AI journey.

Jeff Reihl, chief technology officer at LexisNexis, understands the value of AI. In fact, his company has been building the technology into its platform for some time now. But adding ChatGPT-like functionality to its legal toolbox could take that further, helping lawyers work more efficiently by speeding up brief writing and the search for citations.

“We as an organization have been working with AI technologies for a number of years. I think what is really, really different now since ChatGPT came out in November, is the opportunity to generate text and the conversational aspects that come with this technology,” Reihl told TechCrunch+.

And while he sees this as a potentially powerful layer on top of the LexisNexis family of legal software, he also recognizes that it comes with risks. "With generative AI, I think we're seeing, really, the power and limitations of that technology, what it can and can't do well," he said. "And this is where we think that bringing together our capabilities that we have and the data that we have, along with the capabilities that these large language models have, that we can do something that's fundamentally transformative for the legal industry."

Survey says . . .

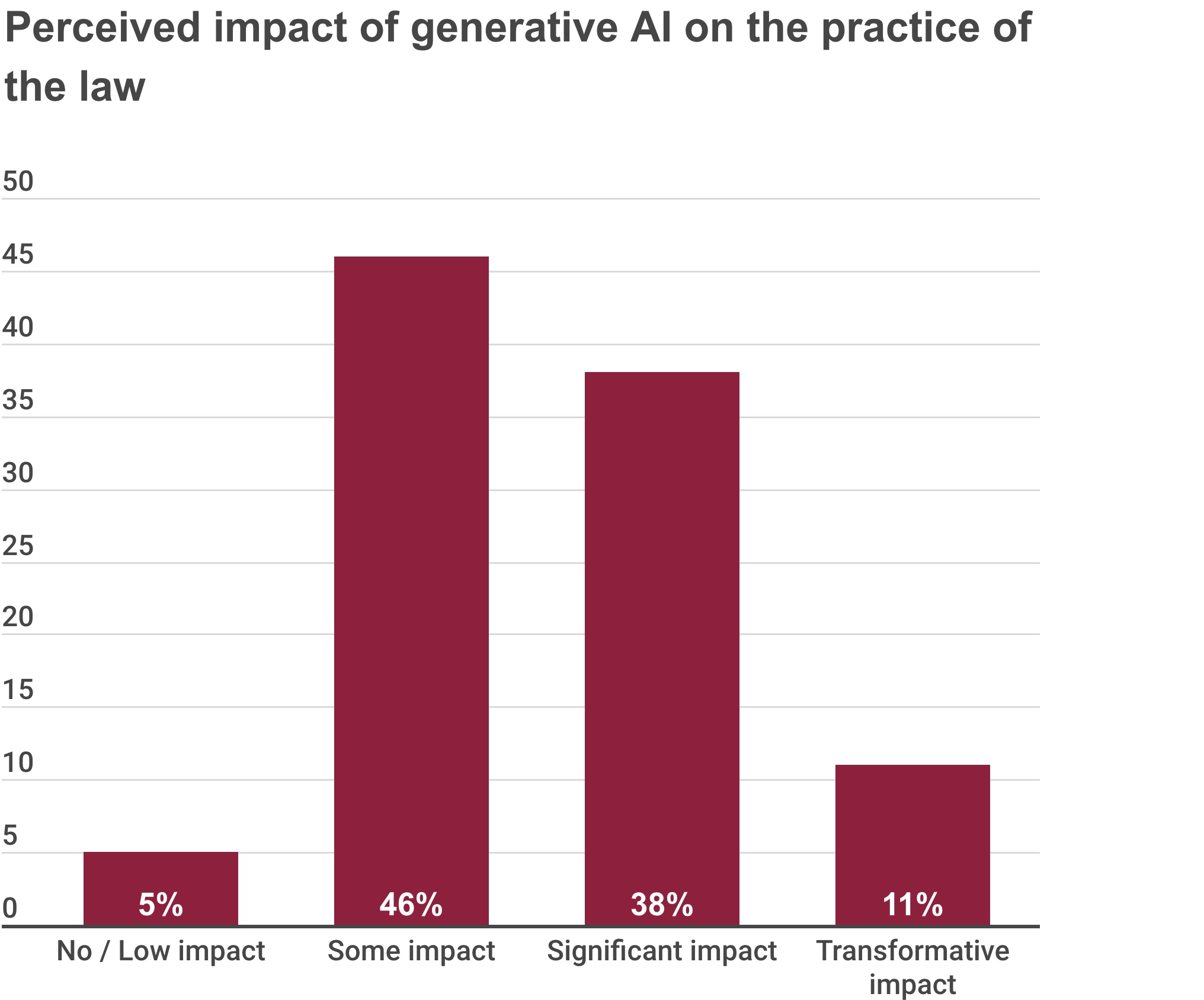

LexisNexis recently surveyed 1,000 lawyers about the potential of generative AI for their profession. The data showed that respondents were mostly positive, but it also revealed that lawyers recognize the technology's weaknesses, and that awareness is tempering some of their enthusiasm.

As an example, when asked about the perceived impact of generative AI on their profession, 46% of respondents gave the rather lukewarm answer "some impact." But the next most common response, at 38%, was "significant impact." Now, these are subjective views of how they might use a technology that is still being evaluated and is surrounded by a ton of hype, but together those two answers account for 84% of respondents, showing that a large majority of the attorneys surveyed at least see the potential for generative AI to help them do their jobs.

Keep in mind that vendor-driven surveys always leave room for bias, both in the questions asked and in how the data is interpreted, but the results are still helpful for seeing, in general terms, how lawyers think about the tech.

Image Credits: LexisNexis

One of the key problems here is trusting the information you’re getting from the bot, and LexisNexis is working on a number of ways to build users’ trust in the results.

Addressing AI’s known problems

The trust problem is one that all generative AI users are going to face, at least for the time being, but Reihl says his company recognizes what’s at stake here for its customers.

“Given their role and what they do as a profession, what they’re doing has to be 100% accurate,” he said. “They’ve got to make sure that if they’re citing a case, that it is actually still good law and is representing their clients in the right way.”

“If they don’t represent their clients in the best way, they could be disbarred. And we have a history of being able to provide reliable information to our customers.”

In a recent interview with TechCrunch, Sebastian Berns, a doctoral researcher at Queen Mary University of London, said any deployed LLM will hallucinate. There's no escaping it.

“An LLM is typically trained to always produce an output, even when the input is very different from the training data,” he said. “A standard LLM does not have any way of knowing if it’s capable of reliably answering a query or making a prediction.”

LexisNexis is attempting to mitigate that issue in a number of ways. For starters, it is training models on its own vast legal dataset to offset some of the reliability issues we have seen with foundation models. Reihl says the company is also working with the most current case law in its databases, so there won't be the kind of timing problem seen with ChatGPT, which was trained on information from the open web only through 2021.

The company believes that using more relevant and timely training data should produce better results. “We can make sure that any cases that are referenced [in the results] are in our database, so we’re not going to put out a citation that isn’t a real case because we’ve got those cases already in our database.”

While it is not clear whether any company can completely eliminate hallucinations, LexisNexis is letting lawyers see how the bot arrived at an answer by providing a reference back to the source.

“So we can address those limitations that large language models have by combining the power that they have with our technology and give users references back to the case so they can validate it themselves,” Reihl said.

It's important to note that this is a work in progress: LexisNexis is currently working with six customers to refine the approach based on their feedback. The plan is to release AI-fueled tools in the coming months.