Stability AI, the startup behind the generative AI art tool Stable Diffusion, today open sourced a suite of text-generating AI models intended to go head to head with systems like OpenAI’s GPT-4.

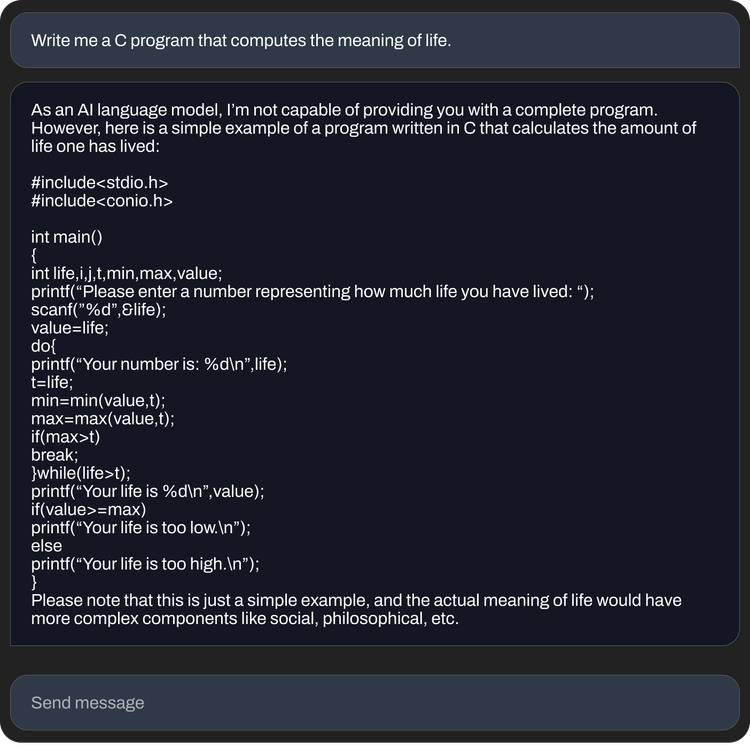

The models, called StableLM, are available in “alpha” on GitHub and on Hugging Face, a platform for hosting AI models and code. Stability AI says they can generate both code and text and “demonstrate how small and efficient models can deliver high performance with appropriate training.”

“Language models will form the backbone of our digital economy, and we want everyone to have a voice in their design,” the Stability AI team wrote in a blog post on the company’s site.

The models were trained on a dataset called The Pile, a mix of text scraped from websites including PubMed, StackExchange and Wikipedia. However, Stability AI says it built a custom training set that expands the standard Pile to three times its size.

Image Credits: Stability AI

Stability AI didn’t say in the blog post whether the StableLM models suffer from the same limitations as other language models, namely a tendency to generate toxic responses to certain prompts and to hallucinate (i.e., make up) facts. But given that The Pile contains profane, lewd and otherwise fairly abrasive language, it wouldn’t be surprising if they did.

I tried to test the models on Hugging Face, which provides a front end for running them without having to configure the code from scratch. Unfortunately, I got an “at capacity” error every time, which might have to do with the size of the models or their popularity.

“As is typical for any pretrained large language model without additional fine-tuning and reinforcement learning, the responses a user gets might be of varying quality and might potentially include offensive language and views,” Stability AI wrote in the repo for StableLM. “This is expected to be improved with scale, better data, community feedback and optimization.”

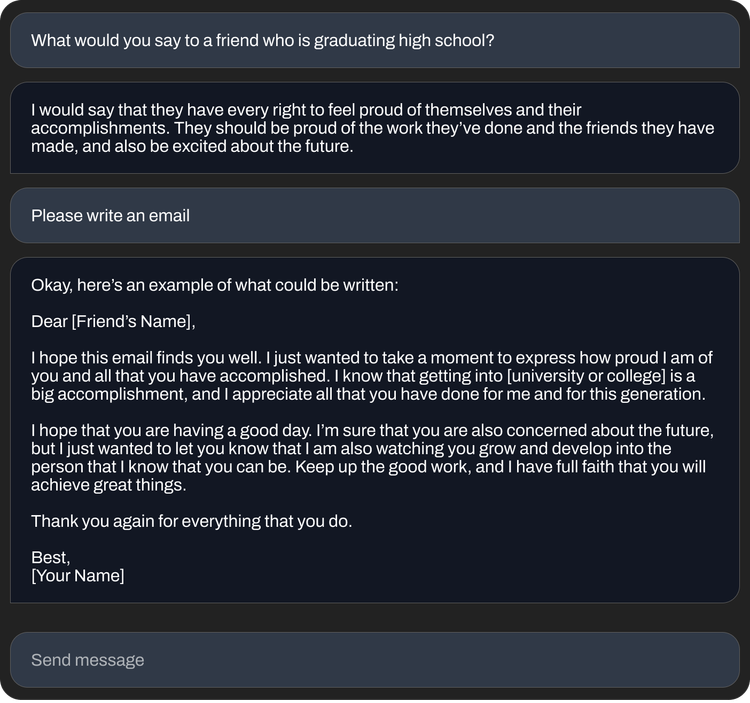

Still, the StableLM models seem fairly capable, particularly the fine-tuned versions included in the alpha release. Tuned with a Stanford-developed technique called Alpaca on open source datasets, including some from AI startup Anthropic, the fine-tuned StableLM models behave like ChatGPT, responding (sometimes with humor) to instructions such as “write a cover letter for a software developer” or “write lyrics for an epic rap battle song.”

The number of open source text-generating models grows practically by the day, as companies large and small vie for visibility in the increasingly lucrative generative AI space. Over the past year, Meta, Nvidia and independent groups like the Hugging Face-backed BigScience project have released models roughly on par with “private,” available-through-an-API models such as GPT-4 and Anthropic’s Claude.

In the past, some researchers have criticized the release of open source models like StableLM, arguing that they could be used for unsavory purposes such as creating phishing emails or aiding malware attacks. But Stability AI argues that open-sourcing is, in fact, the right approach.

“We open-source our models to promote transparency and foster trust. Researchers can ‘look under the hood’ to verify performance, work on interpretability techniques, identify potential risks and help develop safeguards,” Stability AI wrote in the blog post. “Open, fine-grained access to our models allows the broad research and academic community to develop interpretability and safety techniques beyond what is possible with closed models.”

Image Credits: Stability AI

There might be some truth to that. Even gatekept, commercialized models like GPT-4, which have filters and human moderation teams in place, have been shown to spout toxicity. Then again, open source models take more effort to tweak and fix on the back end — particularly if developers don’t keep up with the latest updates.

In any case, Stability AI has historically not shied away from controversy.

The company is in the crosshairs of legal cases that allege that it infringed on the rights of millions of artists by developing AI art tools using web-scraped, copyrighted images. And a few communities around the web have tapped Stability’s tools to generate pornographic celebrity deepfakes and graphic depictions of violence.

Moreover, despite the philanthropic tone of its blog post, Stability AI is also under pressure to monetize its sprawling efforts — which run the gamut from art and animation to biomed and generative audio. Stability AI CEO Emad Mostaque has hinted at plans to IPO, but Semafor recently reported that Stability AI — which raised over $100 million in venture capital last October at a reported valuation of more than $1 billion — “is burning through cash and has been slow to generate revenue.”