Israel-based AI healthtech company DiA Imaging Analysis, which uses deep learning and machine learning to automate analysis of ultrasound scans, has closed a $14 million Series B round of funding.

Backers in the growth round, which comes three years after DiA last raised, include new investors Alchimia Ventures, Downing Ventures, ICON Fund, Philips and XTX Ventures, with existing investors also participating, including CE Ventures, Connecticut Innovations, Defta Partners, Mindset Ventures and Dr Shmuel Cabilly. The company has raised $25 million to date.

The latest financing will allow DiA to continue expanding its product range and go after new and expanded partnerships with ultrasound vendors, PACS/Healthcare IT companies, resellers and distributors while continuing to build out its presence across three regional markets.

The health tech company sells AI-powered support software to clinicians and healthcare professionals to help them capture and analyze ultrasound imagery — a process which, when done manually, requires human expertise to visually interpret scan data. So DiA touts its AI technology as “taking the subjectivity out of the manual and visual estimation processes being performed today”.

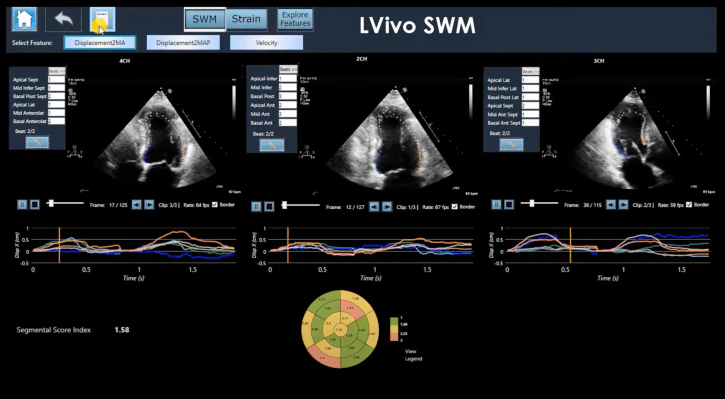

It has trained AIs to assess ultrasound imagery so as to automatically home in on key details or identify abnormalities — offering a range of products targeted at different clinical requirements associated with ultrasound analysis, including several focused on the heart (where its software can, for example, be used to measure and analyze aspects like ejection fraction; right ventricle size and function; plus perform detection assistance for coronary disease, among other offerings).

It also has a product that leverages ultrasound data to automate measurement of bladder volume.

DiA claims its AI software imitates the way the human eye detects borders and identifies motion — touting it as an advance over “subjective” human analysis that also brings speed and efficiency gains.

“Our software tools are supporting tool for clinicians needing to both acquiring the right image and interpreting ultrasound data,” says CEO and co-founder Hila Goldman-Aslan.

DiA’s AI-based analysis is currently being used in some 20 markets, including in North America and Europe (in China, it also says, a partner gained approval for use of its software as part of their own device). The company’s go-to-market strategy involves working with channel partners (such as GE, Philips and Konica Minolta) which offer the software as an add-on to their ultrasound or PACS systems.

Per Goldman-Aslan, more than 3,000 end users have access to its software at this stage.

“Our technology is vendor neutral and cross-platform therefore runs on any ultrasound device or healthcare IT systems. That is why you can see we have more than 10 partnerships with both device companies as well as healthcare IT/PACS companies. There is no other startup in this space I know that has these capabilities, commercial traction or many FDA/CE AI-based solutions,” she says, adding: “Up to date we have 7 FDA/CE approved solutions for cardiac and abdominal areas and more are on the way.”

An AI’s performance is of course only as good as the data set it’s been trained on. And in the healthcare space efficacy is an especially crucial factor — given that any bias in training data could lead to a flawed model which misdiagnoses or under/over-estimates disease risks in patient groups who were not well represented in the training data.

Asked about how its AIs were trained to be able to spot key details in ultrasound imagery, Goldman-Aslan told TechCrunch: “We have access to hundreds of thousands ultrasound images through many medical facilities therefore have the ability to move fast from one automatic area to another.”

“We collect diverse population data with different pathology, as well as data from various devices,” she added.

“There is a Phrase ‘Garbage in Garbage out’. The key is not to bring garbage in,” she also told us. “Our data sets are tagged and classified by several physicians and technicians, each are experts with many years on experience.

“We also have a strong rejection system that rejects images that was taken incorrectly. This is how we overcome the subjectivity of how data was acquired.”

It’s worth noting that the FDA clearances obtained by DiA are 510(k) Class II approvals, and Goldman-Aslan confirmed to us that it has not applied (and does not intend to apply) for Premarket Approval (PMA) for its products from the FDA.

The 510(k) route is widely used for gaining approval for putting many types of medical devices into the U.S. market. However, it has been criticized as a light-touch regime — and certainly does not entail the same level of scrutiny as the more rigorous PMA process.

The wider point is that regulation of fast-developing AI technologies tends to lag behind developments in how they’re being applied, including as they push increasingly into the healthcare space, where there’s certainly huge promise but also serious risks if they fail to live up to the glossy marketing. That means there is still something of a gap between the promises made by device makers and how much regulatory oversight their tools actually get.

In the European Union, for example, the CE scheme, which sets out some health, safety and environmental standards for devices, can simply require a manufacturer to self-declare conformity, without any independent verification that they’re actually meeting the standards they claim, although some medical devices can require a degree of independent assessment of conformity under the scheme. But it’s not considered a rigorous regime for regulating the safety of novel technologies like AI.

Hence the EU is now working on introducing an additional layer of conformity assessments specifically for applications of AI deemed “high risk” — under the incoming Artificial Intelligence Act.

Healthcare use cases, like DiA’s AI-based ultrasound analysis, would almost certainly fall under that classification and so would face some additional regulatory requirements under the AIA. For now, though, the on-the-table proposal is being debated by EU co-legislators, and a dedicated regulatory regime for risky applications of AI remains years away from coming into force in the region.