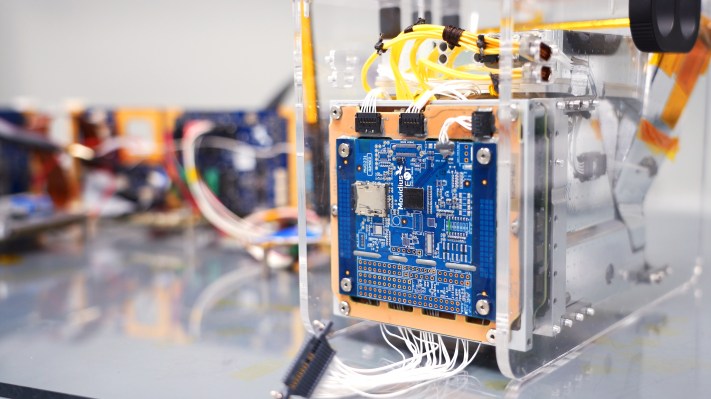

Intel today detailed its contribution to PhiSat-1, a new small satellite that was launched into sun-synchronous orbit on September 2. PhiSat-1 carries a new kind of hyperspectral-thermal camera, along with a Movidius Myriad 2 Vision Processing Unit. That VPU is found in a number of consumer devices on Earth, but this is its first trip to space, and the first time it’ll be handling large amounts of local data, saving researchers back on Earth precious time and satellite downlink bandwidth.

Specifically, the AI on board PhiSat-1 will automatically identify cloud cover: images in which clouds obscure what the scientists studying the data actually want to see. Discarding these images before they’re transmitted means the satellite can realize bandwidth savings of up to 30%, so more useful data reaches Earth while the satellite is in range of ground stations.
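To make the bandwidth argument concrete, here is a minimal Python sketch of the general idea of dropping cloudy captures before downlink. It is not the software running on PhiSat-1: the `Capture` type, the `cloud_fraction` score, the 0.7 threshold, and the sample values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Capture:
    name: str
    size_mb: float
    # Hypothetical score from an onboard classifier: 0.0 (clear) to 1.0 (fully clouded).
    cloud_fraction: float


def select_for_downlink(captures, max_cloud_fraction=0.7):
    """Keep only captures whose cloud score is at or below the (assumed) threshold."""
    return [c for c in captures if c.cloud_fraction <= max_cloud_fraction]


def bandwidth_saved(captures, kept):
    """Fraction of the total data volume that no longer needs to be transmitted."""
    total = sum(c.size_mb for c in captures)
    sent = sum(c.size_mb for c in kept)
    return 0.0 if total == 0 else (total - sent) / total


# Made-up sample data: five equally sized captures, two of them mostly cloud.
captures = [
    Capture("pass-001", 100.0, 0.10),
    Capture("pass-002", 100.0, 0.95),
    Capture("pass-003", 100.0, 0.40),
    Capture("pass-004", 100.0, 0.85),
    Capture("pass-005", 100.0, 0.20),
]

kept = select_for_downlink(captures)
print([c.name for c in kept])
print(f"Bandwidth saved: {bandwidth_saved(captures, kept):.0%}")
```

With this invented sample, two of five captures are dropped, so the saving works out to 40%. The real figure depends entirely on how much cloud the satellite actually sees and on how the classifier is tuned.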

The AI software that runs on the Intel Myriad 2 on PhiSat-1 was created by startup Ubotica, which worked with the hardware maker behind the hyperspectral camera. The software also had to be tuned to compensate for the excess exposure to radiation, though, a bit surprisingly, testing at CERN found that the hardware itself didn’t have to be modified in order to perform within the standards required for its mission.

Computing at the edge takes on a whole new meaning when applied to satellites in orbit, but it’s definitely a place where local AI makes a ton of sense. All the same reasons that companies seek to handle data processing and analytics at the site of sensors here on Earth also apply in space, only greatly magnified when it comes to things like network inaccessibility and connection quality, so expect to see a lot more of this.

PhiSat-1 was launched in September as part of Arianespace’s first rideshare demonstration mission, which the company aims to use to show off its ability to offer launch services to smaller startups for smaller payloads at lower costs.