Most marketers believe there’s a lot of value in having relevant, engaging images featured in content.

But selecting the “right” images for blog posts, social media posts or video thumbnails has historically been a subjective process. Social media and SEO gurus have a slew of advice on picking the right images, but this advice typically lacks real empirical data.

This got me thinking: Is there a data-driven (or even better, an AI-driven) process for gaining deeper insight into which images are more likely to perform well, meaning more likely to attract human attention and sharing?

The technique for finding optimal photos

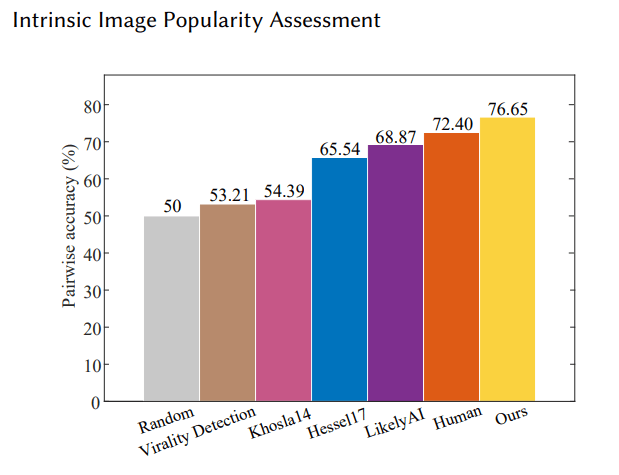

In July 2019, a fascinating machine learning paper called “Intrinsic Image Popularity Assessment” was published. Its model offers a reliable way to predict an image’s likely “popularity,” an estimate of how likely the image is to get likes on Instagram.

It also showed an ability to outperform humans, with a 76.65% accuracy on predicting how many likes an Instagram photo would garner versus a human accuracy of 72.40%.

Image Credits: Ding/Ma/Wang

I used the model and source code from this paper to work out how marketers can improve their chances of selecting images that will have the best impact on their content.

Finding the best screen caps to use for a video

One of the most important aspects of video optimization is the choice of the video’s thumbnail.

According to Google, 90% of the top-performing videos on YouTube use a custom-selected image. Click-through rates, and ultimately view counts, can be greatly influenced by how eye-catching a video title and thumbnail are to a searcher.

In recent years, Google has applied AI to automate video thumbnail extraction, attempting to help users find thumbnails from their videos that are more likely to attract attention and click-throughs.

Unfortunately, with only three provided options to choose from, it’s unlikely the thumbnails Google currently recommends are the best thumbnails for any given video.

That’s where AI comes in.

With some simple code, it’s possible to run the “intrinsic popularity score” (as derived by a model similar to the one discussed in this article) against all of the individual frames of a video, providing a much wider range of options.

The code to do this is available here. This script downloads a YouTube video, splits it into frames as .jpg images, and runs the model on each image, providing a predicted popularity score for each frame image.
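The frame-scoring loop described here can be sketched roughly as follows. This is not the linked script; it only illustrates the shape of the approach. The names `sample_timestamps` and `rank_frames` are hypothetical, and `score_fn` is a stand-in for whatever function wraps the popularity model and returns a score for one frame.

```python
from typing import Callable, Iterable, List, Tuple


def sample_timestamps(duration_s: float, samples_per_second: float) -> List[float]:
    """Return evenly spaced timestamps (in seconds) at which to grab frames."""
    if duration_s <= 0 or samples_per_second <= 0:
        return []
    step = 1.0 / samples_per_second
    count = int(duration_s * samples_per_second)
    return [round(i * step, 3) for i in range(count)]


def rank_frames(
    frames: Iterable[Tuple[float, object]],
    score_fn: Callable[[object], float],
    top_k: int = 20,
) -> List[Tuple[float, float]]:
    """Score each (timestamp, frame) pair and return the top_k as (score, timestamp)."""
    scored = [(score_fn(frame), timestamp) for timestamp, frame in frames]
    # Highest predicted popularity first.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]
```

Keeping the scorer as an argument means the same ranking code works whether the frames are file paths, in-memory images, or anything else the model wrapper accepts.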

Caveat: It is important to remember that this model was trained and tested on Instagram images. Given the similarity in behavior for clicking on an Instagram photo or a YouTube thumbnail, we feel it’s likely (though never tested) that if a thumbnail is predicted to do well as an Instagram photo, it will similarly do well as a YouTube video thumbnail.

Let’s look at an example of how this works.

Current thumbnail. Image Credits: YouTube

I had the intrinsic popularity model score three frames per second of this 23-minute video, which took about 20 minutes. The following were my favorites from the 20 images that had the highest overall scores.

Popularity score: 5.42. Image Credits: YouTube

Popularity score: 4.62. Image Credits: YouTube

Popularity score: 4.37. Image Credits: YouTube

Popularity score: 4.28. Image Credits: YouTube

Popularity score: 4.26. Image Credits: YouTube

Popularity score: 4.12. Image Credits: YouTube
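To put those numbers in perspective, here is a quick back-of-the-envelope calculation. It assumes the video is exactly 23 minutes long and the run took exactly 20 minutes; both figures are rounded in the text, so the results are estimates. Under those assumptions, the model scored roughly 4,140 frames at about 0.29 seconds each, and the 20 highest-scoring images represent less than half a percent of all frames.

```python
# Figures quoted in the text; both durations are rounded, so treat results as estimates.
SAMPLES_PER_SECOND = 3
VIDEO_MINUTES = 23
RUNTIME_MINUTES = 20
SHORTLIST_SIZE = 20

total_frames = SAMPLES_PER_SECOND * VIDEO_MINUTES * 60
seconds_per_frame = (RUNTIME_MINUTES * 60) / total_frames
shortlist_share = SHORTLIST_SIZE / total_frames

print(f"Frames scored: {total_frames}")
print(f"Seconds per frame: {seconds_per_frame:.2f}")
print(f"Top {SHORTLIST_SIZE} as share of all frames: {shortlist_share:.2%}")
```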

The image the YouTuber chose was not a frame from the video but instead looks like a custom photo they took specifically for the thumbnail. I ran this photo through the intrinsic popularity model, and it achieved a score of 3.37.

Thumbnail used by the YouTuber. This image does not appear in the video and appears to be a custom photo taken to be used as the thumbnail. This image achieved a score of 3.37, which beats out ~75% of captured frames, but it was still not in the top 50 scoring frames. Image Credits: YouTube

Thumbnail generated by YouTube. Score: 2.74. Image Credits: YouTube

Thumbnail generated by YouTube. Score: 1.79. Image Credits: YouTube

Thumbnail generated by YouTube. Score: 1.19. Image Credits: YouTube

Overall, the thumbnails selected by Google were images with intrinsic popularity scores around the average for all frames in the video.
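Comparisons like these (how many frames a given thumbnail beats, and how it sits relative to the average) are simple to compute once every frame has a score. The sketch below is illustrative only: the function names are hypothetical, and the four frame scores are invented for the example rather than taken from the video.

```python
from statistics import mean
from typing import Sequence


def share_of_frames_beaten(frame_scores: Sequence[float], candidate: float) -> float:
    """Fraction of frames whose score is strictly lower than the candidate's."""
    if not frame_scores:
        raise ValueError("frame_scores must not be empty")
    return sum(1 for score in frame_scores if score < candidate) / len(frame_scores)


def rank_among_frames(frame_scores: Sequence[float], candidate: float) -> int:
    """1-based rank the candidate would hold if placed among the frames."""
    return 1 + sum(1 for score in frame_scores if score > candidate)


# Invented scores, for illustration only.
frame_scores = [1.0, 2.0, 3.0, 4.0]
candidate = 3.37

print(f"Beats {share_of_frames_beaten(frame_scores, candidate):.0%} of frames")
print(f"Would rank #{rank_among_frames(frame_scores, candidate)}")
print(f"Average frame score: {mean(frame_scores):.2f}")
```

On a video with thousands of sampled frames, an image can beat three-quarters of them and still sit far outside the top 50, which is why both the share and the rank are worth checking.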

It is unclear whether the intrinsic popularity model would outperform Google’s thumbnail selector. Still, the fact remains that Google’s selector provides only three options. The process I explained gives you far more control by letting you choose from many strong thumbnail candidates.

Finding the best stock photos for a blog post or social media post

Finding the best possible stock photo for a post can be a tedious process, especially if you want to go beyond finding an image that makes sense for your content and instead find one that’s compelling and encourages people to read more.

With the intrinsic popularity model and Google image search, there is a more data-driven approach to finding better stock photos for your situation.

The process is pretty straightforward:

First, come up with a few good search queries related to your stock photo needs.

For the topic of hunting, for example, a good search query might be “hunting stock photos.”

Then, for each query, run a Python script that scrapes the first 100 returned images. For each scraped image, run the intrinsic popularity model and retrieve the scores.

The code needed to run the above functions can be found here.
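The overall shape of that process can be sketched as below. This is a minimal illustration, not the linked code: `score_search_results` is a hypothetical name, and `fetch_image_urls` and `score_image` are placeholders you would supply yourself (one that returns image results for a query, one that wraps the popularity model).

```python
from itertools import islice
from typing import Callable, Dict, Iterable


def score_search_results(
    queries: Iterable[str],
    fetch_image_urls: Callable[[str], Iterable[str]],
    score_image: Callable[[str], float],
    per_query: int = 100,
) -> Dict[str, float]:
    """Score up to per_query image results for each query, skipping repeats."""
    scores: Dict[str, float] = {}
    for query in queries:
        for url in islice(fetch_image_urls(query), per_query):
            # The same image can surface for several queries; score it once.
            if url not in scores:
                scores[url] = score_image(url)
    return scores
```

Deduplicating across queries matters because closely related search terms tend to return many of the same images, and scoring is the slow step.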

From this image search, out of the first 100 returned image results, these were the images with the five highest intrinsic popularity scores:

Popularity score: 5.66. Image Credits: Google image search

Popularity score: 5.51. (Probably not great for hunting, but shows the bias of the model for human faces.) Image Credits: Google image search

Popularity score: 4.57. Image Credits: YouTube

Popularity score: 4.54. Image Credits: Shutterstock

Sorting the results by score and then evaluating how well each one fits your specific need could be a great new approach to figuring out which images will work best for your articles and blog posts.
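The sort-then-review step might look something like this. It is a sketch under stated assumptions: `shortlist` and `is_relevant` are hypothetical names, the scores are the ones reported above, and the file names are invented placeholders. The relevance check stands in for your own judgment, since a high score alone does not make an image a good fit.

```python
from typing import Callable, Dict, List, Tuple


def shortlist(
    scores: Dict[str, float],
    is_relevant: Callable[[str], bool],
    limit: int = 5,
) -> List[Tuple[str, float]]:
    """Sort images by score (highest first) and keep those judged relevant."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [(name, score) for name, score in ranked if is_relevant(name)][:limit]


# Scores are the ones reported above; the file names are invented placeholders.
scores = {
    "result_a.jpg": 5.66,
    "result_b.jpg": 5.51,
    "result_c.jpg": 4.57,
    "result_d.jpg": 4.54,
}
# A high-scoring image that does not suit the topic, e.g. a close-up portrait.
off_topic = {"result_b.jpg"}

picks = shortlist(scores, lambda name: name not in off_topic, limit=3)
for name, score in picks:
    print(f"{score:.2f}  {name}")
```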

Final thoughts

We are entering a new age in which we can leverage AI to help us make better decisions as marketers.

The code used in this article was all taken off the shelf from open-source projects and cobbled together by a nonexpert Python tinkerer (me).

There are certainly many other ways this state-of-the-art model for intrinsic image popularity could be leveraged. I’m excited to hear other ideas for extending the value of this model. Cheers!