Training computers and robots to understand and recognize objects (an oven, for instance, as distinct from a dishwasher) is pretty crucial to getting them to the point where they can manage the relatively simple tasks that humans do every day. But even once you have an artificial intelligence trained to the point where it can tell your fridge from your furnace, you also need to make sure it can operate those things if you want it to be truly functional.

That’s where new work from Intel AI researchers, working in collaboration with UCSD and Stanford, comes in. In a paper presented at the Conference on Computer Vision and Pattern Recognition, the research team details how it created “PartNet,” a large data set of 3D objects with highly detailed, hierarchically organized and fully annotated part information for each object.

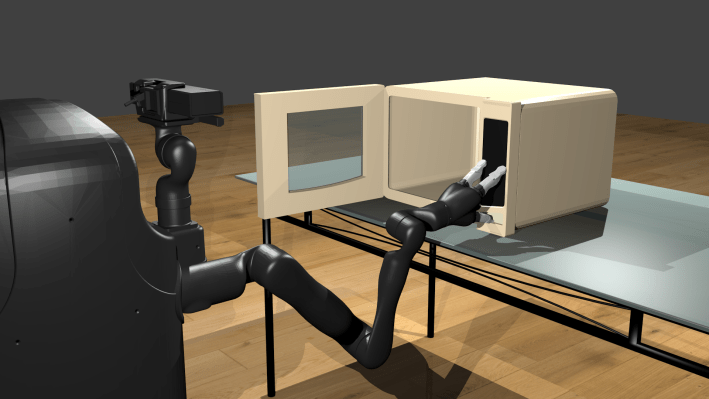

The data set is unique, and already in high demand among robotics companies, because it organizes objects into their segmented parts in a way that is well suited to building learning models for AI systems designed to recognize and manipulate these objects in the real world. So, for instance, in the example pictured above, if you’re hoping to have a robot arm turn on a microwave to reheat some leftovers, the robot needs to know about “buttons” and their relation to the whole.

Robots trained using PartNet and evolutions of this data set won’t be limited to operating computer-generated microwaves that look like someone found them on a curb with a “free” sign taped to the front. The data set includes more than 570,000 parts across more than 26,000 individual objects, and parts that are common to objects across categories are all marked as corresponding to one another, so that if an AI is trained to recognize a chair back on one variety of chair, it should be able to recognize it on another.

That’s handy if you want to redecorate your dining room, but still want your home helper bot to be able to pull out your new chairs for guests, just like it did with the old ones.
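For the programmers in the audience, here is a rough Python sketch of what a hierarchical, shared-label part annotation could look like. To be clear, this is only an illustration: the `Part` class, the `find_parts` helper and the label names are all made up for this example and are not PartNet’s actual file format or API.

```python
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass
class Part:
    """One node in a hypothetical part hierarchy (not PartNet's real schema)."""
    label: str
    children: List["Part"] = field(default_factory=list)

    def walk(self, path: str = "") -> Iterator[str]:
        """Yield the slash-separated path of this part and of every sub-part."""
        here = f"{path}/{self.label}" if path else self.label
        yield here
        for child in self.children:
            yield from child.walk(here)


def find_parts(root: Part, label: str) -> List[str]:
    """Return the paths of all parts under root whose own label matches."""
    return [p for p in root.walk() if p.split("/")[-1] == label]


# Illustrative objects; the part names are assumptions, not taken from the data set.
microwave = Part("microwave", [
    Part("body"),
    Part("door", [Part("handle")]),
    Part("control_panel", [Part("button"), Part("button")]),
])
dining_chair = Part("dining_chair", [Part("chair_back"), Part("seat"), Part("leg")])
office_chair = Part("office_chair", [Part("chair_back"), Part("seat"), Part("wheel_base")])

# The path records each button's relation to the whole microwave.
print(find_parts(microwave, "button"))

# The same label turns up on two different chairs.
for chair in (dining_chair, office_chair):
    print(find_parts(chair, "chair_back"))
```

The point of the tree is that a “button” is stored along with where it sits in the whole object, and the point of the shared labels is that a “chair_back” lookup works on both chairs without any chair-specific code.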

Admittedly, my examples are all drawn from a far-flung, as-yet hypothetical future. There are plenty of near-term applications of detailed object recognition that are more useful, and part identification can likely help reinforce decision-making about general object recognition, too. But the implications for in-home robotics are definitely more interesting to ponder, and it’s an area of focus for many of today’s efforts to commercialize advanced robotics.