OK, perhaps the events of the last few months have only moved the needle a bit, but the fact remains that we take a heck of a lot of pictures of ourselves with our hands covering our faces. And it turns out that’s a fairly serious problem for facial recognition.

Yes, I had fun attempting this 47 times at my local coffee shop.

If you’ve ever tried to use a filter in Snapchat or Facebook, you might have noticed how easy it is to throw everything off. Hands tend to be a particular pain for this type of computer vision because they share so many properties with faces, such as color and texture.

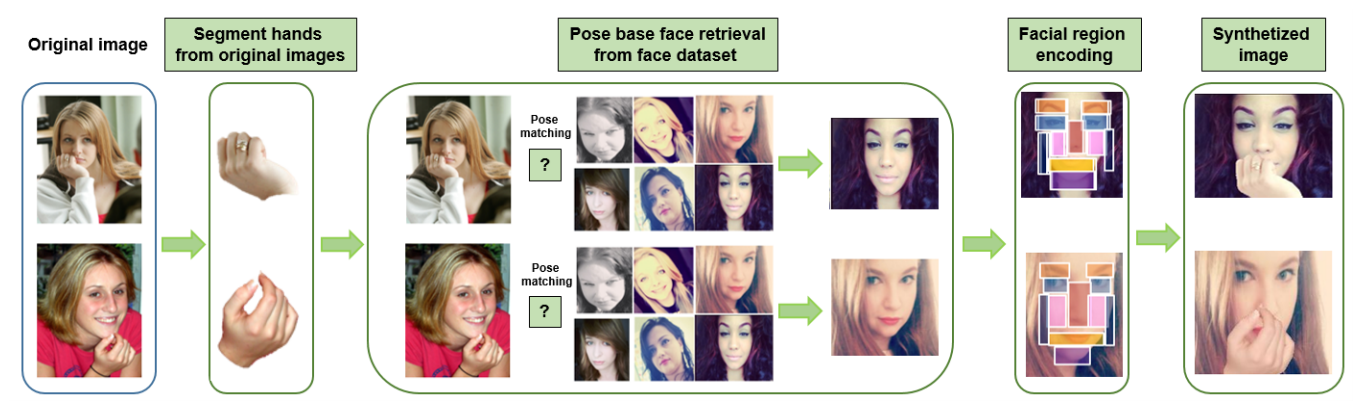

A team of researchers from the University of Central Florida and Carnegie Mellon University dedicated an entire paper to dealing with the problems facial occlusion poses to AI. The team of four created a method for synthesizing images of hands obstructing faces. This data can be used to improve the performance of existing facial recognition models and potentially even enable more accurate recognition of emotion.

Typically, facial recognition models work by identifying landmarks. Even when a model doesn’t encode it explicitly, the geometric relationship between your eyes and mouth is critical for recognition.
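To make the landmark idea a little more concrete, here is a toy sketch. It assumes some detector has already located three hypothetical 2D landmarks (`left_eye`, `right_eye`, `mouth`) and computes a single scale-invariant geometric feature from them. Real recognition models learn far richer representations than this; the point is only to show why a hand covering the mouth removes information a model would otherwise rely on.

```python
import math
from typing import Dict, Optional, Tuple

Point = Tuple[float, float]


def distance(a: Point, b: Point) -> float:
    """Euclidean distance between two 2D points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def eye_mouth_ratio(landmarks: Dict[str, Optional[Point]]) -> Optional[float]:
    """Toy feature: inter-eye distance divided by eye-midpoint-to-mouth distance.

    Dividing one distance by another cancels out image scale, so the same face
    photographed closer or farther away yields the same value. Returns None if
    any required landmark is missing (e.g. hidden behind a hand).
    The landmark names are hypothetical, not any particular library's format.
    """
    left = landmarks.get("left_eye")
    right = landmarks.get("right_eye")
    mouth = landmarks.get("mouth")
    if left is None or right is None or mouth is None:
        return None  # occluded landmark: the feature simply can't be computed
    eye_mid = ((left[0] + right[0]) / 2, (left[1] + right[1]) / 2)
    vertical = distance(eye_mid, mouth)
    if vertical == 0:
        return None  # degenerate geometry
    return distance(left, right) / vertical


face = {"left_eye": (30.0, 40.0), "right_eye": (70.0, 40.0), "mouth": (50.0, 80.0)}
print(eye_mouth_ratio(face))  # 1.0
print(eye_mouth_ratio({**face, "mouth": None}))  # None: mouth covered by a hand
```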

Despite the fact that we occlude our faces with our hands regularly, very little research has been done on performing facial recognition with hand occlusion. There just isn’t much data available: few clean, well-organized, naturalistic images to satiate the thirst of deep learning models.

“We were building models and we noticed that visual models were failing more than they should,” Behnaz Nojavanasghari, one of the researchers, explained to me in an interview. “This was related to facial occlusion.”

This is why creating a pipeline for image synthesis is so helpful. By masking hands out of their original images, the researchers can transplant them onto new images that lack occlusion. This gets pretty tricky because the placement and appearance of the hands have to look natural after the digital transplant.

Synthesized images are color-corrected, scaled and oriented to look like real photos. One benefit of this approach is that it creates a data set containing the exact same image both with and without occlusion.
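For readers curious what a hand transplant involves mechanically, here is a heavily simplified sketch in plain Python. It is an illustration under stated assumptions, not the researchers’ pipeline: images are hypothetical nested lists of RGB tuples, the hand mask is assumed to already exist, color correction is a basic per-channel mean shift toward an assumed skin-tone mean, scaling is nearest-neighbour, and orientation is reduced to an optional horizontal mirror instead of a full rotation.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]
Image = List[List[RGB]]
Mask = List[List[bool]]


def scale_nearest(grid: list, factor: float) -> list:
    """Nearest-neighbour resize of any 2D grid (works for images and masks)."""
    h, w = len(grid), len(grid[0])
    new_h, new_w = max(1, round(h * factor)), max(1, round(w * factor))
    return [[grid[min(h - 1, int(y / factor))][min(w - 1, int(x / factor))]
             for x in range(new_w)] for y in range(new_h)]


def mirror(grid: list) -> list:
    """Flip a 2D grid horizontally (e.g. to turn a left hand into a right hand)."""
    return [list(reversed(row)) for row in grid]


def mean_color(pixels: List[RGB]) -> RGB:
    """Per-channel mean of a list of RGB pixels."""
    n = len(pixels)
    return tuple(sum(p[c] for p in pixels) / n for c in range(3))


def color_correct(hand: Image, mask: Mask, target_mean: RGB) -> Image:
    """Shift masked hand pixels so their mean colour matches target_mean (clamped to 0..255)."""
    hand_pixels = [px for row, mrow in zip(hand, mask) for px, m in zip(row, mrow) if m]
    if not hand_pixels:
        return [list(row) for row in hand]  # nothing to correct
    src_mean = mean_color(hand_pixels)
    shift = [t - s for t, s in zip(target_mean, src_mean)]
    return [[tuple(min(255.0, max(0.0, px[c] + shift[c])) for c in range(3)) if m else px
             for px, m in zip(row, mrow)] for row, mrow in zip(hand, mask)]


def composite(face: Image, hand: Image, mask: Mask, top: int, left: int) -> Image:
    """Paste masked hand pixels onto a *copy* of face with the hand's corner at (top, left)."""
    out = [list(row) for row in face]
    for y, (row, mrow) in enumerate(zip(hand, mask)):
        for x, (px, m) in enumerate(zip(row, mrow)):
            fy, fx = top + y, left + x
            if m and 0 <= fy < len(out) and 0 <= fx < len(out[0]):
                out[fy][fx] = px
    return out


def synthesize_occlusion(face: Image, hand: Image, mask: Mask, skin_mean: RGB,
                         scale: float = 1.0, flip: bool = False,
                         top: int = 0, left: int = 0) -> Image:
    """Hypothetical end-to-end step: orient, scale, colour-correct, then paste."""
    if flip:
        hand, mask = mirror(hand), mirror(mask)
    hand, mask = scale_nearest(hand, scale), scale_nearest(mask, scale)
    hand = color_correct(hand, mask, skin_mean)
    return composite(face, hand, mask, top, left)
```

Because `composite` works on a copy, the untouched `face` and the returned occluded image form exactly the kind of with/without-occlusion pair described above. A production system would likely use proper rotation, smoother resampling and seam-aware blending rather than a hard binary paste.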

The downside is that the research team had no real, non-synthesized data set to compare against. Still, Nojavanasghari was confident that even if the generated images aren’t perfect, they’re good enough to push research forward in this niche space.

“Hands have a large degree of freedom,” Nojavanasghari added. “If you want to do it with natural data it’s hard to make people do all kinds of gestures. If you train people to do gestures, it is not naturalistic.”

When hands cover the face, they create uncertainty and remove critical information that can typically be extracted from a facial image. But hands also add information. Different hand placements can express surprise, anxiety or a complete withdrawal from the world and its dysfunction.

Startups like Affectiva make it their business to interpret emotion from images. Improving facial recognition, and emotion recognition in particular, has broad applications in advertising, user research and robotics, to name a few. And it might just make Snapchat a tad less likely to mistake your hand for your face.

Of course, it might also help machine intelligence keep up with the official gesture of 2017: the facepalm.

Hat tip: Paige Bailey