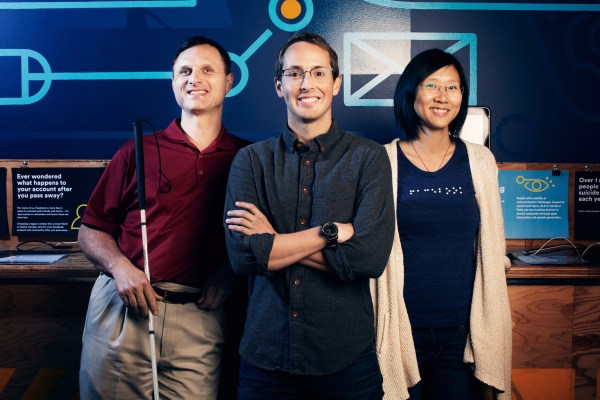

Facebook has launched a tool, Automatic Alternative Text (AAT), that lets blind and visually impaired people “see” images on the site. Designed for people who rely on screen readers to learn what's on screen, AAT uses object recognition technology to generate descriptions of photos on Facebook. The tool, led by Facebook's accessibility team, has been several months in the making.

“You just think about how much of your news feed is visual — and most of it probably is — and so often people will make a comment about a photo or they’ll say something about it when they post it, but they won’t really tell you what is in the photo,” Matt King, Facebook’s first blind engineer, told me back in October. “So for somebody like myself, it can be really like, ‘Ok, what’s going on here? What’s the discussion all about?’”

Before AAT, people using screen readers would hear only the name of the person who shared a photo, along with any text that person wrote to accompany it. Now, they might hear “image may contain three people, smiling, outdoors.”


The object recognition powering Facebook's AAT is based on a neural network with billions of parameters, trained on millions of examples. Neural networks are one type of machine learning model. When it comes to images, you can think of a neural network as a pattern recognition system.

AAT can recognize concepts in several categories, including transportation (“car,” “boat,” “motorcycle,” etc.), nature (“outdoor,” “mountain,” “wave,” “sun,” “grass,” etc.), sports (“tennis,” “swimming,” “stadium,” etc.), food (“ice cream,” “sushi,” “dessert,” etc.) and appearance (“baby,” “eyeglasses,” “smiling,” “jewelry,” “selfie,” etc.).
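To make the output format concrete, here is a minimal Python sketch of how a list of recognized concepts could be turned into a sentence like “image may contain three people, smiling, outdoors.” It assumes the recognizer returns hypothetical (concept, confidence) pairs and that only concepts above a confidence cutoff are mentioned. The function name `build_alt_text`, the 0.8 threshold and the bare “image” fallback are illustrative assumptions, not details Facebook has described here.

```python
from typing import Iterable

# Hypothetical cutoff: only concepts the model is fairly sure about are spoken.
# The article does not say how AAT decides which concepts to include.
CONFIDENCE_THRESHOLD = 0.8


def build_alt_text(detections: Iterable[tuple[str, float]],
                   threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Turn hypothetical (concept, confidence) pairs into a spoken description."""
    # Keep confident concepts, dropping duplicates while preserving order.
    kept = list(dict.fromkeys(
        concept for concept, score in detections if score >= threshold
    ))
    if not kept:
        # Hypothetical fallback when nothing is recognized confidently.
        return "image"
    return "image may contain " + ", ".join(kept)
```

For example, `build_alt_text([("three people", 0.95), ("smiling", 0.9), ("outdoors", 0.85), ("boat", 0.4)])` returns `"image may contain three people, smiling, outdoors"`. The low-confidence “boat” is left out. Hedging with “may contain” and omitting uncertain concepts trades completeness for fewer misleading descriptions.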

AAT is currently available for iOS screen readers set to English because that’s where Facebook sees the most use from blind and visually impaired people. Facebook will soon add the functionality to other platforms and languages.