The lifetime of the computer has been marked by an ongoing struggle to communicate with the machine. When two people in a conversation speak different languages, true communication occurs only when one learns to speak the language of the other.

At the beginning of the history of computation, it was the human who had to use the language of the machine; early programmers expressed themselves in binary. But over time, we built better interfaces. Binary machine code was replaced by languages employing commands that took the form of words. Typed command-line interfaces gave way to graphical interfaces and abstractions of file systems that suggested physical space. Commands took the form of mouse or swipe gestures.

And now, the maturation of natural language processing (NLP) technologies means that we have started to “talk” to our phones, our cars and our toys. Through these technologies, we are being introduced to digital assistants and textual interfaces that empower us to help ourselves.

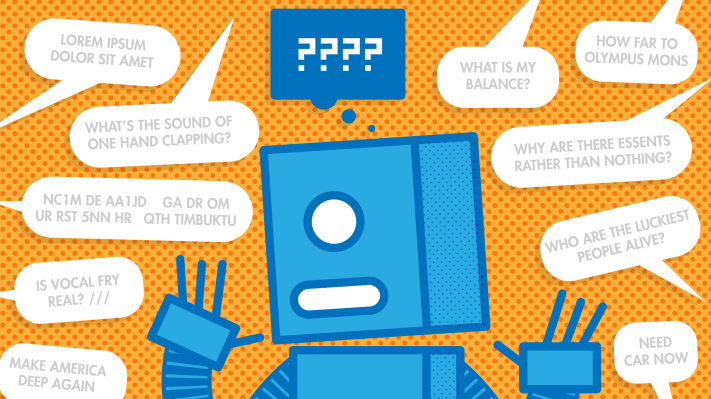

Designing these systems requires overcoming certain challenges, of course. Cognitive ergonomics is a design philosophy recognizing that just as an ergonomic keyboard might bend so that the user’s wrists do not have to, a system’s design should bend so that the user’s natural processes for accomplishing a task do not have to. More and more, users expect not to be forced to bend in order to communicate with a computer.

When the communication takes the form of natural language, people would prefer to be able to converse as if the dialogue partner were another human. A move away from “interactive responses” and toward “dialogue,” however, entails more than just a shift in thinking away from menus and keyword detection.

Designing systems for natural human communication is challenging; dialogue breaks the “rules.” For example, it is not news to anyone who communicates via any of the text-based channels that these environments have developed a dialect of their own. “Textspeak” often involves deliberate modifications to the spelling and grammatical standards of natural language, and this has spawned entire domains of linguistic research.

The phenomenon, however, is hardly new to human communication. Morse code operators, in the interest of economizing their keystrokes, also developed a shorthand that can still be observed today by listening in on the conversation between any two ham operators, such as:

NC1M DE AA1JD GA DR OM UR RST 5NN HR QTH TIMBUKTU OP IS MATT HW? NC1M DE AA1JD KN

Translation: To NC1M from AA1JD: Good afternoon, dear old man (buddy). Your signal is very readable (5) and very strong (9), with very good tone (9). I’m located in Timbuktu. The operator’s (my) name is Matt. How do you copy? To NC1M from AA1JD, listening for a response from a specific station.

Multiple publications have claimed that roughly 15 percent of the words occurring in SMS and Twitter text are not found in the dictionary, which is a significant problem for automated NLP tools hoping to communicate with users via text.

Furthermore, while there will always be the “fat fingers” problem of simple unintentional typos, many of these spelling variants are intentional. For brevity, style or emphasis, texters deviate from standard spellings with acronyms, shortened or simplified versions of words, and even lengthened variations. One study found an average of 5.5 shortened spellings per text message sent by English-speaking millennials.

How can a computer understand this? One option is to add “ROTFL” to the dictionary. But while there are many resources listing common slang and abbreviations, this will not cover the many extemporized variations. This means we need to augment our slang dictionary with a reliable way to transform unrecognized text into standard spelling.

Most approaches normalize text by generating potential respelling candidates and ranking them from most likely to least likely in order to pick the top one. In typo correction, respelling candidates are generated through knowledge of how fingers or memories typically stumble.

Thankfully, we can do the same with intentional spelling variants; some studies have shown that if you can describe the systematic ways in which people typically modify words in text-based communication, you can use this to generate and rank respelling possibilities effectively. If you augment this domain knowledge with semantic (meaning) and syntactic (grammar) context from applying natural language understanding (NLU) to the rest of the utterance, the ranking will be even more accurate.
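The generate-and-rank idea can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions, not the method of any particular study: the `SLANG` and `VOCAB` tables, the two modification patterns (letter lengthening and letter dropping) and the scoring rule are all invented for the example, and the context-based ranking described above is left out.

```python
import re
from difflib import SequenceMatcher

# Toy resources, invented for illustration only.
SLANG = {"rotfl": "rolling on the floor laughing", "u": "you", "gr8": "great"}
VOCAB = {"see": 60, "you": 100, "so": 80, "great": 40,
         "tomorrow": 50, "tonight": 30, "thanks": 40, "please": 45}


def is_subsequence(short, long):
    """True if the letters of `short` appear in `long` in the same order."""
    remaining = iter(long)
    return all(ch in remaining for ch in short)


def candidates(token):
    """Generate respelling candidates from two assumed modification patterns."""
    found = set()
    # Lengthening for emphasis: collapse runs of 3+ letters to 2, then to 1.
    for keep in (2, 1):
        squeezed = re.sub(r"(.)\1{2,}", lambda m: m.group(1) * keep, token)
        if squeezed in VOCAB:
            found.add(squeezed)
    # Shortening by dropping letters: same first letter, letters kept in order.
    for word in VOCAB:
        if (word[0] == token[0] and len(word) > len(token)
                and is_subsequence(token, word)):
            found.add(word)
    return found


def score(token, candidate):
    """Rank by surface similarity first, then by (toy) word frequency."""
    similarity = SequenceMatcher(None, token, candidate).ratio()
    return (similarity, VOCAB[candidate])


def normalize_token(token):
    lowered = token.lower()
    if lowered in VOCAB:          # already a standard spelling
        return lowered
    if lowered in SLANG:          # known abbreviation: direct lookup
        return SLANG[lowered]
    ranked = sorted(candidates(lowered),
                    key=lambda c: score(lowered, c), reverse=True)
    return ranked[0] if ranked else token  # unknown: leave untouched


def normalize(text):
    return " ".join(normalize_token(t) for t in text.split())


print(normalize("see u tmrw"))  # prints "see you tomorrow"
```

With a vocabulary this small, most tokens have a single candidate. In a realistic lexicon many words would compete for the same shortened form, which is where the ranking step, and ideally the surrounding context, does the real work.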

Another challenge of natural dialogue is that its progression is not always strictly linear, as is seen at the beginning of this article. When users are asked a question, it is natural for them to respond with a question of their own (“What is my balance?”).

Natural dialogue often enters into a short digression in which the system must recognize that the dialogue act of the user’s utterance was not an answer, but a query for relevant information; and the system must be able to answer the query. Then the dialogue initiative can return to the system and continue naturally. A user may also provide more information than was strictly asked (“From savings to checking.”), or may speak ambiguously (“What is my balance?” Of which account? In this case, either is relevant, and to save additional dialogue turns clarifying the intent, both can be provided).
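A small slot-filling sketch in Python shows how these three behaviors might fit together. Everything here is an assumption made for illustration: the `TransferDialogue` class, the account data, the prompts and the keyword-based detection of a question are hypothetical stand-ins for what a real system would do with proper natural language understanding.

```python
import re

# Hypothetical account data and prompts, invented for illustration.
ACCOUNTS = {"savings": 1200.00, "checking": 350.00}
PROMPTS = {
    "source": "Which account would you like to transfer from?",
    "target": "Which account would you like to transfer to?",
    "amount": "How much would you like to transfer?",
}


class TransferDialogue:
    def __init__(self):
        self.slots = {name: None for name in PROMPTS}

    def next_prompt(self):
        """Ask for the first missing slot, or confirm once all are filled."""
        for name, prompt in PROMPTS.items():
            if self.slots[name] is None:
                return prompt
        return "Transferring ${amount:.2f} from {source} to {target}.".format(
            **self.slots)

    def handle(self, utterance):
        text = utterance.lower().strip()
        # Digression: the user asked a question instead of answering.
        if text.endswith("?") and "balance" in text:
            named = [a for a in ACCOUNTS if a in text]
            shown = named or list(ACCOUNTS)  # ambiguous: report every account
            answer = " ".join(
                f"Your {a} balance is ${ACCOUNTS[a]:.2f}." for a in shown)
            # The initiative returns to the system by repeating the open prompt.
            return answer + " " + self.next_prompt()
        self._fill_slots(text)
        return self.next_prompt()

    def _fill_slots(self, text):
        """Accept every slot the utterance supplies, not only the one asked for."""
        for slot, pattern in (("source", r"\bfrom (\w+)"),
                              ("target", r"\bto (\w+)")):
            match = re.search(pattern, text)
            if match and match.group(1) in ACCOUNTS:
                self.slots[slot] = match.group(1)
        amount = re.search(r"\d+(?:\.\d+)?", text)
        if amount:
            self.slots["amount"] = float(amount.group())
        # A bare account name answers whichever account slot is still pending.
        bare = text.strip(" .!")
        if bare in ACCOUNTS:
            for slot in ("source", "target"):
                if self.slots[slot] is None:
                    self.slots[slot] = bare
                    break


dialogue = TransferDialogue()
print(dialogue.next_prompt())
print(dialogue.handle("What is my balance?"))
print(dialogue.handle("From savings to checking."))
print(dialogue.handle("100"))
```

The design choice worth noting is that the dialogue state is a set of slots rather than a fixed script of turns. The system always asks for whatever is still missing, so a digression or an over-informative answer does not derail the exchange.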

Dialogue systems therefore must be flexible about more than spelling; they must also avoid restricting the interaction flow to a prompt-and-response exchange. As these challenges and others are addressed, this can become the era of successful and powerful interactions, in which the machine, and not the user, bends to meet us in the middle ground.

Author’s note: The Morse code example was modified from Wikipedia with thanks to NC1M for additional consultation.