We (humanity, that is) created 4.4 zettabytes of data last year. This is expected to rise to 44 zettabytes by 2020. And no, I didn’t make up the word “zettabytes.” For scale, it is estimated that 42 zettabytes could store all human speech ever spoken. One zettabyte is around 250 billion DVDs, almost enough to fit the whole Friends series.

All that data wouldn’t be any use to us if we couldn’t move it around quickly and reliably. We are constantly sending, receiving and streaming. We live in an age where anyone with a smart handheld device (and there are about 2.6 billion of them) can instantly become a video streamer. That count doesn’t even include big businesses and governments, most of which now do the majority of their communication digitally, adding a prodigious number of bits and bytes.

As connectivity continues to skyrocket, will we be able to move this data fast enough? Are we willing to pay the price to keep moving it faster and more reliably?

To c Or Not To c

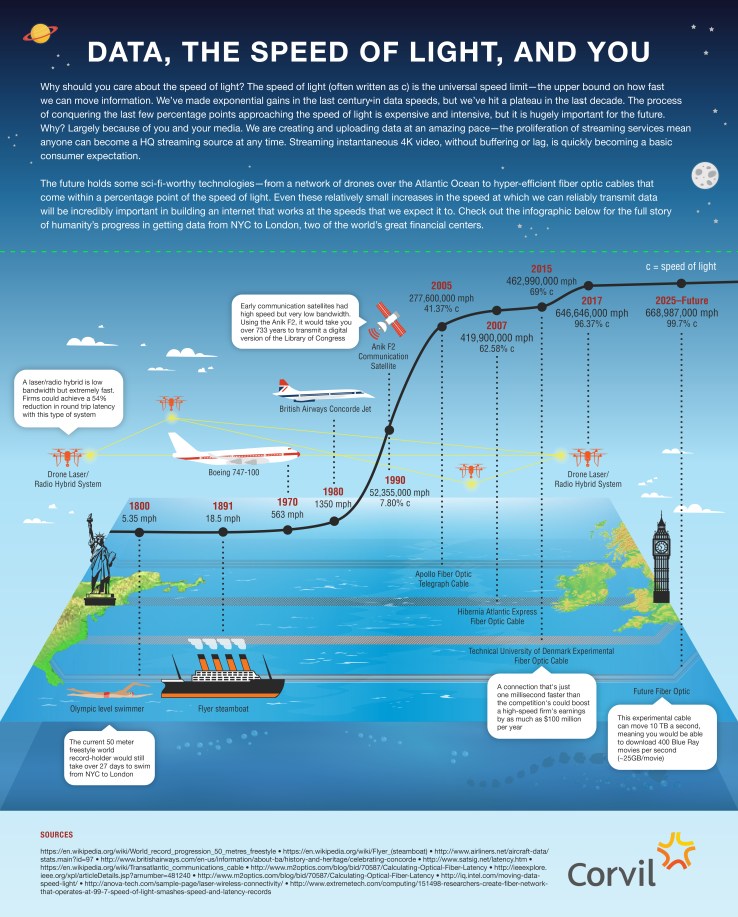

Current cables are very fast, but our data creation and consumption are quickly catching up. Google Fiber, offering one of the fastest speeds available to regular consumers, has a transfer rate of one gigabit per second, and the Hibernia Express, a transatlantic fiber optic cable currently under construction for use in financial markets, will have a rate of 8.8 terabits per second (8,800 times the rate of Google Fiber). So why not just go faster?

We are running up against the universal speed limit.

There are rules to the universe. Unfortunately, we don’t know all of them. What we do know is that information has a speed limit: namely, c, the speed of light. In a vacuum, light travels about 300,000,000 meters per second. The two cables mentioned above, Google Fiber and the Hibernia Express, both move data at about 2/3 c (two-thirds the speed of light), limited by the refractive properties of the glass fiber. Basically, light bounces around and slows down inside the cable.
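To put that limit in concrete terms, here is a rough sketch of the minimum one-way travel time for a signal, in a vacuum and in glass fiber at two-thirds of c. The 6,000 km path length is a hypothetical round number chosen for illustration, not the length of any cable mentioned here, and the function name is my own.

```python
C_VACUUM_M_PER_S = 299_792_458  # speed of light in a vacuum (exact by definition)
FIBER_FRACTION_OF_C = 2 / 3     # approximate figure for glass fiber quoted in the text


def one_way_delay_ms(distance_km: float, fraction_of_c: float = 1.0) -> float:
    """Minimum one-way propagation delay in milliseconds.

    Ignores routers, repeaters and every other real-world source of delay.
    """
    speed_m_per_s = C_VACUUM_M_PER_S * fraction_of_c
    seconds = distance_km * 1_000 / speed_m_per_s
    return seconds * 1_000


# ASSUMPTION: an illustrative path length, not a measured cable route.
ASSUMED_PATH_KM = 6_000

vacuum_ms = one_way_delay_ms(ASSUMED_PATH_KM)
fiber_ms = one_way_delay_ms(ASSUMED_PATH_KM, FIBER_FRACTION_OF_C)

print(f"vacuum: {vacuum_ms:.1f} ms")
print(f"fiber:  {fiber_ms:.1f} ms")
```

Whatever distance you plug in, the fiber figure comes out 1.5 times the vacuum figure. No amount of engineering on the endpoints can shrink that number; only a shorter path or a faster medium can.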

The Need For Speed

Outside of business, milliseconds may not mean millions of dollars, but today’s consumer expectations are higher than ever. Viewers now consider instantaneous, on-demand access the norm. In this ecosystem, the word “buffering” means something has gone wrong. Slow load times for images while online shopping can mean clicking on the next site and never returning. And for the streaming service provider or the online retailer, this means dents in the bottom line.

The rate at which we create data is not going to slow down anytime soon. The advent of the Internet of Things looms on the horizon — a predicted 25 billion devices (three per person on Earth) each producing, sending and receiving data. Is our infrastructure ready for that cascade of data?

The Age Of The Plateau

Notice how the line on the infographic below flattens out over the last few decades? We’ve made huge advances in previous decades, but now the rate of progress is affected by very different struggles. The most advanced technologies are only a few percentage points away from the speed of light — but the technology to reliably utilize that speed may be many years and many millions of dollars away.

An increase of a small percentage in speed requires intensive resources. The Hibernia Express will shave off 6 milliseconds of latency for transatlantic transmission. It is estimated that the project will cost more than $300 million, and firms will pay millions for access to the line. The secret behind the Hibernia’s speed is quite simple: They laid the cable in a straighter line across the Atlantic.
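As a back-of-the-envelope check on why a straighter line helps, the sketch below converts a latency saving into the length of fiber that would have to be removed to achieve it. It assumes the signal moves at roughly 2/3 c, as described earlier. The source figure does not say whether the 6 milliseconds is one-way or round-trip, so both readings are shown; the function name is hypothetical.

```python
C_VACUUM_M_PER_S = 299_792_458  # speed of light in a vacuum
FIBER_FRACTION_OF_C = 2 / 3     # approximate speed in glass fiber, per the text


def fiber_km_for_latency_ms(latency_ms: float,
                            fraction_of_c: float = FIBER_FRACTION_OF_C) -> float:
    """Length of fiber (km) that a signal crosses in `latency_ms` milliseconds."""
    speed_km_per_ms = C_VACUUM_M_PER_S * fraction_of_c / 1_000 / 1_000
    return latency_ms * speed_km_per_ms


SAVED_MS = 6  # latency saving quoted in the text

# ASSUMPTION 1: the 6 ms is a one-way saving.
one_way_km = fiber_km_for_latency_ms(SAVED_MS)

# ASSUMPTION 2: the 6 ms is a round-trip saving, so 3 ms each way.
round_trip_km = fiber_km_for_latency_ms(SAVED_MS / 2)

print(f"if one-way:    about {one_way_km:,.0f} km less fiber")
print(f"if round-trip: about {round_trip_km:,.0f} km less fiber")
```

Under either reading, a few milliseconds corresponds to hundreds of kilometers of cable, which is why the route matters as much as the glass.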

For the average consumer, and even those with intense speed needs, like financial markets, the faster transfer doesn’t make that much of a difference. But when we consider the sheer volume of data that will need to be moved constantly, reliably and without issue, the importance of these incremental steps toward better infrastructure is clear. Every tenth of a percentage point will be hugely impactful.

Faster, Better, Stronger

Luckily, breakthroughs are coming quickly, albeit often at great price. Researchers in the U.K. have created fiber cables that move data at 99.73 percent of the speed of light, and combined them with technologies that allow for an incredible 73.7 terabits per second. At that rate, a 5GB HD movie would download in well under a second. These cables, however, are so far only efficient over very short distances, so further work is needed.
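For a sense of what those rates mean, here is a small sketch that estimates how long a 5GB file would take at each of the transfer rates quoted in this article. It assumes decimal units (1 GB = 10^9 bytes), a link used at its full rated speed, and no protocol overhead, none of which holds in practice. The function and variable names are mine.

```python
def transfer_time_s(size_bytes: float, rate_bits_per_s: float) -> float:
    """Idealized transfer time in seconds: payload bits divided by link rate."""
    return size_bytes * 8 / rate_bits_per_s


MOVIE_BYTES = 5 * 10**9  # 5 GB, ASSUMING decimal gigabytes

# Rates as quoted in the article, in bits per second.
RATES_BPS = {
    "Google Fiber (1 Gbps)": 1e9,
    "Hibernia Express (8.8 Tbps)": 8.8e12,
    "U.K. research fiber (73.7 Tbps)": 73.7e12,
}

for name, rate in RATES_BPS.items():
    millis = transfer_time_s(MOVIE_BYTES, rate) * 1_000
    print(f"{name}: {millis:,.2f} ms")
```

On these assumptions the consumer connection needs 40 seconds for the movie, while the research fiber needs roughly half a millisecond.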

A good way to avoid losing speed to the physical medium you are sending the light through is to avoid it altogether. Microwave signals, relayed through (mostly) empty air, travel much faster than light in glass. Relays must be built as a series of stationary towers, so crossing an ocean this way would pose serious construction challenges. Some have proposed a series of stationary barges holding towers and, in a truly sci-fi twist, a network of hovering drones that would pass the signal over short distances using lasers.

All this progress will come at great cost. The financial sector has typically led the charge because of its reliance on absolute top speeds, and those technologies, proven in the fire of the international markets, eventually make their way into general business use.

We are spending billions on this development, yet progress is slow compared to the increase in speeds in previous decades. Every fraction of the speed of light matters if we expect to keep up with the incredible amount of data we will be capable of producing in the coming years.

Milliseconds don’t matter until there are millions of them, and millions upon millions of people expecting to consume at speeds close to the speed of light.