To some they’re the holy grail of computing: computer chips that work like the brain, opening up a wealth of possibilities from artificial intelligence to the ability to simulate whole artificial personalities.

To others they are another in a long list of interesting but impractical ideas, not too dissimilar to the perennially promised flying car. They’re called neuromorphic chips, and the truth about them lies somewhere in the middle.

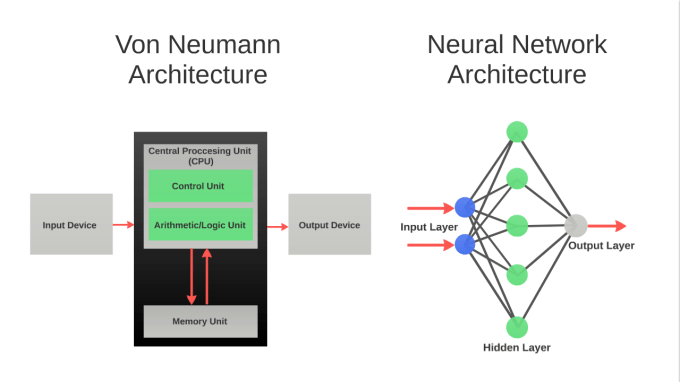

The main force driving interest in neural network technology is an inconvenient truth: the architecture that drove the information age, created by famed physicist & mathematician John von Neumann (known as von Neumann architecture), is running into the fundamental limits of physics.

Image courtesy of NeuroMem

You may have seen these effects already: notice how your shiny new smartphone isn’t light years ahead of your last upgrade? That’s because chips that use von Neumann architecture face a performance bottleneck, and designers must now balance the functionality of the chips they design against how much power they’ll consume. Otherwise you’ll have an insanely powerful phone with a battery that lasts all of 12 minutes.

In contrast, nature’s own computing device, the brain, is extremely powerful, and the one you’re using to read this uses only about 20 watts of power. Access to this sort of efficiency would enable a new era of embedded intelligence. So what’s the holdup?

Neural networks are not a new idea. Research into the technology traces its roots back to the 1940s, alongside regular computer chip technology.

Today, software emulations of neural networks power many of the image recognition capabilities of internet giants like Google and Facebook, which are investing heavily in startups. However, because these emulations need to run on expensive supercomputers, their potential is always limited. They run on a system architecture that is serial by nature rather than parallel, and parallelism is one of the key properties that make neural networks so appealing in the first place.
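To put rough numbers on the serial-versus-parallel point, here is an idealized cost model in Python. Treat it as a sketch under stated assumptions rather than a measurement of any real chip: the function names, the neuron counts and the 256-value input are all made up for illustration, and it assumes each neuron does nothing more than compare an input against one stored pattern.

```python
# Idealized cost model: an illustration, not a benchmark of real hardware.
# Assumption: each artificial neuron compares one input vector against
# its own stored pattern, one value per step.

def serial_steps(num_neurons: int, vector_length: int) -> int:
    """A single processor visits every neuron in turn."""
    return num_neurons * vector_length


def parallel_steps(num_neurons: int, vector_length: int) -> int:
    """Every neuron has its own circuitry, so all compare at the same time."""
    return vector_length if num_neurons > 0 else 0


for neurons in (10, 1000):
    print(neurons, serial_steps(neurons, 256), parallel_steps(neurons, 256))
# prints:
# 10 2560 256
# 1000 256000 256
```

In this simplified picture, adding neurons makes the serial emulation proportionally slower, while the parallel version takes the same number of steps no matter how many neurons there are.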

So, how long will it be before we get these neural network chips?

Actually, a class of neuromorphic chip has been available since 1993. That year, a small independent team approached IBM with an idea to develop a silicon neural network chip called the ZISC (Zero Instruction Set Computer), which became the world’s first commercially available neuromorphic chip.

The independent team had prior experience building software neural networks for pattern recognition at CERN’s Super Proton Synchrotron, a particle smasher and the older sibling of the more famous Large Hadron Collider of Higgs boson fame.

Frustrated by the inherent limitations of running neural networks on von Neumann systems, they had come to the conclusion that creating neuromorphic hardware was the best way to leverage the unique capabilities of neural networks and, with IBM’s expertise, the ZISC36 with 36 artificial neurons was born. IBM, alongside General Vision Inc., sold the chip and its successor, the ZISC78 (78 neurons), for 8 years from 1993 until, in 2001, IBM exited commercial ZISC chip manufacturing and development.

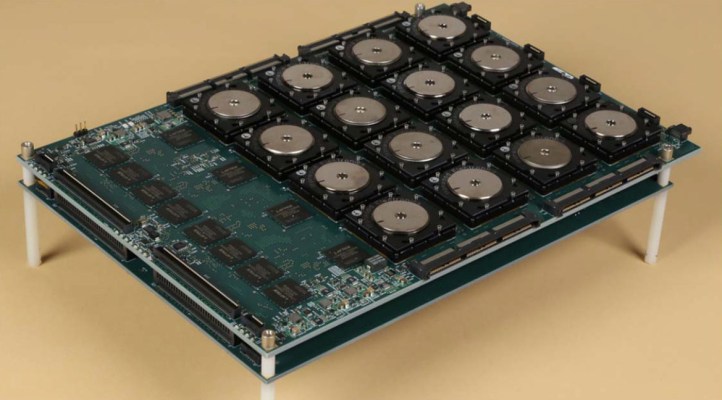

General Vision decided to carry on, as they believed the technology had many unexplored applications. They leveraged their expertise to continue developing the neuromorphic technology until, after 5 years of project work, they managed to raise enough capital to build a successor to the ZISC. In 2007 they launched the Cognitive Memory 1000, aka the CM1K: a neuromorphic chip with 1,024 artificial neurons working in parallel while consuming only 0.5 watts, able to recognize and respond to patterns in data (images, code, text, anything) in just a few microseconds. It would seem that this gamble on neuromorphic chips could pay off, because soon after, neuromorphic research stepped up several gears.

In 2008, just one year after the CM1K was developed, DARPA announced the SyNAPSE program – Systems of Neuromorphic Adaptive Plastic Scalable Electronics – and awarded contracts to IBM and HRL Labs to develop neuromorphic chips from the ground up.

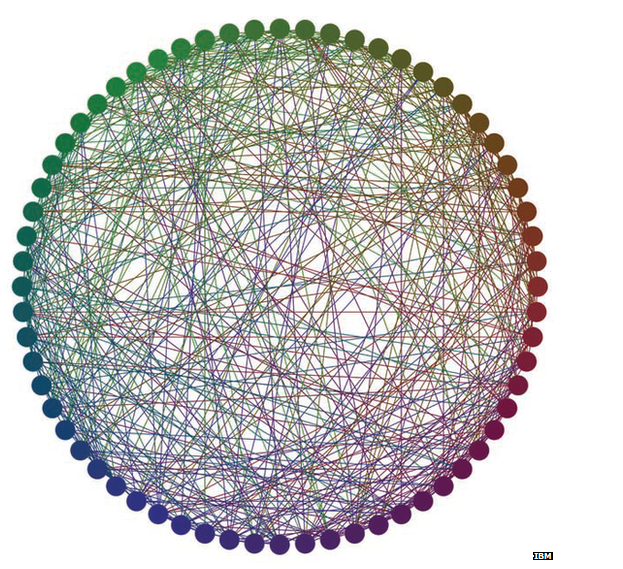

Another year later, in 2009, a team in Europe reported developing a chip with 200,000 neurons and 50 million synaptic connections. It came out of the FACETS (Fast Analog Computing with Emergent Transient States) program, which in turn has led to a new European initiative called the Human Brain Project, which launched in 2013.

Numerous universities also began (or renewed old) programs to look into neuromorphic chips, and interest has begun to gain momentum.

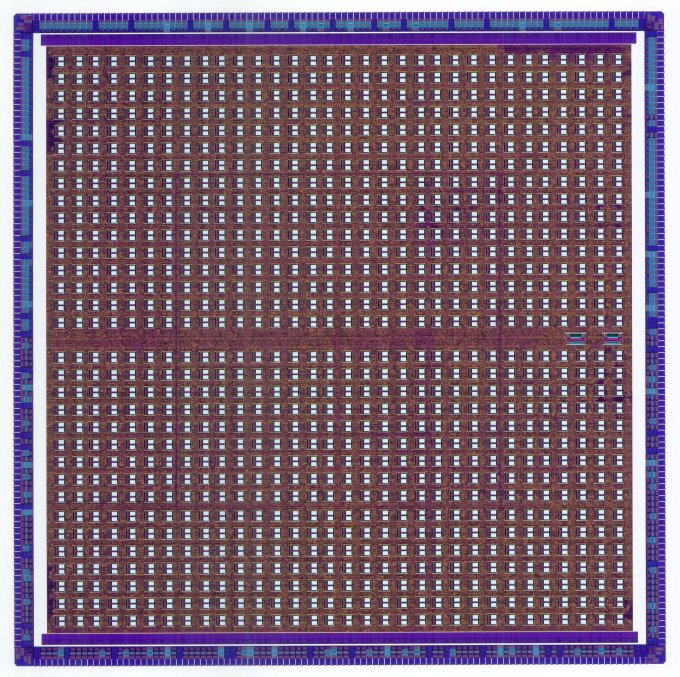

In 2012 Intel announced they were getting involved in neuromorphic chips with a new architecture, while Qualcomm threw their hat into the ring in 2013, backed by freshly acquired neural network startups. Most recently, in August 2014, IBM announced TrueNorth, a neuromorphic chip with 1,000,000 neurons and 256 million programmable synapses, and one of the world’s most powerful and complicated chips. It comes complete with its own custom programming language, and all of it came from DARPA’s SyNAPSE program.

TrueNorth is deeply impressive technology, a concept that could herald some of the loftier dreams of neural networks, but sadly it is unlikely to be found in your next tablet for cost reasons. It does certainly prove a point though: we’re entering an age where neural network chips can actually work and are no longer purely in the realm of science fiction.

Why has it taken so many years for these neuromorphic technologies to take off?

In part, the success of Moore’s Law meant engineers didn’t need neural network architectures. It may also surprise you to hear that many of the issues behind the slow adoption of neuromorphic chips have never been technical; they stem from a lack of belief. The renewed global interest in neuromorphic chips has opened people’s minds to the idea of ‘brain chips’, which is why an 8-year-old chip design can still get people excited. This is a technology that can be taught (yes, that’s right: not programmed, taught) to recognize just about anything, from a face to a line of code, and then find what it has been taught anywhere in enormous volumes of data in just a few microseconds. It can also be integrated with almost any modern electronics very easily.
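What might "taught, not programmed" look like? Here is a minimal sketch in Python, assuming a simple nearest-stored-pattern scheme. It is an illustration only and makes no claim about how the CM1K or any other chip works internally; `TeachableMemory`, `teach`, `recognize` and `max_distance` are hypothetical names, and the loop over neurons is the serial stand-in for what dedicated hardware would do for every neuron at once.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Neuron:
    """One stored example: a pattern and the label it was taught with."""
    pattern: tuple[int, ...]
    label: str


@dataclass
class TeachableMemory:
    """Hypothetical teachable recognizer (illustration only)."""
    neurons: list[Neuron] = field(default_factory=list)

    def teach(self, pattern, label: str) -> None:
        # Teaching commits a new neuron; no recognition rules are written.
        self.neurons.append(Neuron(tuple(pattern), label))

    def recognize(self, pattern, max_distance: int):
        # Compare the input against every stored pattern and keep the
        # closest one, measured as the sum of absolute differences.
        best_label, best_distance = None, None
        for neuron in self.neurons:
            distance = sum(abs(a - b) for a, b in zip(neuron.pattern, pattern))
            if best_distance is None or distance < best_distance:
                best_label, best_distance = neuron.label, distance
        # Nothing close enough means the input is treated as unknown.
        if best_distance is None or best_distance > max_distance:
            return None
        return best_label


memory = TeachableMemory()
memory.teach([0, 0, 9, 9], "edge")
memory.teach([5, 5, 5, 5], "flat")

print(memory.recognize([1, 0, 8, 9], max_distance=4))  # prints: edge
print(memory.recognize([9, 9, 0, 0], max_distance=4))  # prints: None
```

Notice that nobody wrote a rule describing what an "edge" is. The memory was shown one example and then matched a slightly different input to it, while rejecting an input that resembles nothing it has seen.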

Neuromorphic technologies have the potential to transform everything. One day maybe we will be able to download ourselves onto a neuromorphic brain chip and ‘live forever’, but long before then more practical applications beckon.

Think of EEG/ECG monitors that automatically recognize the warning signs in an irregular heartbeat, or a phone that knows the faces of the friends in a picture it has taken and automatically sends them all a copy. Think of pet doors that admit only one individual pet, a car that recognizes its driver and automatically adjusts to their settings, robots or drones that can follow anything they’re trained to recognize, a cookie jar that locks when it recognizes unauthorized hands… the list of applications is almost endless.

We can enable the things we use to ‘know’ us, creating neuromorphic things and beginning an era of truly ‘smart’ technologies. Let’s be honest: in reality your smartphone isn’t ‘smart’, it’s just a well-connected tool that you use. For something to be smart, as in ‘intelligent’, it must first have some way of recognizing the data and inputs that surround it, and pattern recognition is something neuromorphic chips are very good at. You could even call it cognition.