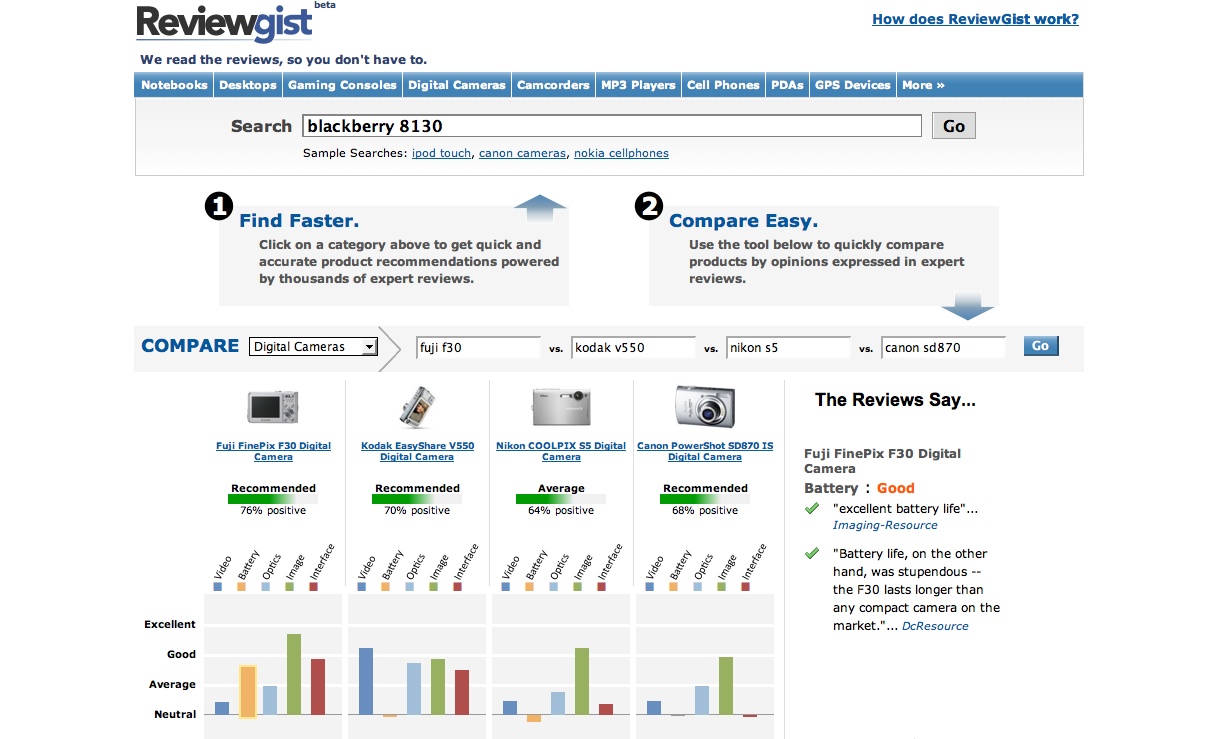

Sites like ReviewGist are great for consumers but not so great for gadget bloggers. By collecting a number of reviews and adding metadata like price and features, they automatically create collections of data that are actually quite useful when shopping and, unlike something like Amazon.com, offer more in-depth information than standard user-generated reviews.

ReviewGist is similar to Wize.com and Compete, but the creator, Nishant Soni, says:

Our scope might seem similar to Wize.com but we analyze reviews much deeper and try to understand what aspect/part of the product is the reviewer rating good or bad. This deeper semantic analysis then enables our rating algorithms, product comparison and recommendation tools.

Playing with it turned up some holes in its database. For example, it can’t tell the difference between “Kodak EASYSHARE 5300 InkJet Printer” and “Kodak EASYSHARE 5300 All-in-One InkJet Printer,” something that could be remedied by focusing on the model number. You also get gems like “two USB ports… Review.zdnet.com” when the robot tries to extract interesting information from the reviews it scans. The range of sites it visits wasn’t very broad either. I mostly saw CNET and ZDNet with a few odd blogs and magazine websites in there.
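To make the model-number idea concrete, here is a minimal Python sketch of how duplicate listings could be merged. It is only an illustration, not ReviewGist's actual method: the function names `product_key` and `group_listings` are hypothetical, and it assumes the brand is the first word of a title and the model number is the first word containing a digit.

```python
from collections import defaultdict


def product_key(title):
    """Return a (brand, model) key for a listing title, or None.

    Assumptions (hypothetical heuristic, not ReviewGist's real logic):
    the brand is the first word and the model number is the first
    word that contains at least one digit.
    """
    tokens = title.split()
    if not tokens:
        return None
    for token in tokens:
        if any(ch.isdigit() for ch in token):
            return (tokens[0].lower(), token.lower())
    return None


def group_listings(titles):
    """Group titles that share a (brand, model) key.

    Titles with no model-like word are kept as their own group,
    keyed by the full title.
    """
    groups = defaultdict(list)
    for title in titles:
        key = product_key(title)
        groups[key if key is not None else title].append(title)
    return dict(groups)


listings = [
    "Kodak EASYSHARE 5300 InkJet Printer",
    "Kodak EASYSHARE 5300 All-in-One InkJet Printer",
]
groups = group_listings(listings)
```

With the two Kodak titles from the example, both listings land in a single group under the key `("kodak", "5300")`. A real system would need something sturdier, since titles that mention a resolution or memory size before the model number would trip up this heuristic.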

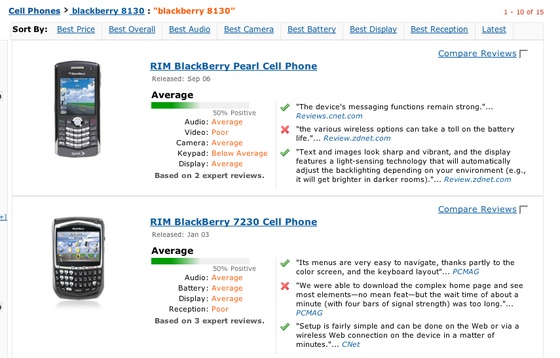

For example, it found the BlackBerry Pearl 8130 but only focused on reviews talking about its Sprint incarnation, which means the spider could use some work. Generally, however, you get a quick look at a product without much fanfare, and you can compare products using a clever Ajax interface.

The system is partly robotic and partly human:

New cameras typically take some time to come up with expert reviews, so currently we update every 15 days. Currently we have around 86,606 reviews (8386 products – mentioned in the footer) and we cover almost all expert review sources (around 250 in all). As we have recently launched our update cycle is still ramping up. We expect to have a lag of a week for any new product launch and around 10 days for any new review.

In the product details page you see a list of product highlights and a blurb about each one from multiple sources. Check marks mean “good” and Xs mean “bad” so you know at a glance what the reviewers didn’t like.

I’ll accept that this is the future of reviewing: robots culling commentary from the web. Is ReviewGist quite there yet? Not quite, but it’s getting closer.