If the AIs of the future are, as many tech companies seem to hope, going to look through our eyes in the form of AR glasses and other wearables, they’ll need to learn how to make sense of the human perspective. We’re used to it, of course, but there’s remarkably little first-person video footage of everyday tasks out there — which is why Facebook collected a few thousand hours for a new publicly available data set.

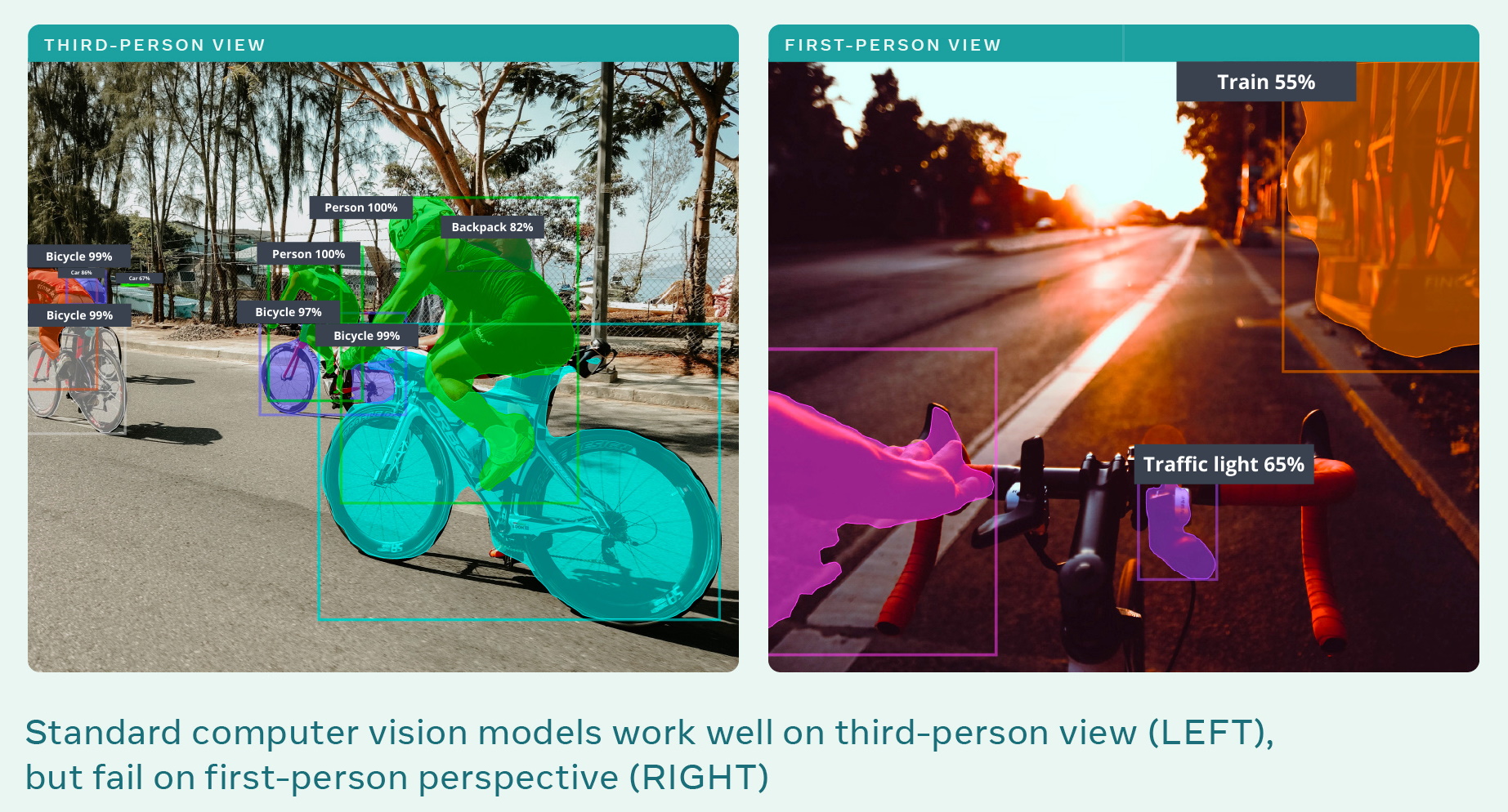

The challenge Facebook is attempting to get a grip on is simply that even the most impressive of object and scene recognition models today have been trained almost exclusively on third-person perspectives. So it can recognize a person cooking, but only if it sees that person standing in a kitchen, not if the view is from the person’s eyes. Or it will recognize a bike, but not from the perspective of the rider. It’s a perspective shift that we take for granted, because it’s a natural part of our experience, but that computers find quite difficult.

The solution to machine learning problems is generally either more or better data, and in this case it can’t hurt to have both. So Facebook contacted research partners around the world to collect first-person video of common activities like cooking, grocery shopping, typing shoelaces or just hanging out.

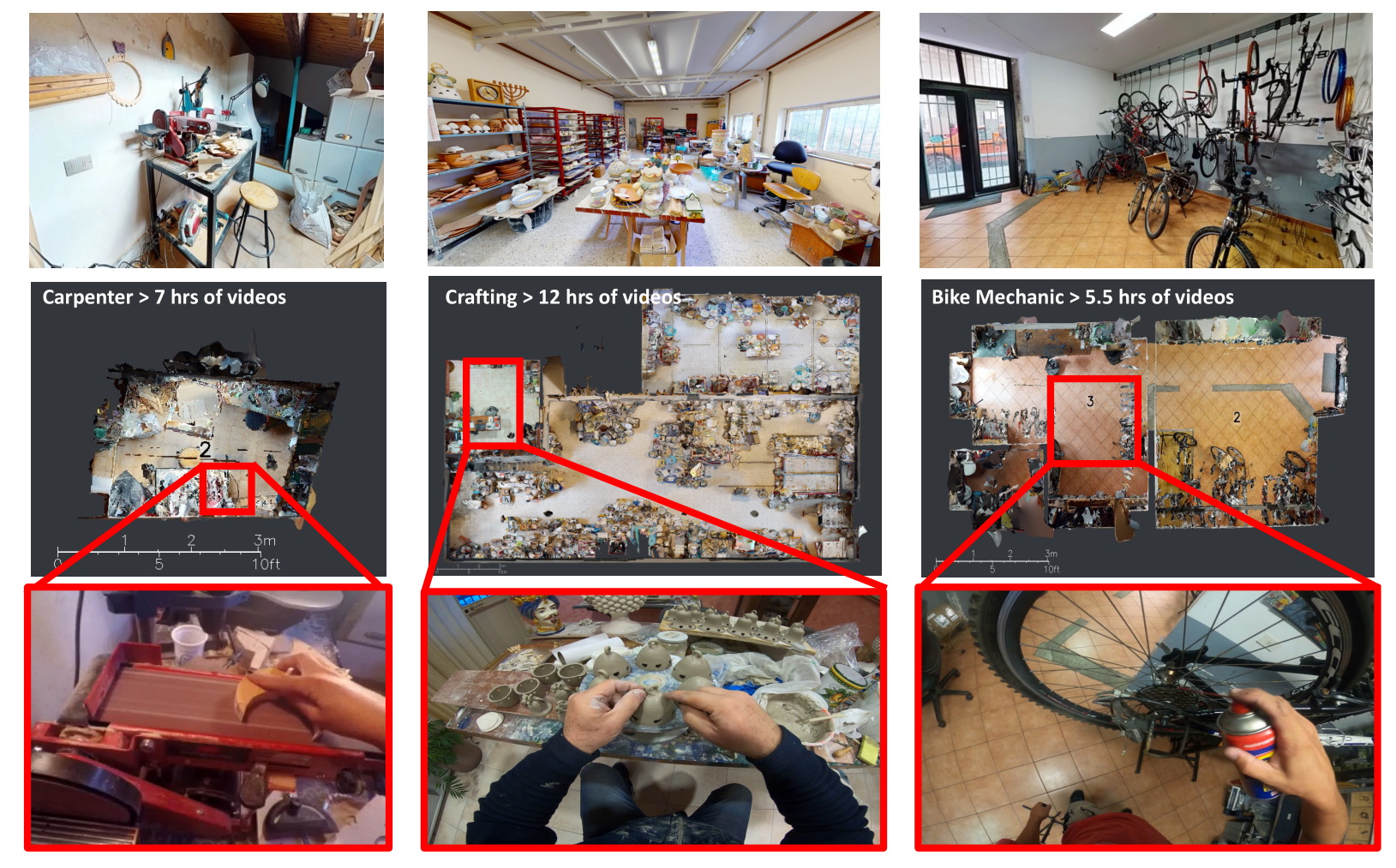

The 13 partner universities collected thousands of hours of video from more than 700 participants in nine countries, and it should be said at the outset that they were volunteers and controlled the level of their own involvement and identity. Those thousands of hours were whittled down to 3,000 by a research team that watched, edited and hand-annotated the video, while adding their own footage from staged environments they couldn’t capture in the wild. It’s all described in this research paper.

The footage was captured by a variety of methods, from glasses cameras to GoPros and other devices, and some researchers chose also to scan the environment in which the person was operating, while others tracked gaze direction and other metrics. It’s all going into a data set Facebook called Ego4D that will be made freely available to the research community at large.

Two images, one showing computer vision successfully identifying objects and another showing it failing in first person. Image Credits: Facebook

“For AI systems to interact with the world the way we do, the AI field needs to evolve to an entirely new paradigm of first-person perception. That means teaching AI to understand daily life activities through human eyes in the context of real-time motion, interaction, and multisensory observations,” said lead researcher Kristen Grauman in a Facebook blog post.

As difficult as it may be to believe, this research and the Ray-Ban Stories smart shades are totally unrelated except in that Facebook clearly thinks that first-person understanding is increasingly important to multiple disciplines. (The 3D scans could be used in the company’s Habitat AI training simulator, though.)

“Our research is strongly motivated by applications in augmented reality and robotics,” Grauman told TechCrunch. “First-person perception is critical to enabling the AI assistants of the future, especially as wearables like AR glasses become an integral part of how people live and move throughout everyday life. Think of how beneficial it would be if the assistants on your devices could remove the cognitive overload from your life, understanding your world through your eyes.”

The global nature of the collected video is a very deliberate move. It would be fundamentally shortsighted to only include imagery from a single country or culture. Kitchens in the U.S. look different from French ones, Rwandan ones and Japanese ones. Making the same dish with the same ingredients, or performing the same general task (cleaning, exercising) may look very different even between individuals, let alone entire cultures. So, as Facebook’s post puts it, “Compared with existing data sets, the Ego4D data set provides a greater diversity of scenes, people, and activities, which increases the applicability of models trained for people across backgrounds, ethnicities, occupations, and ages.”

Examples from Facebook of first-person video and the environment in which it was taken. Image Credits: Facebook

The database isn’t the only thing Facebook is releasing. With this sort of leap forward in data collecting, it’s common to also put out a set of benchmarks for testing how well a given model is using the information. For instance, with a set of images of dogs and cats, you might want a standard benchmark that tests the model’s efficacy in telling which is which.

In this case things are a bit more complicated. Simply identifying objects from a first-person point of view isn’t that hard — it’s just a different angle, really — and it wouldn’t be that new or useful, either. Do you really need a pair of AR glasses to tell you “that is a tomato”? No: like any other tool, an AR device should be telling you something you don’t know, and to do that it needs a deeper understanding of things like intentions, contexts and linked actions.

To that end the researchers came up with five tasks that can be, theoretically anyway, accomplished by analyzing this first-person imagery:

- Episodic memory: tracking objects and concepts in time and space so that arbitrary questions like “where are my keys?” can be answered.

- Forecasting: understanding sequences of events so that questions like “what’s next in the recipe?” can be answered, or things can be preemptively noted, like “you left your car keys in the house.”

- Hand-object interaction: identifying how people grasp and manipulate objects, and what happens when they do, which can feed into episodic memory or perhaps inform the actions of a robot that must imitate those actions.

- Audio-visual diarization: associating sound with events and objects so that speech or music can be intelligently tracked for situations like asking what the song was playing at the café, or what the boss said at the end of the meeting. (“Diarization” is their “word.”)

- Social interaction: understanding who is talking to whom and what is being said, both for purposes of informing the other processes and for in-moment use like captioning in a noisy room with multiple people.

These aren’t the only applications or benchmarks possible, of course, just a set of initial ideas for testing whether a given AI model actually gets what’s happening in a first-person video. Facebook’s researchers performed a base-level run on each task, described in their paper, that serves as a starting point. There’s also a sort of pie-in-the-sky video example of each of these tasks if they were successful in this video summarizing the research.

While the 3,000 hours — meticulously hand-annotated over 250,000 researcher hours, Grauman was careful to point out — are an order of magnitude more than what’s out there now, there’s still plenty of room to grow, she noted. They’re planning on growing the data set and are actively adding partners as well.

If you’re interested in using the data, keep your eye on the Facebook AI Research blog and maybe get in touch with one of the many, many people listed on the paper. It’ll be released in the next few months once the consortium figures out exactly how to do that.