WellSaid Labs, whose tools create synthetic speech that could be mistaken for the real thing, has raised a $10M Series A to grow the business. The company’s home-baked text-to-speech engine works faster than real time and produces natural-sounding clips of pretty much any length, from quick snippets to hours-long readings.

WellSaid came out of the Allen Institute for AI incubator in 2019 with the goal of making synthetic voices that didn’t sound so robotic, aimed at common business purposes like training and marketing content.

It achieved that first by basing its solution on Tacotron, a speech engine developed by Google and academic researchers. But soon it had built its own engine, one that was more efficient, produced more convincing voices and could generate clips of arbitrary length. Speech engines often trip up after a couple of sentences, descending into babble or losing tone, but WellSaid’s read the entirety of Mary Shelley’s “Frankenstein” without a hiccup.

The voices were good enough that listeners rated them as human or as good as human, which is not something you could really say about the usual virtual assistant suspects when they speak more than a handful of words. Not only that, but the speech was generated considerably faster than real time, where other high-quality options often operated at a tenth of real time or slower. That means three minutes of speech would take WellSaid one minute to generate, and Tacotron half an hour or more.
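To make that arithmetic concrete, here is a minimal sketch of the speed comparison. The function name and the constant-speed assumption are illustrative only; the 3x and 0.1x figures are simply the article's example restated, not measured benchmarks of either engine.

```python
def generation_minutes(audio_minutes: float, speed_vs_real_time: float) -> float:
    """Minutes of compute needed to synthesize `audio_minutes` of speech.

    `speed_vs_real_time` is minutes of audio produced per minute of compute,
    assumed constant for the whole clip (a simplification).
    """
    if speed_vs_real_time <= 0:
        raise ValueError("speed_vs_real_time must be positive")
    return audio_minutes / speed_vs_real_time


clip_minutes = 3.0

# Assumed speeds, taken from the example figures in the text:
# 3x real time for the faster engine, a tenth of real time for the slower one.
faster = generation_minutes(clip_minutes, 3.0)
slower = generation_minutes(clip_minutes, 0.1)

print(f"faster engine: {faster:.0f} min, slower engine: {slower:.0f} min")
```

Under those assumptions the same three-minute clip comes out at roughly one minute versus thirty, a gap that grows in proportion to clip length.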

Lastly, the system allows for new “Voice Avatars” to be created based on existing voice talent, like a trusted company spokesperson or voiceover artist. Originally about 20 hours of audio was needed to build a model of their quirks and voice style, but now it can do so with as little as two hours, CEO Matt Hocking said.

The company is strictly business-focused right now, which is to say there’s no user-facing app to digitize your voice into an avatar or anything. There are attendant risks and no realistic business model for it, so that’s off the table for now.

Such a realistic voice might still be of enormous help to people with disabilities, however. Hocking acknowledges as much, though he admits the company is not quite ready to tackle that yet.

“We are committed to expanding access to this technology so that nonverbal communicators, nonprofits and others can benefit from it,” he said.

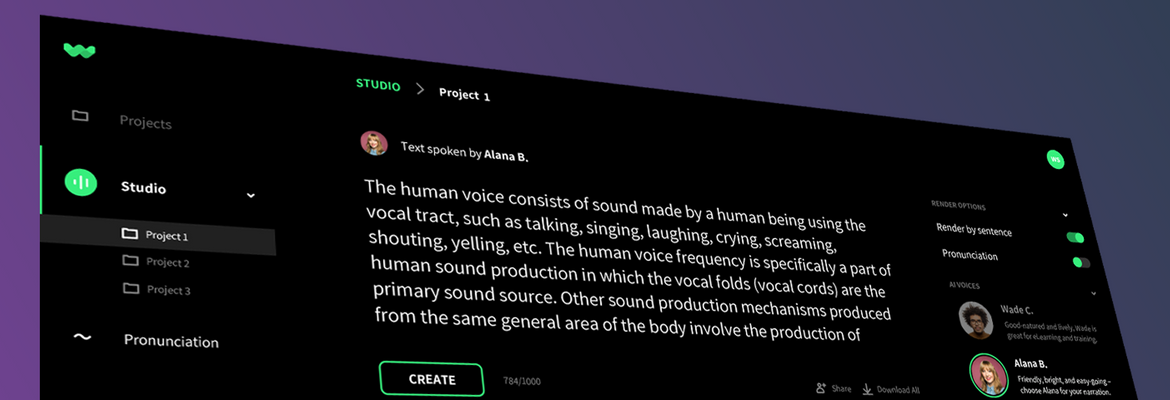

In the meantime the company has expanded from its first market, corporate training videos, to marketing, longer copy, text-heavy interactive products and app experiences. One hopes that the talent these avatars are based on is being properly compensated for helping create a digital likeness of their voice.

The oversubscribed $10M round was led by FUSE, with participation from repeat investor Voyager, Qualcomm Ventures LLC and GoodFriends, all of whom were likely impressed by the product and business growth. Synthetic voices have served a handful of popular use cases, but content has not been a big one, so there’s plenty of room to grow. The company will invest the money in deepening its product offering and growing the team along with it.