In a new report, Amnesty International says it has found evidence of EU companies selling digital surveillance technologies to China — despite the stark human rights risks of technologies like facial recognition ending up in the hands of an authoritarian regime that’s been rounding up ethnic Uyghurs and holding them in “re-education” camps.

The human rights charity has called for the bloc to update its export framework, given that the export of most digital surveillance technologies is currently unregulated, and is urging EU lawmakers to bake in, as a matter of urgency, a requirement to consider human rights risks.

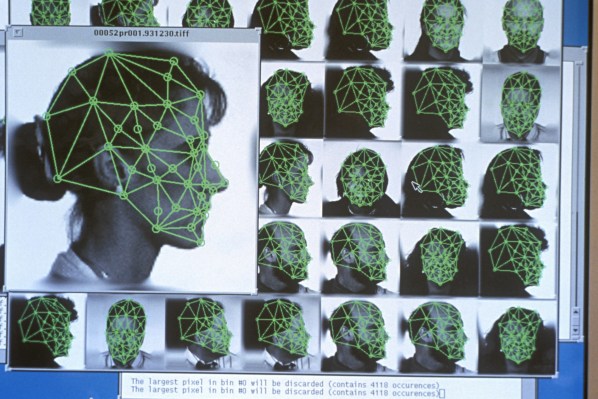

“The current EU exports regulation (i.e. Dual Use Regulation) fails to address the rapidly changing surveillance dynamics and fails to mitigate emerging risks that are posed by new forms of digital surveillance technologies [such as facial recognition tech],” it writes. “These technologies can be exported freely to every buyer around the globe, including Chinese public security bureaus. The export regulation framework also does not obligate the exporting companies to conduct human rights due diligence, which is unacceptable considering the human rights risk associated with digital surveillance technologies.”

“The EU exports regulation framework needs fixing, and it needs it fast,” it adds, saying there’s a window of opportunity as the European legislature is in the process of amending the exports regulation framework.

Amnesty’s report contains a number of recommendations for updating the framework so it’s able to respond to fast-paced developments in surveillance tech — including saying the scope of the Recast Dual Use Regulation should be “technology-neutral,” and suggesting obligations are placed on exporting companies to carry out human rights due diligence, regardless of size, location or structure.

We’ve reached out to the European Commission for a response to Amnesty’s call for updates to the EU export framework.

The report identifies three EU-based companies — biometrics authentication solutions provider Morpho (now Idemia) from France; networked camera maker Axis Communications from Sweden; and human (and animal) behavioral research software provider Noldus Information Technology from the Netherlands — as having exported digital surveillance tools to China.

“These technologies included facial and emotion recognition software, and are now used by Chinese public security bureaus, criminal law enforcement agencies, and/or government-related research institutes, including in the region of Xinjiang,” it writes, referring to a region of northwest China that’s home to many ethnic minorities, including the persecuted Uyghurs.

“None of the companies fulfilled their human rights due diligence responsibilities for these transactions, as prescribed by international human rights law,” it adds. “The exports pose significant risks to human rights.”

Amnesty suggests the risks posed by some of the technologies that have already been exported from the EU include interference with the right to privacy — such as via eliminating the possibility for individuals to remain anonymous in public spaces — as well as interference with non-discrimination, freedom of opinion and expression, and potential impacts on the rights to assembly and association too.

We contacted the three EU companies named in the report.

At the time of writing, only Axis Communications had replied, pointing us to a public statement in which it writes that its network video solutions are “used all over the world to help increase security and safety,” adding that it “always” respects human rights and opposes discrimination and repression “in any form.”

“In relation to the ethics of how our solutions are used by our customers, customers are systematically screened to highlight any legal restrictions or inclusion on lists of national and international sanctions,” it also claims, although the statement makes no reference to why this process did not prevent it from selling its technology to China.

Update: Noldus Information Technology has also now sent a statement — in which it denies manufacturing digital surveillance tools and argues that its research tool software does not pose a human rights risk.

It also accuses Amnesty of failing to do due diligence during its investigation.

“Amnesty International… has not presented a single piece of evidence that human rights violations have occurred, nor has it presented a single example of how our software could be a risk to human rights,” Noldus writes. “Regarding the sales to Shihezi University and Xinjiang Normal University, Amnesty admits that ‘our research did not investigate direct links between the university projects involving Noldus products and the expansion of state surveillance and control in Xinjiang.’ These universities, and many others in China, purchased our tools for developmental and educational psychology research, common application areas in academic research around the world, during a nationwide program to improve research infrastructure in Chinese universities.”

“We agree with Amnesty that the misuse of technology that could potentially violate human rights should be prevented at all time. We also understand and support Amnesty’s plea for stricter Dual Use regulations to control the export of mass surveillance technology. However, Noldus Information Technology does not make surveillance systems and we are not active in the public security market, so we don’t understand why Amnesty included our company in their report,” it adds. “The discussion about risks of digital technology for human rights should be based on evidence, not suspicions or assumptions. Amnesty’s focus should have been on technologies that form a risk to human rights, and Noldus’ tools don’t do that.”

On the domestic front, European lawmakers are in the process of fashioning regional rules for the use of “high risk” applications of AI across the bloc — with a draft proposal due next year, per a recent speech by the Commission president.

Thus far the EU’s executive has steered away from an earlier suggestion that it could seek a temporary ban on the use of facial recognition tech in public places. It also appears to favor lighter-touch regulation, which defines only a subset of “high risk” applications, rather than imposing any blanket bans. Additionally, regional lawmakers have sought a “broad” debate on circumstances where the use of remote biometric identification could be justified, suggesting nothing is yet off the table.