Swyg, a Dublin-based startup that believes it can reduce bias in recruitment by combining a peer-interview process with its own AI, has picked up $1.2 million in pre-seed funding.

Leading the round is Frontline Ventures, alongside angel investors including Charles Bibby (co-founder of Pointy) and Martin Henk (co-founder of Pipedrive). The funding will be used to grow Swyg’s technical and product team, and to further develop its platform.

“Candidate selection is a big problem in hiring,” Swyg founder Vincent Lonij tells me. “It is the most labor-intensive and most error-prone part of the process… Bad decisions are made when a single reviewer/interviewer tries to make a decision based on limited information such as a resume or static profile. This scenario is exactly where human bias enters into the process.”

In addition, on the applicant side, Lonij notes that the overwhelming majority of job candidates want to receive feedback from their time-consuming job interviews, “yet only 41% receive it, hindering their ability to learn and grow.”

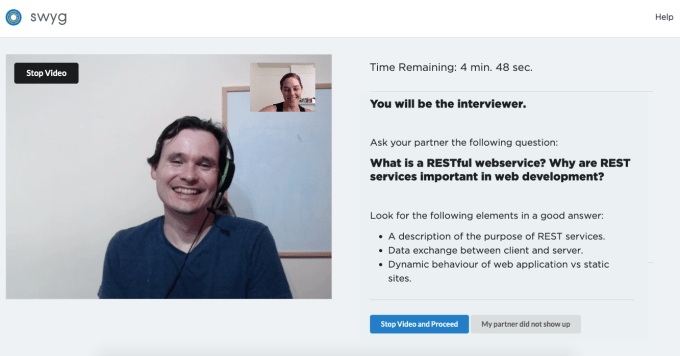

To solve this, the Swyg platform has candidates interview each other in a series of one-to-one video chats built around pre-defined, structured questions.

“The peer-to-peer process draws on the expertise of a diverse group of individuals instead of relying on a single recruiter or hiring manager,” explains the Swyg founder. “Simply getting input from more diverse reviewers already reduces bias.” In addition, Swyg claims its AI technology can calibrate peer-interviewers in real time “to detect and correct bias and human error.”

Image Credits: Swyg

One way to think about it is that, to understand how an interviewee performed in the interview, Swyg first needs to understand more about the interviewer. This could include taking into account how they score candidates in aggregate (i.e. whether their scores skew more positive or more negative) and other variables, such as whether they tend to be a tougher judge after giving a high score (and vice versa) or remain fairly consistent.
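To make the idea of aggregate calibration concrete, here is a minimal sketch, not Swyg's actual method, which the company has not detailed. It assumes each rating is a hypothetical `(interviewer, candidate, score)` tuple and rescales every score relative to that interviewer's own average and spread, so a habitually lenient reviewer and a habitually strict one end up on the same footing. The function name `calibrate_scores` and the sample data are illustrative assumptions.

```python
from collections import defaultdict
from statistics import mean, pstdev


def calibrate_scores(ratings):
    """Return candidate -> mean z-score, normalizing per interviewer.

    ratings: list of (interviewer, candidate, score) tuples (assumed shape).
    """
    # Collect every score each interviewer has given.
    by_interviewer = defaultdict(list)
    for interviewer, _, score in ratings:
        by_interviewer[interviewer].append(score)

    # Each interviewer's average and spread; fall back to 1.0 when an
    # interviewer gave identical scores, to avoid dividing by zero.
    stats = {}
    for interviewer, scores in by_interviewer.items():
        spread = pstdev(scores)
        stats[interviewer] = (mean(scores), spread if spread > 0 else 1.0)

    # Express each score relative to its interviewer's own baseline.
    adjusted = defaultdict(list)
    for interviewer, candidate, score in ratings:
        mu, sigma = stats[interviewer]
        adjusted[candidate].append((score - mu) / sigma)

    return {candidate: mean(z) for candidate, z in adjusted.items()}


# Hypothetical data: one generous reviewer, one tough reviewer.
ratings = [
    ("lenient", "ana", 9), ("lenient", "ben", 8), ("lenient", "cy", 10),
    ("strict", "ana", 4), ("strict", "ben", 3), ("strict", "cy", 5),
]
calibrated = calibrate_scores(ratings)
```

In this toy data the two reviewers disagree on raw scores by five points but rank the candidates identically, and the calibrated values reflect only that shared ranking. Sequential effects, such as judging more harshly after a high score, would need a richer model than this sketch.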

There are also systems in place to detect when something unexpected happens, including participants deliberately giving unfair ratings. This triggers a review process where Swyg can exclude certain reviews if it is warranted.
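One simple way such a check could work, offered purely as an assumption since Swyg has not described its detection logic, is to compare each rating with the median of the other reviewers' ratings for the same candidate and queue large disagreements for human review. The name `flag_for_review`, the `max_gap` threshold and the sample data are all hypothetical.

```python
from collections import defaultdict
from statistics import median


def flag_for_review(ratings, max_gap=3.0):
    """Return ratings that sit far from the peer consensus.

    ratings: list of (interviewer, candidate, score) tuples (assumed shape).
    max_gap: hypothetical threshold on distance from the peers' median.
    """
    by_candidate = defaultdict(list)
    for interviewer, candidate, score in ratings:
        by_candidate[candidate].append((interviewer, score))

    flagged = []
    for candidate, entries in by_candidate.items():
        for i, (interviewer, score) in enumerate(entries):
            # Compare against everyone else's score for this candidate.
            others = [s for j, (_, s) in enumerate(entries) if j != i]
            if len(others) < 2:
                continue  # too few peers to call anything an outlier
            if abs(score - median(others)) > max_gap:
                flagged.append((interviewer, candidate, score))
    return flagged


# Hypothetical data: reviewer "d" rates candidate "x" far below the rest.
ratings = [
    ("a", "x", 8), ("b", "x", 7), ("c", "x", 8), ("d", "x", 1),
    ("a", "y", 6), ("b", "y", 5), ("c", "y", 6), ("d", "y", 5),
]
flagged = flag_for_review(ratings)
```

Flagging only marks a rating for inspection; whether to exclude it remains a separate decision, mirroring the review step described above.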

“In a nutshell, we use machine learning to understand the interviewers that in turn understand the interviewees, as opposed to trying to judge candidates directly with AI/ML,” explains Lonij. “We’re able to use this technology to detect and correct for known cognitive biases of the interviewers, which leads to more accurate assessments.”

Meanwhile, Lonij says that everyone else is trying to solve the candidate selection problem using fully automated solutions or fully manual solutions. “Neither of these will work,” he argues.

That’s because AI in general is not developed enough to judge humans in a fully automated way, which makes CV keyword matching or automated analysis of recorded videos extremely unreliable. Human interviewers alone, in turn, are error-prone and subject to a range of biases.

“We are different because of our hybrid approach,” adds Lonij. “By making candidates part of the process we can take advantage of the best parts of human integrity and adaptability while also getting the efficiency of AI.”