Robots primarily rely on two basic senses: vision and touch. But even the latter still has a long way to go to get up to speed with the former. Researchers at Carnegie Mellon University are looking to hearing as a potential additional sense to help machines increase their perception of the world around them.

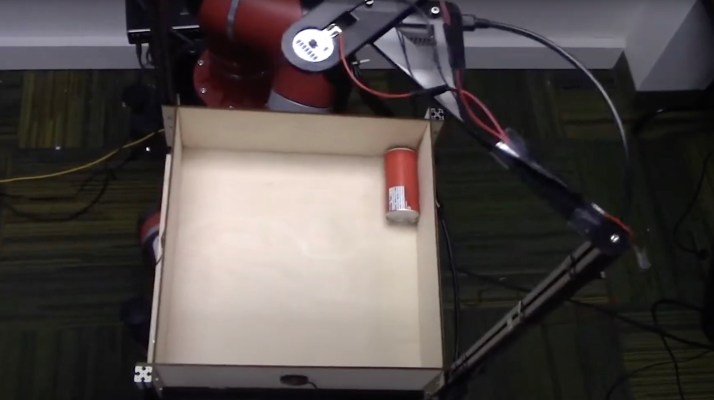

A new experiment from CMU features Rethink Robotics’ Sawyer moving objects inside a metal tray to get a sense of the sounds they make as they roll around, slide and crash into the sides. There are 60 objects in all — including tools, wooden blocks, tennis balls and an apple — with 15,000 “interactions” recorded and cataloged.

The robot, named “Tilt-Bot” by the team, was capable of identifying objects with a 76% success rate, even determining the relatively small material differences between a metal screwdriver and a wrench. Using the sound data, the robot was often able to correctly determine the material makeup of the objects.

“I think what was really exciting was that when it failed, it would fail on things you expect it to fail on,” CMU assistant professor Oliver Kroemer said in a release tied to the research. “But if it was a different object, such as a block versus a cup, it could figure that out.”

This is still early-stage stuff, with the initial results only just having been published, but the researchers foresee the potential to harness sound detection as yet another tool in a robot’s sensing arsenal. Among the possibilities is the inclusion of a “cane” the machines could use to tap an object in order to better determine its material properties.