Lidar is a critical part of many autonomous cars and robotic systems, but the technology is also evolving quickly. A new company called Sense Photonics just emerged from stealth mode today with a $26M A round, touting a whole new approach that allows for an ultra-wide field of view and (literally) flexible installation.

Still in the prototype phase, but clearly far enough along to attract eight figures of investment, Sense Photonics’ lidar doesn’t look dramatically different from others at first, but the changes are both under the hood and, in a way, on both sides of it.

Early popular lidar systems like those from Velodyne use a spinning module that emits and detects infrared laser pulses, finding the range of the surroundings by measuring the light’s time of flight. Subsequent ones have replaced the spinning unit with something less mechanical, like a DLP-type mirror or even metamaterials-based beam steering.
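For readers who want the arithmetic behind "time of flight," here is a minimal sketch. It assumes the pulse travels at the vacuum speed of light and that the measured time covers the round trip out and back; the function names are illustrative and not taken from any vendor's software.

```python
# Time-of-flight ranging: the pulse travels to the target and back,
# so the one-way distance is half of (speed of light x elapsed time).

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value; air is very slightly slower


def range_from_round_trip(elapsed_s: float) -> float:
    """Return the one-way distance in meters for a round-trip time in seconds."""
    if elapsed_s < 0:
        raise ValueError("elapsed time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * elapsed_s / 2.0


def round_trip_from_range(distance_m: float) -> float:
    """Return the round-trip time in seconds for a target distance in meters."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A target 200 meters away returns its echo in a little over a microsecond.
    t = round_trip_from_range(200.0)
    print(f"round trip for 200 m: {t * 1e6:.3f} microseconds")
    print(f"recovered range: {range_from_round_trip(t):.1f} m")
```

The timescales are the point: resolving centimeters means resolving fractions of a nanosecond, which is part of why the detector side of a lidar is as demanding as the emitter.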

All these systems are “scanning” systems in that they sweep a beam, column, or spot of light across the scene in some structured fashion — faster than we can perceive, but still piece by piece. Few companies, however, have managed to implement what’s called “flash” lidar, which illuminates the whole scene with one giant, well, flash.

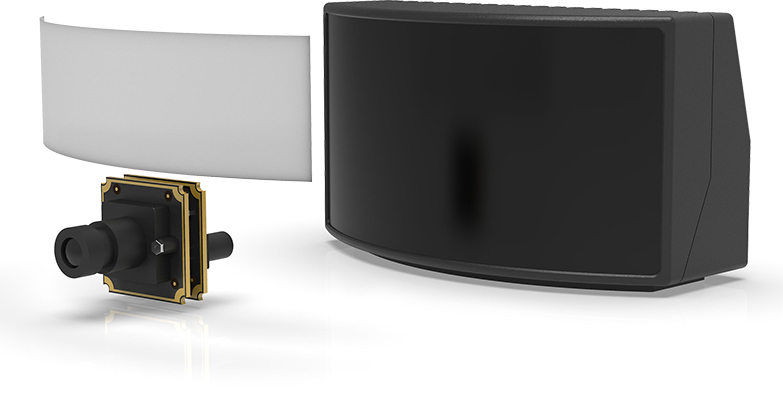

That’s what Sense has created, and it claims to have avoided the usual shortcomings of such systems — namely limited resolution and range. Not only that, but by separating the laser emitting part and the sensor that measures the pulses, Sense’s lidar could be simpler to install without redesigning the whole car around it.

I talked with CEO and co-founder Scott Burroughs, a veteran engineer of laser systems, about what makes Sense’s lidar a different animal from the competition.

“It starts with the laser emitter,” he said. “We have some secret sauce that lets us build a massive array of lasers — literally thousands and thousands, spread apart for better thermal performance and eye safety.”

These tiny laser elements are stuck on a flexible backing, meaning the array can be curved — providing a vastly improved field of view. Lidar units (except for the 360-degree ones) tend to be around 120 degrees horizontally, since that’s what you can reliably get from a sensor and emitter on a flat plane, and perhaps 50 or 60 degrees vertically.

“We can go as high as 90 degrees for vert, which I think is unprecedented, and as high as 180 degrees for horizontal,” said Burroughs proudly. “And that’s something automakers we’ve talked to have been very excited about.”

Here it is worth mentioning that lidar systems have also begun to bifurcate into long-range, forward-facing lidar (like those from Luminar and Lumotive) for detecting things like obstacles or people 200 meters down the road, and more short-range, wider-field lidar for more immediate situational awareness — a dog behind the vehicle as it backs up, or a car pulling out of a parking spot just a few meters away. Sense’s devices are very much geared toward the second use case for the present, though the company is working on a long-range version of its hardware as well.

Particularly because of the second interesting innovation they’ve included: the sensor, normally part and parcel with the lidar unit, can exist totally separately from the emitter, and is little more than a specialized camera. That means the emitter can be integrated into a curved surface like the headlight assembly, while the tiny detectors can be stuck in places where there are already traditional cameras: side mirrors, bumpers, and so on.

The camera-like architecture is more than convenient for placement; it also fundamentally affects the way the system reconstructs the image of its surroundings. Because the sensor they use is so close to an ordinary RGB camera’s, images from the former can be matched to the latter very easily.

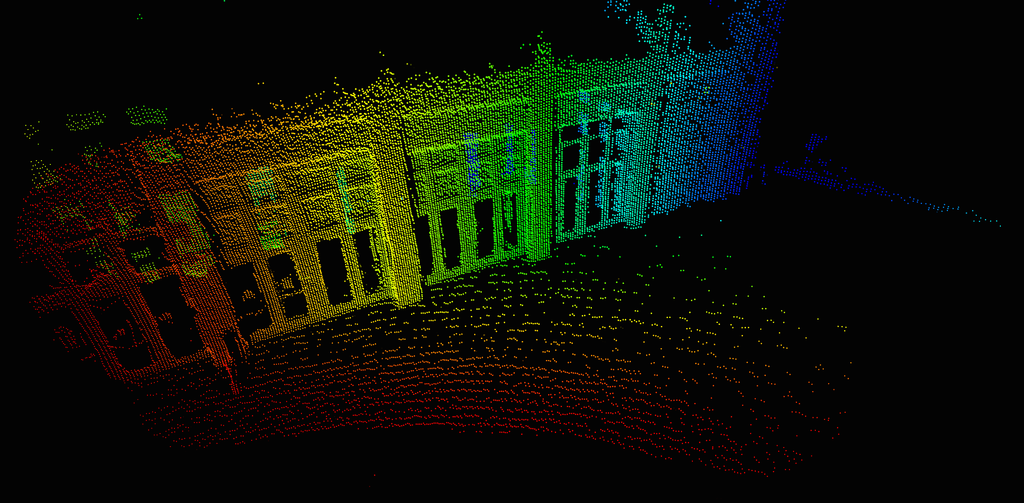

Most lidars output a 3D point cloud, the result of the beam finding millions of points with different ranges. This is a very different form of “image” from what a traditional camera produces, and it can take some work to convert or compare the depths and shapes of a point cloud to a 2D RGB image. Sense’s unit not only outputs a 2D depth map natively, but that data can be synced with a twin camera so the visible light image matches the depth map pixel for pixel. That saves on computing time and therefore on delay, which is always a good thing for autonomous platforms.
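To illustrate why a pixel-aligned depth map is convenient, here is a rough sketch of fusing one with an RGB image into colored 3D points. It assumes a simple pinhole camera model with hypothetical intrinsics (`fx`, `fy`, `cx`, `cy`) and depth measured along the optical axis; none of this is drawn from Sense's actual software, which the company has not described.

```python
from typing import List, Sequence, Tuple

# One fused sample: position in meters (x, y, z) plus its color (r, g, b).
ColoredPoint = Tuple[float, float, float, int, int, int]


def depth_to_colored_points(
    depth: Sequence[Sequence[float]],
    rgb: Sequence[Sequence[Tuple[int, int, int]]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[ColoredPoint]:
    """Back-project a depth map whose pixels line up one-to-one with an RGB image.

    fx, fy are focal lengths in pixels and cx, cy the principal point;
    all four are assumed values for illustration.
    """
    if len(depth) != len(rgb) or any(len(d) != len(c) for d, c in zip(depth, rgb)):
        raise ValueError("depth map and RGB image must have identical dimensions")

    points: List[ColoredPoint] = []
    for v, (depth_row, color_row) in enumerate(zip(depth, rgb)):
        for u, (z, (r, g, b)) in enumerate(zip(depth_row, color_row)):
            if z <= 0:
                continue  # treat non-positive depth as "no return" and skip it
            # Pinhole back-projection: pixel offset from center, scaled by depth.
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z, r, g, b))
    return points


if __name__ == "__main__":
    depth = [[2.0, 0.0], [4.0, 2.0]]
    rgb = [[(255, 0, 0), (0, 255, 0)], [(0, 0, 255), (255, 255, 255)]]
    for p in depth_to_colored_points(depth, rgb, fx=2.0, fy=2.0, cx=0.5, cy=0.5):
        print(p)
```

Because the two images already share a pixel grid, the color lookup is a plain index with no search or reprojection step. With a conventional point cloud, each point would first have to be projected into the camera's frame to find its matching pixel.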

The benefits of Sense’s system are manifest, but right now the company is still working on getting the first units to production. To that end it has raised the $26 million A round, “co-led by Acadia Woods and Congruent Ventures, with participation from a number of other investors, including Prelude Ventures, Samsung Ventures and Shell Ventures,” as the press release puts it.

Cash on hand is always good. But Sense has also partnered with Infineon and others, including an unnamed tier-1 automotive company, which is no doubt helping shape the first commercial Sense Photonics product. The details will have to wait until later this year when that offering solidifies, and production should start a few months after that. There is no hard timeline yet, but expect all of this before the end of the year.

“We are very appreciative of this strong vote of investor confidence in our team and our technology,” Burroughs said in the press release. “The demand we’ve encountered – even while operating in stealth mode – has been extraordinary.”