Industrial robots are typically all about repeating a well-defined task over and over again. Usually, that means performing those tasks a safe distance away from the fragile humans who programmed them. More and more, however, researchers are thinking about how robots can work in close proximity to humans and even learn from them. In part, that’s what Nvidia’s new robotics lab in Seattle focuses on. Today, at the International Conference on Robotics and Automation (ICRA) in Brisbane, Australia, the company’s research team presented some of its most recent work on teaching robots by observing humans.

Nvidia’s director of robotics research Dieter Fox.

As Dieter Fox, the senior director of robotics research at Nvidia (and a professor at the University of Washington), told me, the team wants to enable this next generation of robots that can safely work in close proximity to humans. But to do that, those robots need to be able to detect people, track their activities and learn how they can help people. That may be in a small-scale industrial setting or in somebody’s home.

While it’s possible to train an algorithm to successfully play a video game by rote repetition and teaching it to learn from its mistakes, Fox argues that the decision space for training robots that way is far too large to do this efficiently. Instead, a team of Nvidia researchers led by Stan Birchfield and Jonathan Tremblay developed a system that allows them to teach a robot to perform new tasks by simply observing a human.

The tasks in this example are pretty straightforward and involve nothing more than stacking a few colored cubes. But it’s also an important step in this overall journey to enable us to quickly teach a robot new tasks.

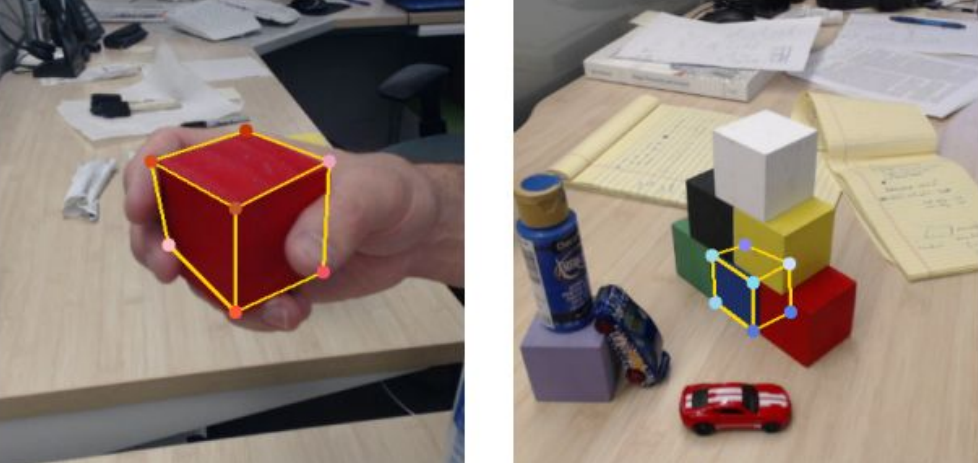

The researchers first trained a sequence of neural networks to detect objects, infer the relationships between them and then generate a program that repeats the steps the human performed. The researchers say this new system allowed them to train their robot to perform this stacking task with a single demonstration in the real world.
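To make the shape of that pipeline concrete, here is a minimal Python sketch of its last two stages: inferring relationships between detected cubes and turning them into an ordered program. It is an illustration under loud assumptions, not Nvidia's system. The `Detection` class, the `infer_relations` and `generate_program` functions, the 5 cm cube size and the simple geometric rule are all hypothetical stand-ins for the trained neural networks the researchers describe.

```python
from dataclasses import dataclass

CUBE_SIZE = 0.05  # assumed cube edge length in metres (hypothetical value)


@dataclass(frozen=True)
class Detection:
    """Hypothetical output of an object detector: a named cube and its centre."""
    name: str
    x: float
    y: float
    z: float  # height of the cube centre above the table


def infer_relations(detections, tol=0.01):
    """Return (upper, lower) pairs where `upper` rests directly on `lower`.

    A hand-written geometric rule stands in for the learned relationship network.
    """
    relations = []
    for upper in detections:
        for lower in detections:
            if upper is lower:
                continue
            aligned = abs(upper.x - lower.x) <= tol and abs(upper.y - lower.y) <= tol
            stacked = abs((upper.z - lower.z) - CUBE_SIZE) <= tol
            if aligned and stacked:
                relations.append((upper.name, lower.name))
    return relations


def generate_program(relations):
    """Order the relations so each cube is placed only after the cube beneath it."""
    uppers = {upper for upper, _ in relations}
    placed = {lower for _, lower in relations} - uppers  # cubes already on the table
    pending = list(relations)
    steps = []
    while pending:
        ready = [rel for rel in pending if rel[1] in placed]
        if not ready:
            raise ValueError("relations do not describe a buildable stack")
        for upper, lower in ready:
            steps.append(f"place {upper} on {lower}")
            placed.add(upper)
            pending.remove((upper, lower))
    return steps


# Final scene of a demonstration, listed in arbitrary order.
demo = [
    Detection("blue", 0.0, 0.0, 0.125),
    Detection("red", 0.0, 0.0, 0.025),
    Detection("green", 0.0, 0.0, 0.075),
]
program = generate_program(infer_relations(demo))
for step in program:
    print(step)
```

Because the program is a list of plain-text steps, a person can read it and check it against the demonstration before the robot executes anything.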

One nifty aspect of this system is that it generates a human-readable description of the steps it’s performing. That way, it’s easier for the researchers to figure out what happened when things go wrong.

Birchfield tells me that the team aimed to make training the robot easy for a non-expert — and few things are easier to do than to demonstrate a basic task like stacking blocks. In the example the team presented in Brisbane, a camera watches the scene as the human simply walks up, picks up the blocks and stacks them. Then the robot repeats the task. Sounds easy enough, but it’s a massively difficult task for a robot.

To train the core models, the team mostly used synthetic data from a simulated environment. As both Birchfield and Fox stressed, it’s these simulations that allow for quickly training robots. Training in the real world would take far longer, after all, and can also be far more dangerous. And for most of these tasks, there is no labeled training data available to begin with.

“We think using simulation is a powerful paradigm going forward to train robots to do things that weren’t possible before,” Birchfield noted. Fox echoed this and noted that this need for simulations is one of the reasons Nvidia thinks its hardware and software are ideally suited for this kind of research. There is a very strong visual aspect to this training process, after all, and Nvidia’s background in graphics hardware surely helps.

Fox admitted there’s still a lot of research left to be done here (most of the simulations aren’t photorealistic yet, after all), but said that the core foundations for this are now in place.

Going forward, the team plans to expand the range of tasks that the robots can learn and the vocabulary necessary to describe those tasks.