It’s easy enough to draw a basic floorplan of your home, but turning that floorplan into a 3D rendering typically falls into the realm of professional designers. With Home.me, TechCrunch Berlin Hackathon hackers Charles Wong and Aravind Kandiah, both students at the Singapore University of Technology and Design, built a tool that can easily turn your 2D drawings into 3D renderings.

“We always had this fascination with bringing to life what you can draw,” Kandiah said. “But there hasn’t been an easy way to create this.”

That by itself is pretty cool, but by asking you about your location and a few more details about the building you are trying to render, Home.me is also able to estimate the square footage and price of what you’re drawing. Once the team’s tools have figured out the floorplan and rendered it, the next step is to visualize it in augmented reality, using the floorplan as its anchor.
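To make the square-footage and price estimate concrete, here is a minimal sketch of how such a calculation could work. It is not the team's code: the function names, the `feet_per_pixel` scale and the `price_per_sqft` figure are all hypothetical, and it assumes the room outline is already available as an ordered list of corner points.

```python
def polygon_area(points):
    """Shoelace formula: area enclosed by vertices listed in order."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the outline
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def estimate_area_and_price(corners_px, feet_per_pixel, price_per_sqft):
    """Convert a pixel-space outline into square feet and a rough price.

    feet_per_pixel and price_per_sqft are assumed inputs (for example,
    derived from the user's answers about the building and its location).
    """
    area_sqft = polygon_area(corners_px) * feet_per_pixel ** 2
    return area_sqft, area_sqft * price_per_sqft


# Hypothetical example: a 120 x 100 pixel room drawn at 0.25 feet per pixel.
room = [(40, 50), (160, 50), (160, 150), (40, 150)]
area, price = estimate_area_and_price(room, feet_per_pixel=0.25, price_per_sqft=400)
print(area, price)
```

In practice, the price per square foot would have to come from some location-based data source, which the article does not describe.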

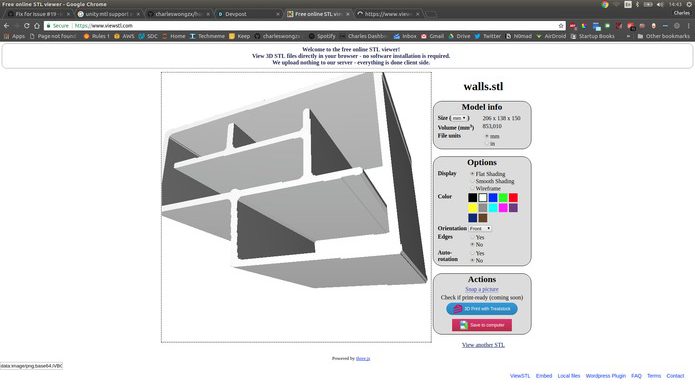

The Home.me team uses computer vision to capture a hand-drawn or printed floorplan and converts it into a 3D rendering. They considered using deep learning, but decided that this would be overkill and that there wasn’t enough time to train a model during the hackathon anyway.

As Wong and Kandiah told me, the hardest part here was figuring out the corners in the floorplan, and it took a bit of a hack to make this work. The great thing about the team’s solution to this problem, though, is that it can now also handle curved walls and not just standard straight lines.

As with virtually all good machine learning-based tools, Home.me is written in Python, and Wong used the popular OpenCV library to power its computer vision features. The tool exports its 3D models to Unity, something the team had to figure out on the fly during the long hackathon night.

Wong and Kandiah tell us that they’ll likely continue to work on this project, though their focus for the time being is their studies, where they are working on building an autonomous car (which isn’t a bad project to be working on either, to be fair).