Did you notice that Siri sounds a little more sprightly today? Apple’s ubiquitous virtual assistant has had a little virtual work done on her virtual vocal cords, and her newly dulcet-ized tones went live today as part of iOS 11.

It turns out a lot of work went into this little upgrade. The old methods of creating speech from text produced the stilted voices we’re all familiar with from the last decade or two. Basically you took a big library of voice sounds (“ah,” “ess,” etc.) and stuck them together to make words.
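To make the "stick them together" idea concrete, here is a minimal sketch of that older concatenative approach. Everything in it is an assumption for illustration: the `UNIT_LIBRARY` table, the sound labels and the sample values are made up, and a real system would store recorded waveforms rather than a handful of numbers.

```python
# Hypothetical unit library: each speech sound maps to a short list of
# audio samples. The labels and numbers are placeholders, not real data.
UNIT_LIBRARY = {
    "s": [0.1, 0.3, 0.1],
    "ih": [0.5, 0.7, 0.5],
    "r": [0.2, 0.4],
    "iy": [0.6, 0.8, 0.6],
}


def synthesize(sounds):
    """Concatenate the stored samples for each sound, in order."""
    waveform = []
    for sound in sounds:
        # One fixed recording per sound, regardless of context.
        waveform.extend(UNIT_LIBRARY[sound])
    return waveform


siri = synthesize(["s", "ih", "r", "iy"])
print(len(siri), "samples")
```

Because each sound has exactly one recording, it comes out the same way no matter where it falls in a sentence, which is roughly why the results sound stilted.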

The new way, like everything else these days, involves machine learning. Apple detailed the technique earlier in the year (and even published it), but it’s worth recounting here. First Apple recorded more than 20 hours of a “new voice talent” performing tons of scripted speech: books, jokes, answers to questions.

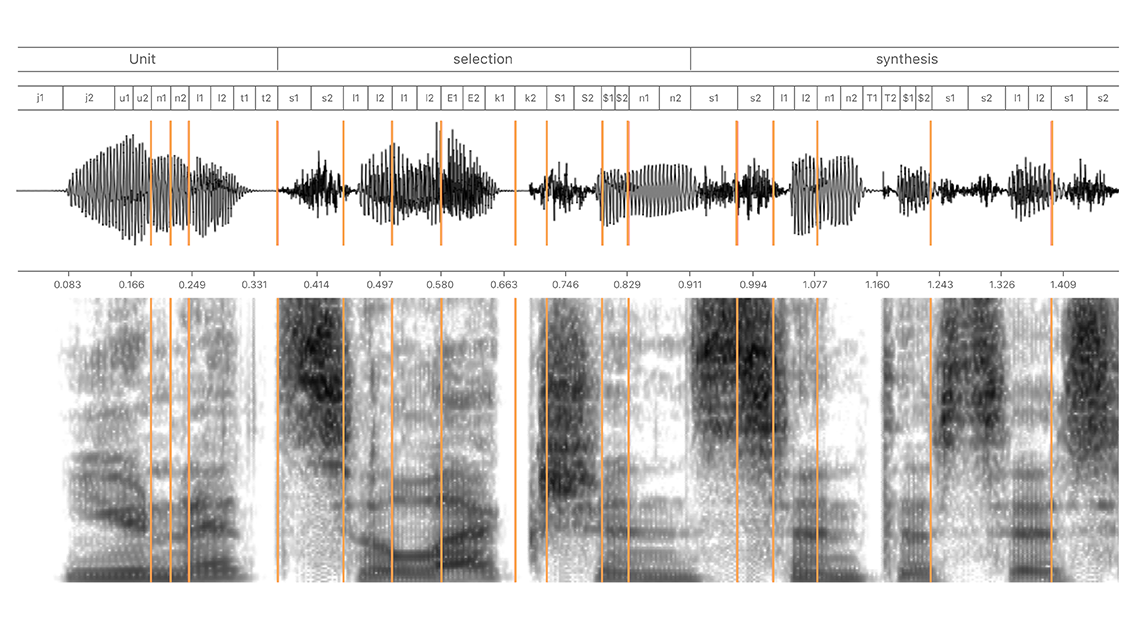

That speech was then segmented into tiny pieces called half-phones; phones are the smallest sounds that make up speech, but of course they can be said in different ways — rising, falling, quicker, slower, with more or less aspiration, that kind of thing. Half-phones… well, obviously, they’re half a phone.

All these tiny sound pieces were run through a machine learning model that figures out more or less which piece makes sense in which situation: this type of “er” sound when starting a sentence, that type when ending one. (Google’s WaveNet did something like this by reconstructing the voice sample by sample; Apple’s researchers acknowledge that work but point out that it isn’t really practical.)
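Picking "which piece makes sense in which situation" can be sketched as a search for the cheapest chain of recordings. The sketch below is not Apple's implementation: the `Unit` fields, the two cost functions and the toy inventory are all hypothetical, and the hand-written costs merely stand in for whatever the learned model would predict.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    sound: str     # which sound this recording contains
    position: str  # context it was recorded in: "start" or "end"
    pitch: float   # average pitch in Hz (placeholder values)


def target_cost(unit, wanted_position):
    # Hypothetical stand-in for the learned model: penalize a context mismatch.
    return 0.0 if unit.position == wanted_position else 1.0


def join_cost(prev, cur):
    # Hypothetical: adjacent pieces with similar pitch join more smoothly.
    return abs(prev.pitch - cur.pitch) / 100.0


def select_units(targets, inventory):
    """Return the lowest-cost unit sequence for a list of (sound, position)."""
    layers = []  # per step: {unit: (best total cost so far, previous unit)}
    for i, (sound, position) in enumerate(targets):
        candidates = [u for u in inventory if u.sound == sound]
        if not candidates:
            raise ValueError(f"no recording for sound {sound!r}")
        layer = {}
        for unit in candidates:
            cost = target_cost(unit, position)
            if i == 0:
                layer[unit] = (cost, None)
            else:
                # Cheapest way to arrive at this unit from the previous step.
                prev, arrive = min(
                    ((p, c + join_cost(p, unit)) for p, (c, _) in layers[-1].items()),
                    key=lambda pair: pair[1],
                )
                layer[unit] = (cost + arrive, prev)
        layers.append(layer)

    # Backtrack from the cheapest final unit.
    unit = min(layers[-1], key=lambda u: layers[-1][u][0])
    path = []
    for layer in reversed(layers):
        path.append(unit)
        unit = layer[unit][1]
    return path[::-1]


inventory = [
    Unit("h", "start", 120.0),
    Unit("h", "end", 100.0),
    Unit("er", "start", 125.0),
    Unit("er", "end", 98.0),
]
chosen = select_units([("h", "start"), ("er", "end")], inventory)
print([(u.sound, u.position) for u in chosen])
```

Here the sentence-final “er” recording wins even though the sentence-initial one is closer in pitch to the preceding sound, because the context mismatch costs more than the slightly rougher join.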

The resulting voice system, while still synthetic, sounds less robotic and more lifelike, in part because the new speaker seems to be a bit more energetic to begin with — but also because it incorporates all her little idiosyncrasies, those of a real voice speaking sentences the speaker understands.

In fact, it incorporates those idiosyncrasies so completely that Molly Babel, a speech expert consulted by Popular Science, instantly pinpointed where Siri is “from.”

“She is textbook Californian,” Babel said. Well, what were you expecting?